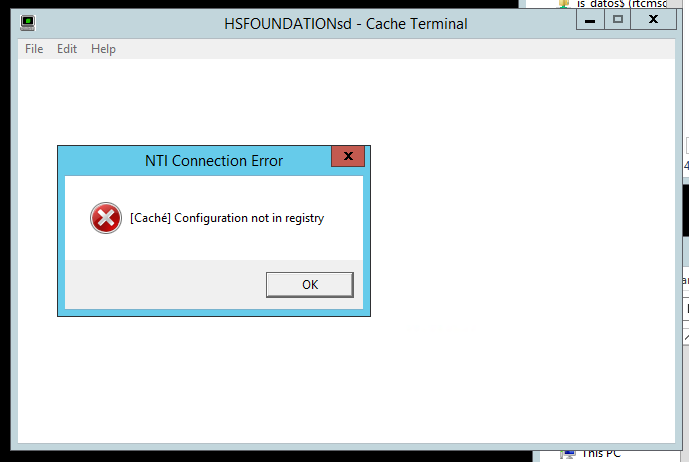

I want to suppress the pop-up error messages, or redirect them to a file, when a terminal launched from a batch file fails to open. In that example, the instance name was wrong. Is there a silent mode, or any other way to avoid these messages?