Hello,

We are wondering how we could send an HL7 message to a service in order to test it.

For example, we have a service listening on localhost, port 19111.
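For reference, the following is a minimal sketch of sending a message to such a service from a script instead of an HTTP tool. It assumes the service is an HL7 TCP service that expects standard MLLP framing (a start byte `0x0B` before the message and `0x1C 0x0D` after it), which is a common default for HL7 over TCP. It also assumes UTF-8 encoding. The function names, the 10-second timeout and the encoding are illustrative choices, not taken from the service configuration.

```python
import socket

# MLLP (Minimal Lower Layer Protocol) envelope bytes
START_BLOCK = b"\x0b"    # <VT>
END_BLOCK = b"\x1c\x0d"  # <FS><CR>


def mllp_frame(message: str, encoding: str = "utf-8") -> bytes:
    """Wrap an HL7 v2 message (segments separated by \\r) in an MLLP envelope."""
    return START_BLOCK + message.encode(encoding) + END_BLOCK


def mllp_unframe(data: bytes, encoding: str = "utf-8") -> str:
    """Strip the MLLP envelope from a received block of bytes."""
    start = data.find(START_BLOCK)
    end = data.find(END_BLOCK, start + 1)
    if start == -1 or end == -1:
        raise ValueError("incomplete MLLP frame")
    return data[start + 1:end].decode(encoding)


def send_hl7(message: str, host: str = "localhost", port: int = 19111,
             timeout: float = 10.0) -> str:
    """Send one framed message over raw TCP and return the unframed reply (e.g. an ACK)."""
    with socket.create_connection((host, port), timeout=timeout) as sock:
        sock.sendall(mllp_frame(message))
        buffer = b""
        # Keep reading until the end-of-block marker arrives or the peer closes
        while END_BLOCK not in buffer:
            chunk = sock.recv(4096)
            if not chunk:
                break
            buffer += chunk
    return mllp_unframe(buffer)
```

A call would look like `send_hl7("MSH|^~\\&|TEST|TEST|HIS|HIS|20240101120000||ADT^A01|MSG0001|P|2.5\rPID|1||12345")`, where the message content is a hypothetical placeholder. The key difference from the SoapUI and Postman attempts is that this writes the framed bytes directly onto a TCP socket, with no HTTP request line or headers. If the service is configured with a different framing or character set, the envelope bytes and encoding would need to be adjusted to match.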

We have tried to use SoapUI to send a REST POST request as follows:


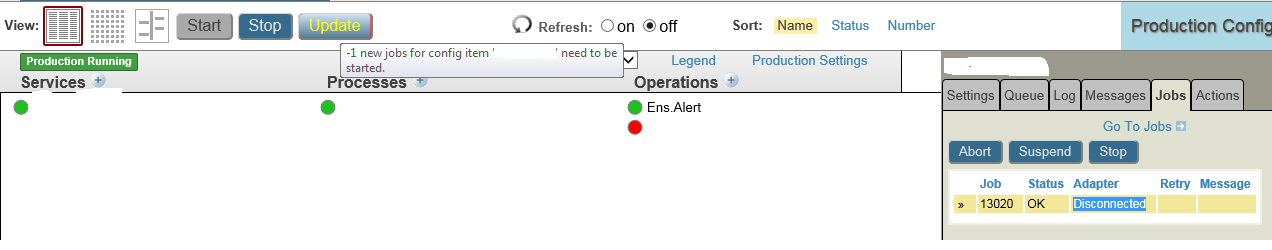

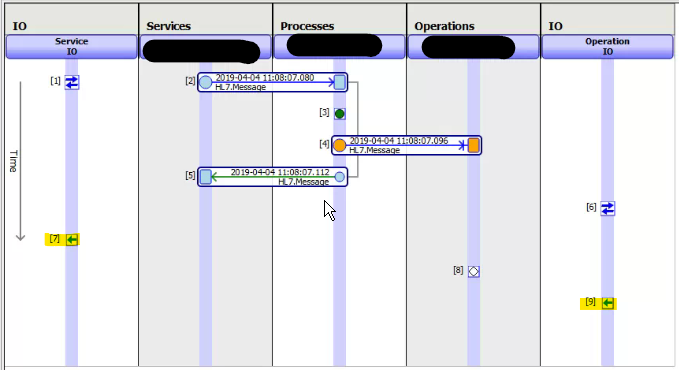

In the event log we see the following trace:

Then, if we try with Postman as follows:

We observe:

Is the only way to test a service to point an HL7 TCP operation at it and send messages using the Test Action?

To clarify, we are currently testing manually by sending messages from the operation "ProbarADTs" to the service "Servicios.TCP.GeneralHospitalADT":

How could we do it from SoapUI or Postman?
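Whichever client ends up sending the message, a test usually also needs to check the service's reply. The sketch below assumes the service is configured to return an HL7 v2 ACK and reads the acknowledgment code from MSA-1 (`AA`/`CA` accepted, `AE`/`CE` error, `AR`/`CR` rejected). The helper names are hypothetical.

```python
def ack_code(reply: str) -> str:
    """Return MSA-1 from an HL7 v2 ACK whose segments are separated by \\r (or \\n)."""
    for segment in reply.replace("\n", "\r").split("\r"):
        fields = segment.split("|")
        # For MSA, fields[1] is MSA-1 (the acknowledgment code)
        if fields[0] == "MSA" and len(fields) > 1:
            return fields[1]
    raise ValueError("no MSA segment found in reply")


def is_accepted(reply: str) -> bool:
    """True if the ACK signals acceptance (original or enhanced mode)."""
    return ack_code(reply) in ("AA", "CA")
```

This only inspects the acknowledgment code; a fuller test might also compare MSA-2 with the MSH-10 control ID of the message that was sent.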
