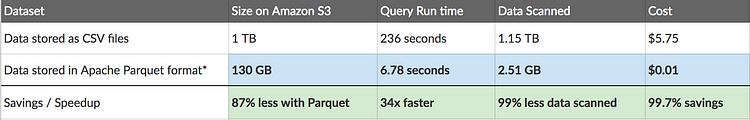

Modern data architectures utilize real-time data capture, transformation, movement, and loading solutions to build data lakes, analytical warehouses, and big data repositories. It enables the analysis of data from various sources without impacting the operations that use them. To achieve this, establishing a continuous, scalable, elastic, and robust data flow is essential. The most prevalent method for that is through the CDC (Change Data Capture) technique. CDC monitors for small data set production, automatically captures this data, and delivers it to one or more recipients, including analytical data repositories. The major benefit is the elimination of the D+1 delay in analysis, as data is detected at the source as soon as it is produced, and later is replicated to the destination.

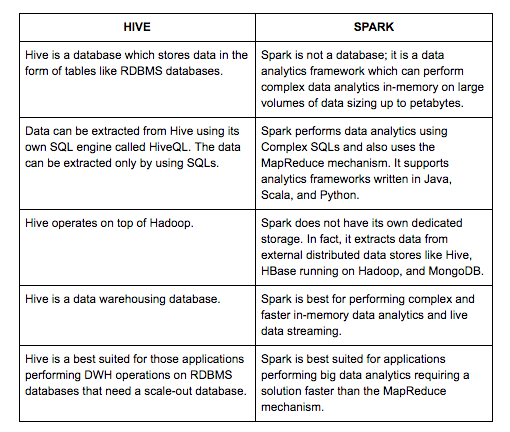

This article will demonstrate the two most common data sources for CDC scenarios, both as a source and a destination. For the data source (origin), we will explore the CDC in SQL databases and CSV files. For the data destination, we will use a columnar database (a typical high-performance analytical database scenario) and a Kafka topic (a standard approach for streaming data to the cloud and/or to multiple real-time data consumers).

Overview

This article will provide a sample for the following interoperability scenario:

.png)

.png)