Hi everyone! 👋

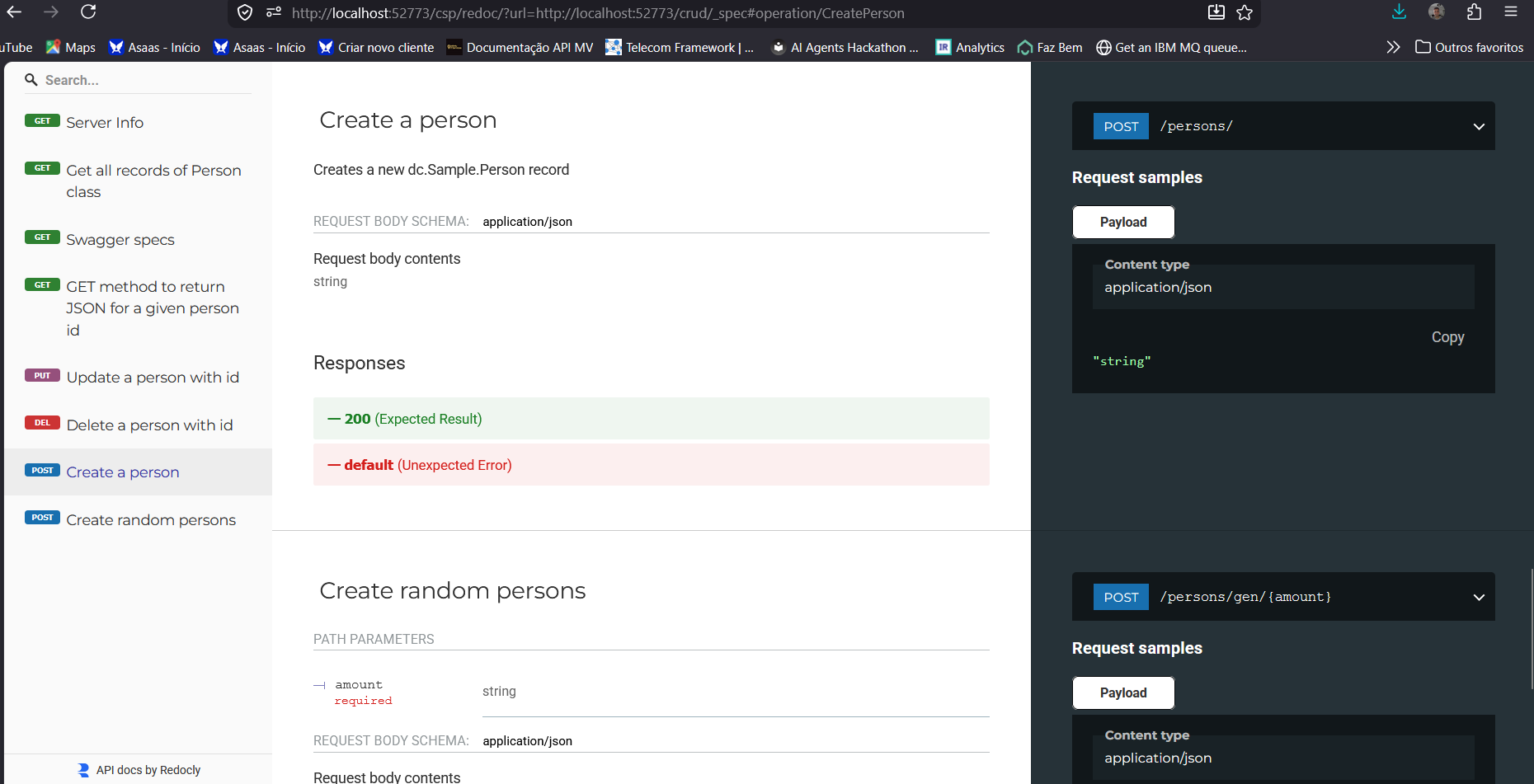

I’m excited to share the project I’ve submitted to the current InterSystems .Net, Java, Python, and JavaScript Contest. It’s called IRIStool and Data Manager, and you can find it on the InterSystems Open Exchange and on my GitHub page.
