Hi, Community!

Check out a new session recording from Global Summit 2017:

iKnow What You'll Do Next Summer

InterSystems IRIS Natural Language Processing (NLP), formerly known as iKnow, allows you to perform text analysis on unstructured data sources in a variety of natural languages without any prior knowledge of their content. It does this by applying language-specific rules that identify semantic entities. Because these rules are specific to the language, not the content, NLP can provide insight into the contents of texts without the use of a dictionary or ontology.

You may have already covered this elsewhere, but how does one insert "Relevant Articles" in a post?

This summer the Database Platforms department here at InterSystems tried out a new approach to our internship program. We hired 10 bright students from some of the top colleges in the US and gave them the autonomy to create their own projects which would show off some of the new features of the InterSystems IRIS Data Platform. The team consisting of Ruchi Asthana, Nathaniel Brennan, and Zhe “Lily” Wang used this opportunity to develop a smart review analysis engine, which they named Lumière.

Hi,

Has anyone ever estimated the amount of disk space consumed by the iKnow indexing process? I know this will be a rough estimate, but I imagine that would be enough for sizing purposes.

The unstructured text is in English.

Thanks in advance,

Steve

InterSystems' iKnow technology allows you to identify the concepts in natural language texts and the relations that link them together. As that's still a fairly abstract definition, we produced this video to explain what that means in more detail. But when meeting with customers, what really counts is a compelling demonstration, on data that makes sense to them, so they understand the value in identifying these concepts over classic top-down approaches. That's why it's probably worth spending a few articles on some of the demo apps and tools we've built to work with iKnow.

In the first article in this series, we'll start with the Knowledge Portal, a simple query interface to explore the contents of your domain.

In my Caché Studio I couldn't find an iKnow namespace, so how can I check whether my Studio version is compatible with the one I am using now? If I don't have one, can I create a new namespace in Studio?

I have a class with a text property, which contains HTML text (usually fragments, so it may be invalid). Here's a sample value:

<div moreinfo="none">Word1 Word2</div><br> <a href = "123" >Word3</a>

When I add an iFind index on the text property, there are at least two problems:

How can I pass plain text into the iFind index?

Can we use iKnow in Caché without DeepSee, for example to work through some sample programs? I don't have DeepSee available.

Also, is there an open source version of DeepSee available for download?

Hi Community!

Enjoy the video of the week about InterSystems iKnow Technology:

A Cure for Clinician Frustration

It is mentioned that, in iKnow, Japanese words cannot be used in CRC and CC. If one needs to use iKnow for Japanese text, how do concepts and relations work for it?

Can anyone explain iKnow technology? And why does iKnow use a "bottom-up" approach?

Hi, Community!

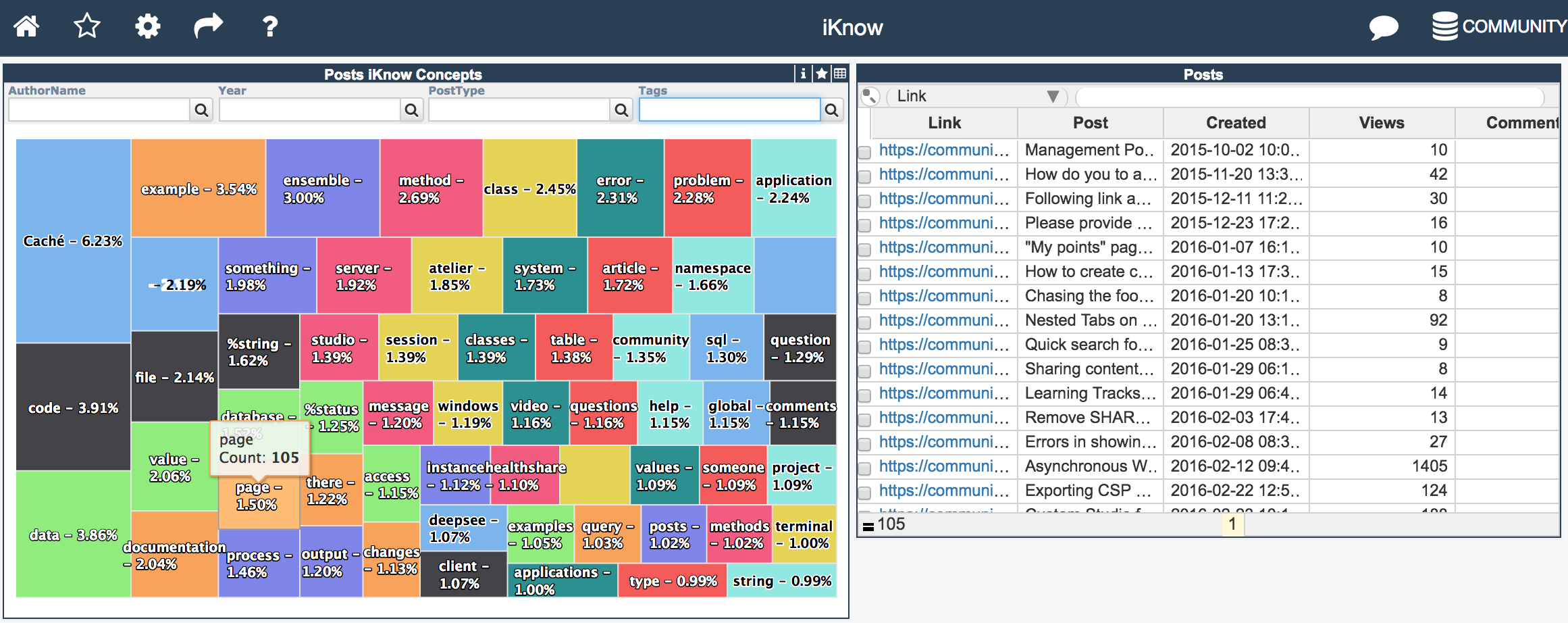

We hope you know and use the Developer Community Analytics page, which is built with InterSystems DeepSee and DeepSee Web.

We have been experimenting with InterSystems iKnow analytics on Developer Community posts and have introduced a new dashboard, which shows the top 60 concepts across all posts:

If you've worked with iKnow domain definitions, you know they allow you to easily define multiple data locations iKnow needs to fetch its data from when building a domain. If you've worked with DeepSee cube definitions, you'll know how they tie your cube to a source table and allow you to not just build your cube, but also synchronize it, only updating the facts that actually changed since the last time you built or synced the cube. As iKnow also supports loading from non-table data sources like files, globals and RSS feeds, the same tight synchronization link doesn't come out of the box. In this article, we'll explore two approaches for modelling DeepSee-like synchronization from table data locations using callbacks and other features of the iKnow domain definition infrastructure.

After a five-part series on sample iKnow applications (parts 1, 2, 3, 4, 5), let's turn to a new feature coming up in 2017.1: the iKnow REST APIs, allowing you to develop rich web and mobile applications. Where iKnow's core COS APIs already had 1:1 projections in SQL and SOAP, we're now making them available through a RESTful service as well, in which we're trying to offer more functionality and richer results with fewer buttons and fewer method calls. This article will take you through the API in detail, explaining the basic principles we used when defining them and exploring the most important ones to get started.

This earlier article already announced the new iKnow REST APIs that are included in the 2017.1 release, but since then we've added extensive documentation for those APIs through the OpenAPI Specification (aka Swagger), which you'll find in the current 2017.1 release candidate. Without wanting to repeat much detail on how the APIs are organised, this article will show you how you can consult that elaborate documentation easily with Swagger-UI, an open source utility that reads OpenAPI specs and uses them to generate a very helpful GUI on top of your API.

Hello!

My group and I are currently doing a research project on natural language processing, and iKnow plays a big role in this project. I am aware that the algorithms iKnow uses aren't public, and I respect that.

My question is: are there any public documents or research that explain, at least in part, the algorithms iKnow uses and the motivations for using them?

Here is a concrete example: We are using GetSimilar() for many of our results and it works very well.

The iKnow documentation shows an example for adding sources to a domain after an initial loading of sources.

The example uses text files. However, our data is now in Cache SQL tables.

Is it possible to add sources from a Cache SQL table, and is there an example of how this is done?

Thank you.

This article contains the tutorial document for a Global Summit academy session on Text Categorization and provides a helpful starting point to learn about Text Categorization and how iKnow can help you to implement Text Categorization models. This document was originally prepared by Kerry Kirkham and Max Vershinin and should work based on the sample data provided in the SAMPLES namespace.

A group of students at the Chalmers University of Technology (Gothenburg, Sweden) tried different approaches to automatically rating the quality of emergency calls, including iKnow.

Excerpt: "The most impressive results produced by iKnow is its ability to correctly classify 100% of the calls using the Average algorithm. This is quite surprising since iKnow only compares low-level concepts, how words relates to each other."

Full story: http://publications.lib.chalmers.se/records/fulltext/244534/244534.pdf

Hi,

I am using iKnow text categorization to classify texts. I have 11 medical articles as my training set. Here is part of the source code:

ListerAndLoader

SET domId=domoref.Id

SET flister=##class(%iKnow.Source.File.Lister).%New(domId)

SET myloader=##class(%iKnow.Source.Loader).%New(domId)

UseLister

SET dirpath = "D:\iKnowTestCase\SmallDataBase\Medical"

SET stat = myloader.SetLister(flister)

SET stat = myloader.ProcessList(dirpath,$LB("txt"),0,"")

IF $System.Status.IsError(stat) {WRITE "The lister failed: ",$System.Status.GetErrorText(stat) QUIT }

TrainingSet

SET tTrainingSet = ##class(%iKnow.Filters.

How can I retrieve unstructured data using iKnow concepts in Caché? Could you give a real-world example of these concepts?

In a conference call earlier this week, a customer described how they built an iKnow domain with clinical notes and now wanted to filter the contents of that domain based on the patient's diagnosis codes. With such filters, they wanted to explore the correlations between iKnow entities and certain diagnosis codes, first through the Knowledge Portal to get a good sense of the sort of entities and then through more analytical means with the aim of eventually building smart early warning systems.

This is the fourth article in a series on iKnow demo applications, showcasing how the concepts and context provided through iKnow's unique bottom-up approach can be used to implement relevant use cases and help users be more productive in their daily tasks. Previous articles discussed the Knowledge Portal, the Set Analysis Demo and the Dictionary Builder Demo, each of which gradually implemented slightly more advanced interactions with what iKnow gleans from unstructured data.

This week, we'll look into one more demo application, the Rules Builder Demo, in which we'll build on previous work but climb one more step up the ladder, implementing a higher-level use case than in the previous ones. The idea came from an opportunity where we were asked to help a customer in the finance sector make sense of vast volumes of contract data. They wanted to semi-automate the extraction of logical rules from that text (in fluent legalese!), so they could be fed into other systems. While this was an exciting use case to work on (and more on it in this GS2016 presentation), we've also used it in other cases, for example to extract mentions of ejection fraction from Electronic Health Records.

This is the third article in a series on iKnow demo applications, showcasing how the concepts and context provided through iKnow's unique bottom-up approach can be used to implement relevant use cases and help users be more productive in their daily tasks. Previous articles discussed the Knowledge Portal, a straightforward tool to browse iKnow indexing results, and the Set Analysis Demo, in which you can use the output of iKnow indexing to organize your texts according to their content, such as in patient cohort selection.

This week, we'll look into another demo application, the Dictionary Builder demo, in which we'll marry iKnow's bottom-up insights with top-down expertise, organizing our domain knowledge into dictionaries that are composed of the actual terms used in the data itself. Sticking to a top-down approach only, you'd risk missing out on some terminology used in the field that a domain expert sitting in his office wouldn't be aware of.

In previous articles on iKnow, we described a number of demo applications (iKnow demo apps parts 1, 2, 3, 4 & 5) that are either part of the regular kit or can be easily installed from GitHub. All of those applications assumed you already had your iKnow domain ready, with your data of interest loaded and ready for exploration. In this article, we'll shed more light on how exactly you can get to that stage: how you define and then build a domain.

Earlier in this series, we presented four different demo applications for iKnow, illustrating how its unique bottom-up approach allows users to explore the concepts and context of their unstructured data and then leverage these insights to implement real-world use cases. We started small and simple with core exploration through the Knowledge Portal, then organized our records according to content with the Set Analysis Demo, organized our domain knowledge using the Dictionary Builder Demo and finally built complex rules to extract nontrivial patterns from text with the Rules Builder Demo.

This time, we'll dive into a different area of the iKnow feature set: iFind. Where iKnow's core APIs are all about exploration and leveraging those results programmatically in applications and analytics, iFind is focused specifically on search scenarios in a pure SQL context. We'll be presenting a simple search portal implemented in Zen that showcases iFind's main features.

Sentiment Analysis is a thriving research area in the broader context of big data, with many small as well as large vendors offering solutions extracting sentiment scores from free text. As sentiment is highly dependent on the subject a piece of text is about (financial news vs tweets about the latest computer game), most of these solutions are targeted at specific markets and/or focus on a given type of source data, such as social media content.

I have a class which, in the previous instance, was able to extract metadata field names and data from a text file, and load this information into a domain. I am trying to run this in the field test instance, but it is not loading the metadata - only the field names. I am not getting an error, but the data is not loaded.

The few changes I made to the original class:

Previously, this class also ran iTables. I commented all that code out.

To create the domain, I replaced the line:

set tSC = ##class(HSTA.DomainExpert.utils.DomainUtils).%CreateDomain(dname,.

Presenter: Danny Wijnschenk

Task: Help people make better decisions by letting the application deal with all the data.

Approach: As an example, we’ll extend a demo asset management application for portfolio and trade compliance, using iKnow technology to translate agreements into rules that ensure portfolio compliance prior to trade execution.

In this session, we’ll discuss how easy it is to extend a classic application that deals with straightforward transactions, to also offer insights and actions based on more complex, unstructured data. We’ll present a use case on portfolio compliance from the financial services industry.

Content related to this session, including slides, video and additional learning content can be found here.

Presenter: Benjamin De Boe

Task: Extract specialized information from your unstructured data

Approach: Combine InterSystems iKnow technology with third-party and custom text-processing tools

This session explains how you can easily combine ISC, third-party and custom text processing tools to get the broadest insights in your unstructured data.

Content related to this session, including slides, video and additional learning content can be found here.