Hey Community!

We're happy to share the next video in the "Code to Care" series on our InterSystems Developers YouTube:

Artificial Intelligence (AI) is the simulation of human intelligence processes by machines, especially computer systems. These processes include learning (the acquisition of information and rules for using the information), reasoning (using rules to reach approximate or definite conclusions) and self-correction.

A Continuous Training (CT) pipeline formalises a Machine Learning (ML) model developed through data science experimentation, using the data available at a given point in time. It prepares the model for deployment while enabling autonomous updates as new data becomes available, along with robust performance monitoring, logging, and model registry capabilities for auditing purposes.

InterSystems IRIS already provides nearly all the components required to support such a pipeline. However, one key element is missing: a standardised tool for model registry.
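To make the model registry gap more concrete, here is a minimal in-memory sketch of what such a registry would need to track for auditing: every trained version, its metrics, its training time, and which version is currently in production. The class and method names (`ModelRegistry`, `register`, `promote`, `production`) are hypothetical and are not part of any IRIS API; a real registry would persist these records in a database rather than in memory.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class ModelVersion:
    """One trained model plus the metadata an auditor would ask for."""
    name: str
    version: int
    metrics: dict
    trained_at: str
    stage: str = "candidate"  # either "candidate" or "production"


@dataclass
class ModelRegistry:
    """Hypothetical in-memory registry; a real one would persist its records."""
    versions: dict = field(default_factory=dict)  # model name -> list of ModelVersion

    def register(self, name: str, metrics: dict) -> ModelVersion:
        # Each retraining run appends a new version record to the history.
        history = self.versions.setdefault(name, [])
        entry = ModelVersion(
            name=name,
            version=len(history) + 1,
            metrics=dict(metrics),
            trained_at=datetime.now(timezone.utc).isoformat(),
        )
        history.append(entry)
        return entry

    def promote(self, name: str, version: int) -> None:
        # At most one version per model is marked as production at a time.
        for entry in self.versions[name]:
            entry.stage = "production" if entry.version == version else "candidate"

    def production(self, name: str):
        # Return the version currently serving, or None if nothing was promoted.
        for entry in self.versions.get(name, []):
            if entry.stage == "production":
                return entry
        return None
```

A continuous training run would call `register` after each retraining and `promote` only once the new version passes its performance checks, so the history doubles as an audit log.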

Hi folks,

One of the things I've been thinking about recently: how strongly AI is changing my work as an ObjectScript developer right now! Yeah, yeah, I know I'm not very fast, it should have happened 2-3 years ago... But it's only now that I've realized that I no longer write code all workday like before.

Why do we need this?

Lack of Compiled Context: AI tools only see source code; they don't know what the final compiled routine looks like.

Macro Hallucination: Because AI doesn't see our #include files or system macros, it often makes them up, wasting time during debugging.

The Documentation Gap: Deep logic optimization often requires understanding internal macros that aren't fully covered in public documentation.

Manual Overhead: Currently, the only way to fix this is to manually use the IRIS VS Code extension to find the "truth" in the routine.

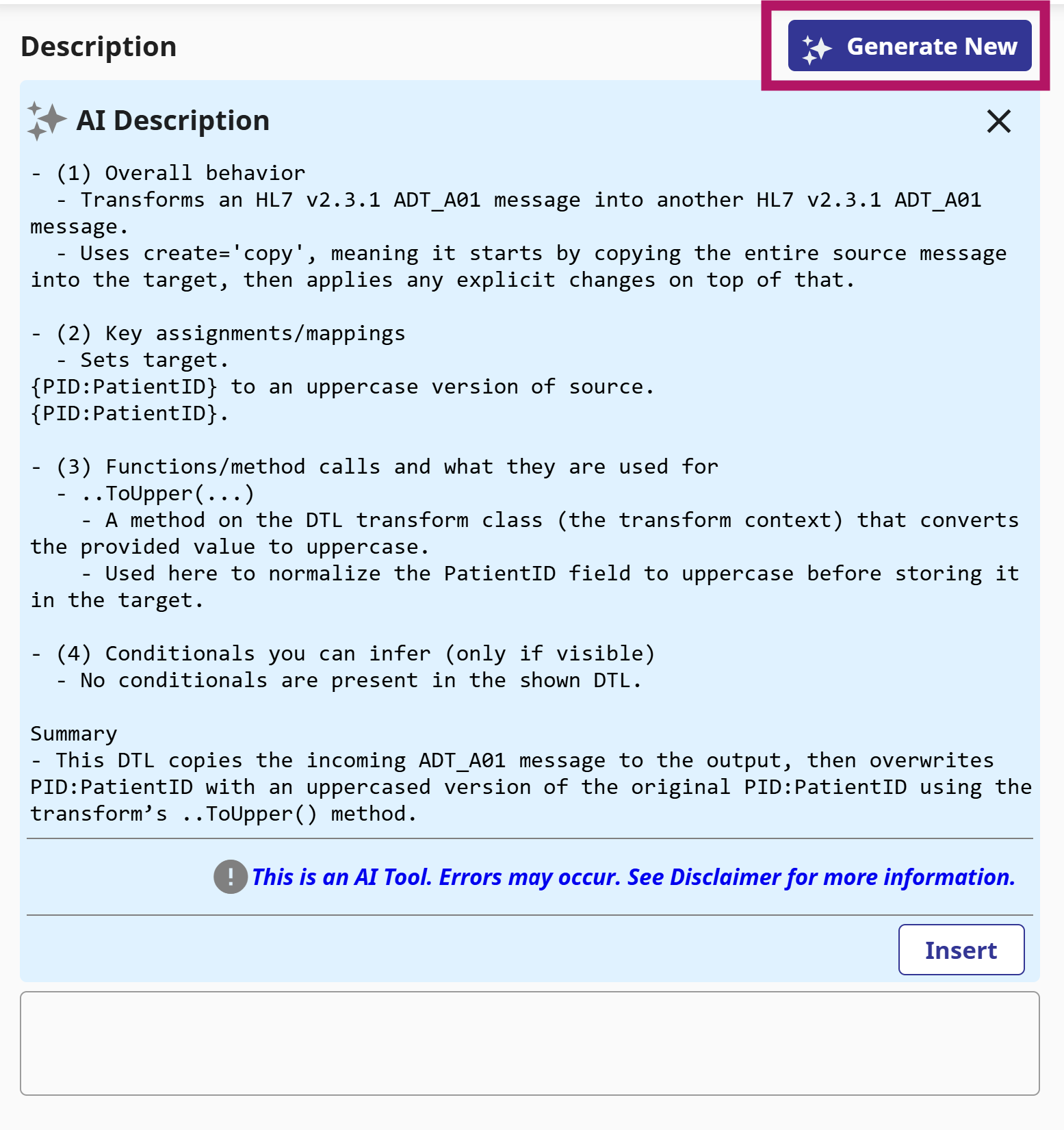

v2026.1 was just released as GA, and one of the features I'm looking forward to using is the DTL Explainer feature.

This allows you to take a Data Transformation, and with a click of a button get a human-readable description of the transformation (which you can also use as the basis for the DTL Description).

For complex DTLs, especially ones you didn't write yourself, or wrote a long time ago, this will give you a clear, quick understanding of what they are doing.

Hi Community,

Enjoy the new video on InterSystems Developers YouTube:

Keywords: Vibe coding, Windsurf, IRIS, TIE

Has anyone not been trying "vibe coding" so far?

Even merely 3 years ago, if anyone asked

Keywords: IRIS, Agents, Agentic AI, Smart Apps

Transformer-based LLMs appear to be a pretty good "universal logical-symbolic abstractor". They have started to bridge the former abyss between human languages and machine languages, which in essence are all logic symbols that could be mapped into the same vector space.

For 3 years I have been wondering whether we might one day be able to use just English (or other human natural languages) to do IRIS implementations as well.

You’ve seen how tools like Lovable are shaking up web development. People are spinning up entire apps just by talking to an AI, almost like pair‑programming on steroids.

Now imagine bringing that same “vibe coding” experience into healthcare. I know, it sounds crazy. Healthcare is complex, full of regulations, and usually gives us a headache just thinking about the interoperability rules.

That’s exactly the space where withLove lives: an AI‑Native, Low‑Code platform built entirely on InterSystems IRIS for Health.

Hi developers!

I'm testing vibecoding with ObjectScript and my silicon friend created a code-block that got me thinking "what's wrong"?

Here is the piece of code:

for i=0:1:(json.%Size()-1) {

set p = json.%Get(i)

if (p="value1")!(p="value2") {

quit 1

}

AI wanted to quit from a method with a return value. Good intention, but bad use of the command.

And the ObjectScript compiler compiles this code with no error(?) (the syntax linter in VS Code says it's a syntax error, kudos @Brett Saviano).

But in action, it produces <COMMAND>, of course.
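For reference, the intent behind the generated snippet is "leave the whole method with a value as soon as a match is found". In ObjectScript an argumented `quit` is not allowed inside a `for` block, which is what triggers <COMMAND>; the `return` command is the usual way to exit a method with a value from inside a loop. The Python sketch below only illustrates that intended control flow. The function name `contains_target` is hypothetical and the JSON array is passed as text for simplicity.

```python
import json


def contains_target(json_text: str) -> int:
    """Return 1 if the JSON array holds "value1" or "value2", else 0."""
    items = json.loads(json_text)
    for p in items:
        if p == "value1" or p == "value2":
            # Leaving the whole function from inside the loop is the intent
            # that `quit 1` could not express in the original snippet.
            return 1
    return 0
```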

Welcome to the finale of our journey in building MAIS.

Now, the stage is set. Our agents are ready, defined, and eager to work. However, they remain frozen in time. They require a mechanism to drive the conversation, execute their requested tools, and pass the baton to one another.

Today, we will assemble the Nervous System
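The three responsibilities named above (driving the conversation, executing requested tools, and passing the baton between agents) can be sketched as a small loop. This is only an illustration under stated assumptions, not the MAIS implementation: agents are modeled as plain callables that return a dict with an `action` key, and the names `run_conversation`, `agents`, `tools`, and `max_turns` are all hypothetical.

```python
def run_conversation(agents: dict, tools: dict, start: str, message: str, max_turns: int = 10):
    """Drive agents until one returns a final answer or max_turns is reached."""
    current, transcript = start, []
    for _ in range(max_turns):
        step = agents[current](message)
        transcript.append((current, step))
        if step["action"] == "tool":
            # Execute the requested tool and feed its result back to the same agent.
            message = tools[step["name"]](step["input"])
        elif step["action"] == "handoff":
            # Pass the baton: another agent continues with the forwarded message.
            current, message = step["to"], step["message"]
        else:
            # Any other action is treated as the final answer.
            return step["answer"], transcript
    raise RuntimeError("conversation did not finish within max_turns")
```

The turn limit is a deliberate design choice: without it, two agents that keep handing off to each other would loop forever.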

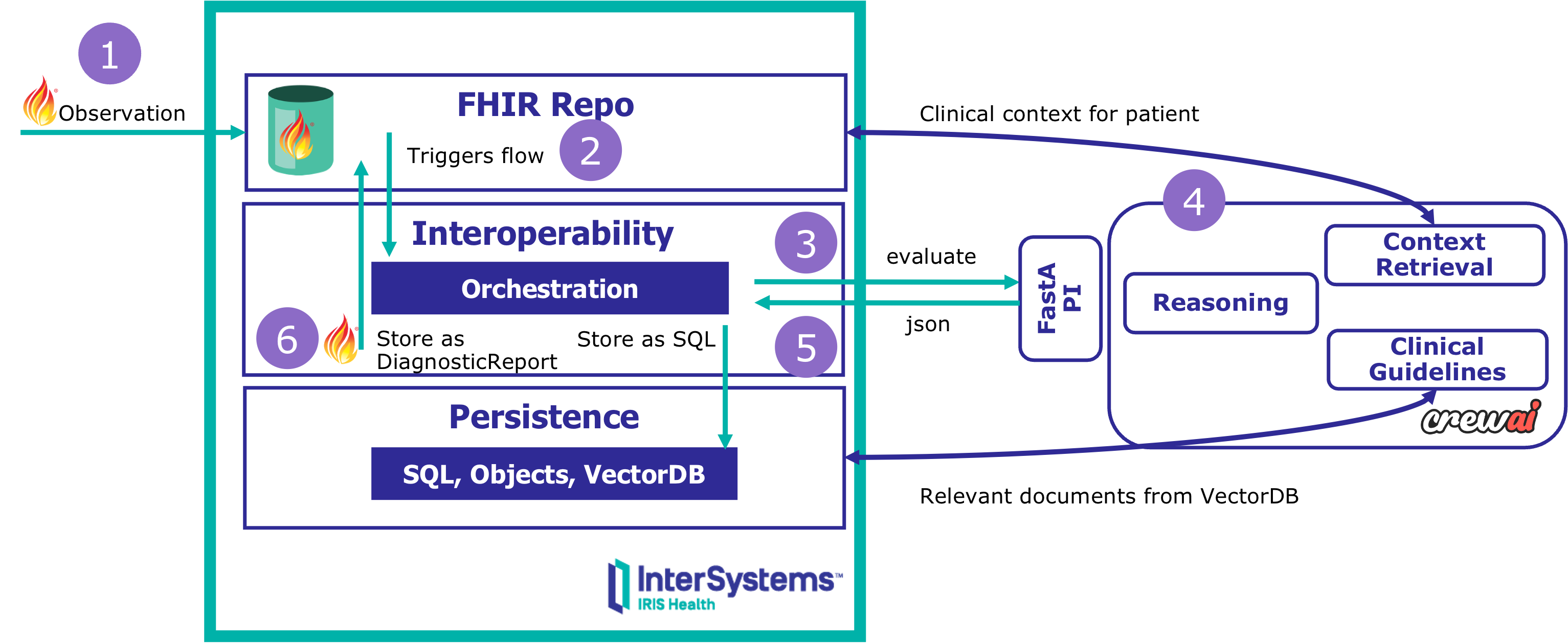

Ever since I started using IRIS, I have wondered if we could create agents on IRIS. It seemed obvious: we have an Interoperability GUI that can trace messages, and we have an underlying object database that can store SQL, Vectors and even Base64 images. We currently have a Python SDK that allows us to interface with the platform using Python, but it is not particularly optimized for developing agentic workflows. This was my attempt to create a Python SDK that can leverage several parts of IRIS to support development of agentic systems.

10:47 AM — Jose Garcia's creatinine test results arrive at the hospital FHIR server. 2.1 mg/dL — a 35% increase from last month.

What happens next?

No chatbot. No manual prompts. No black-box reasoning.

This is event-driven clinical decision support with full explainability:

✅ Triggered automatically by FHIR events

✅ Multi-agent reasoning (context, guidelines, recommendations)

✅ Complete audit trail in SQL (every decision, every evidence source)

✅ FHIR-native outputs (DiagnosticReport published to server)

Built with:

You'll learn: 🖋️ How to orchestrate agentic AI workflows within production-grade interoperability systems — and why explainability matters more than accuracy alone.
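As a small worked example of the trigger condition in the scenario above: a result of 2.1 mg/dL after a 35% increase implies a previous value of about 2.1 / 1.35 ≈ 1.56 mg/dL. The sketch below shows how an event handler might compute that change and decide whether to start the agent workflow. The function names and the `threshold_pct` parameter are hypothetical and purely illustrative; they are not taken from the article and are not clinical guidance.

```python
def percent_change(previous: float, current: float) -> float:
    """Relative change from previous to current, in percent."""
    if previous <= 0:
        raise ValueError("previous value must be positive")
    return (current - previous) / previous * 100.0


def should_trigger(previous: float, current: float, threshold_pct: float) -> bool:
    # threshold_pct is an illustrative parameter, not a clinical guideline.
    return percent_change(previous, current) >= threshold_pct


# 2.1 mg/dL after a 35% rise implies a previous value of 2.1 / 1.35 mg/dL.
previous_mg_dl = 2.1 / 1.35
current_mg_dl = 2.1
```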

In Part 1, we laid the technical foundation of MAIS (Multi-Agent Interoperability Systems). We successfully wired up the 'Brain', built a robust Adapter using LiteLLM, locked down our API keys with IRIS Credentials, and finally cracked the code on the Python interoperability puzzle.

However, right now our system is merely a raw pipe to an LLM. It processes text, but it lacks identity.

Today, in Part 2, we will define the Anatomy of an Agent. We will move from simple API calls to structured Personas.

Some concepts make perfect sense on paper, whereas others require you to get your hands dirty. Take driving, for example. You can memorize every component of the engine mechanics, but that does not mean you can actually drive.

You cannot truly grasp it until you are in the driver's seat, physically feeling the friction point of the clutch and the vibration of the road beneath. While some computing concepts are intuitive, Intelligent Agents are different. To understand them, you have to get in the driver's seat.

Hi everyone.

I'm going to give you a quick tip on how to implement an AI agent, integrated into Teams, that searches the InterSystems documentation.

Yes, I know the documentation page has its own AI search engine and it's quite effective, but this way we'd have faster access, especially if Teams is your company's corporate tool.

You can also create another AI agent to search articles published in the developer community (which also has its own integrated AI search engine).

Hi,

We're working on new capabilities to help you build Agents and AI applications faster with InterSystems IRIS. In order to better understand which entry points and development methodologies would help you most, we've created this brief survey: Building AI solutions with InterSystems IRIS.

Filling it in should not take much more than 5 minutes, and your feedback on this exciting topic will help us fine-tune our designs and prioritize the right features.

Thanks in advance!

benjamin

Hey Developers,

Enjoy the new video on InterSystems Developers YouTube

⏯ Integrating AI Agents into InterSystems IRIS - Patterns and Techniques @ READY 2025

Hey Community,

Enjoy the new video on InterSystems Developers YouTube:

⏯ Analytics and AI with InterSystems IRIS - From Zero to Hero @ READY 2025

Yes, yes! Welcome! You haven't made a mistake, you are in your beloved InterSystems Developer Community in Spanish.

You may be wondering what the title of this article is about. Well, it's very simple: today we are gathered here to honor the Inquisitor and praise the great work he has performed.

Perfect, now that I have your attention, it's time to explain what the Inquisitor is. The Inquisitor is a solution developed with InterSystems technology to subject public contracts published daily on the platform https://contrataciondelestado.es/ to scrutiny.

This Anthropic article made me think of several InterSystems presentations and articles on the topic of data quality for AI applications. InterSystems is right that data quality is crucial for AI. I had imagined there would be room for small errors, but this study suggests otherwise: small errors can lead to big hallucinations. What do you think of this? And how can InterSystems technology help?

In my previous article, I introduced the FHIR Data Explorer, a proof-of-concept application that connects InterSystems IRIS, Python, and Ollama to enable semantic search and visualization over healthcare data in FHIR format, a project currently participating in the InterSystems External Language Contest.

In this follow-up, we’ll see how I integrated Ollama for generating patient history summaries directly from structured FHIR data stored in IRIS, using lightweight local language models (LLMs) such as Llama 3.2:1B or Gemma 2:2B.

The goal was to build a completely local AI pipeline that can extract, format, and narrate patient histories while keeping data private and under full control.

All patient data used in this demo comes from FHIR bundles, which were parsed and loaded into IRIS via the IRIStool module. This approach makes it straightforward to query, transform, and vectorize healthcare data using familiar pandas operations in Python. If you’re curious about how I built this integration, check out my previous article Building a FHIR Vector Repository with InterSystems IRIS and Python through the IRIStool module.
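The extract, format, and narrate flow described above could look roughly like the sketch below. It is an illustration under stated assumptions rather than the FHIR Data Explorer code: the records are plain dicts instead of pandas rows loaded through IRIStool, the field names (`date`, `type`, `description`) and the helper names are hypothetical, and the request targets Ollama's default local `/api/generate` endpoint.

```python
import json
import urllib.request

OLLAMA_URL = "http://localhost:11434/api/generate"  # Ollama's default local endpoint


def format_history(records: list) -> str:
    """Turn structured rows into plain text lines a small model can narrate."""
    lines = []
    for row in sorted(records, key=lambda r: r["date"]):
        lines.append(f"- {row['date']}: {row['type']}: {row['description']}")
    return "\n".join(lines)


def build_payload(records: list, model: str = "llama3.2:1b") -> dict:
    """Build the JSON body for a non-streaming generate request."""
    prompt = (
        "Summarize this patient history in a short paragraph. "
        "Use only the facts listed.\n" + format_history(records)
    )
    # stream=False asks Ollama for a single JSON response instead of chunks.
    return {"model": model, "prompt": prompt, "stream": False}


def summarize(records: list) -> str:
    """Send the prompt to a locally running Ollama server and return its text."""
    request = urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(build_payload(records)).encode("utf-8"),
        headers={"Content-Type": "application/json"},
    )
    with urllib.request.urlopen(request) as response:
        return json.loads(response.read())["response"]
```

Because the request only ever goes to `localhost`, the patient data never leaves the machine, which matches the goal of a completely local pipeline. Calling `summarize` requires a running Ollama server with the chosen model already pulled.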

Both IRIStool and FHIR Data Explorer are available on the InterSystems Open Exchange and are part of my contest submissions. If you find them useful, please consider voting for them!

With the rapid adoption of telemedicine, remote consultations, and digital dictation, healthcare professionals are communicating more through voice than ever before. Patients engaging in virtual conversations generate vast amounts of unstructured audio data, so how can clinicians or administrators search and extract information from hours of voice recordings?

Enter IRIS Audio Query - a full-stack application that transforms audio into a searchable knowledge base.

Hey Community,

The InterSystems team put on our monthly Developer Meetup with a triumphant return to CIC's Venture Café, the crowd including both new and familiar faces. Despite the shakeup in both location and topic, we had a full house of folks ready to listen, learn, and have discussions about health tech innovation!

IRIS Audio Query is a full-stack application that transforms audio into a searchable knowledge base.

community/
├── app/                 # FastAPI backend application
├── baml_client/         # Generated BAML client code
├── baml_src/            # BAML configuration files
├── interop/             # IRIS interoperability components
├── iris/                # IRIS class definitions
├── models/              # Data models and schemas
├── twelvelabs_client/   # TwelveLabs API client
├── ui/                  # React frontend application
├── main.