I need to create a task that reads the contents of a file in the IRIS file system and stores it in a persistent database. What property type, defined in the Task, lets the user select the input directory?

Are you planning to attend InterSystems READY 2025 (June 22–25), this year's global summit?

After we rolled out a new container based on containers.intersystems.com/intersystems/irishealth:2023.1 this week, we suddenly noticed that our FHIR Repository started responding with an Error 500. This turns out to be caused by PROTECT violations on the new HSSYSLOCALTEMP namespace and database used by this version of the IRIS for Health FHIR components.

The trick to solve that is to add the "%DB_HSSYSLOCALTEMP" role to the Web Application(s) that handle FHIR requests. You can script that by running the following class method in the namespace(s) that define these Web Applications:

do ##class(HS.

Hello, community!

I am working on implementing OAuth 2.0 authentication in InterSystems IRIS and need to correctly define a CSRF token that will be validated by OAuth.Response. However, I am having trouble finding a clear method to configure the CSRF token correctly.

So far, I have tried:

- Setting the CSRF token in the request header.

- Inserting the CSRF token via InsertCookie.

Despite these attempts, I haven’t been successful. On the OAuth.Response page, the CSRF token is empty, and I get the error message “Invalid CSRF token” because the csrfToken is empty.

We have a scenario where we follow the best-practice article on using a BP and DTL to split up HL7 messages, mainly ORUs:

https://community.intersystems.com/post/splitting-oru-messages-using-ob…

It is really useful, but we have this code in many places and are trying to consolidate it in one place.

We do not want to split at the first stage in our rules, as a lot of messages go to a sink. Instead, we want the incoming HL7 message to match specific rules and be classified for certain downstream systems first, and only then be split and transformed.
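To make the idea of a single, reusable split step concrete, here is a minimal sketch in plain Python rather than the BP/DTL approach from the linked article. It assumes that each ORC or OBR segment opens a new order group and that every segment before the first group is shared header content. The function name `split_oru` and the sample message are hypothetical, and the sketch does not assign a new message control ID to each output message.

```python
def split_oru(message: str) -> list[str]:
    """Split one ORU message into one message per order group.

    Assumption: a group starts at ORC, or at OBR when the previous
    segment was not the ORC that opened the same group.
    """
    # HL7 v2 segments are separated by carriage returns; tolerate newlines too.
    segments = [s for s in message.replace("\n", "\r").split("\r") if s]
    header: list[str] = []
    groups: list[list[str]] = []
    for seg in segments:
        seg_id = seg[:3]
        follows_orc = bool(groups) and groups[-1][-1][:3] == "ORC"
        if seg_id == "ORC" or (seg_id == "OBR" and not follows_orc):
            groups.append([seg])      # open a new order group
        elif groups:
            groups[-1].append(seg)    # OBX, NTE, ... belong to the current group
        else:
            header.append(seg)        # MSH, PID, PV1, ... shared by every output
    if not groups:
        return ["\r".join(header)]    # nothing to split
    return ["\r".join(header + group) for group in groups]


if __name__ == "__main__":
    sample = "\r".join([
        "MSH|^~\\&|LAB|HOSP|EHR|HOSP|202501010000||ORU^R01|1|P|2.5",
        "PID|1||123",
        "OBR|1||A",
        "OBX|1|NM|GLU||5.4",
        "OBR|2||B",
        "OBX|1|NM|NA||140",
    ])
    for part in split_oru(sample):
        print(part.replace("\r", "\n"), end="\n\n")
```

Keeping the split in one function like this mirrors the goal of the question: routing rules decide which messages need splitting, and a single shared step does the work.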

Hello Community,

When I run the following code in the terminal with x undefined, it throws a syntax error and returns control to the program stack. After I issue a GO command, execution continues and sets the global variable ^zz1.

code 1:

test.mac

if $Data(@x@(a,b,c)) {

set ^zz1=1212

}

write !,1212,!

//

//or

if $Data(@x@(a,b,c)) set ^zz1=1212

write !,1212,!

If I assign the result of $D(@x@(a,b,c)) to a local variable such as d, using set d=$D(@x@(a,b,c)), and then use if d { ... }, the code fails (the global is not set), which is what I expect.

Code 2

Hey Community,

We're excited to invite you to the next InterSystems UKI Tech Talk webinar:

👉Unlocking the Power of InterSystems Data Studio

⏱ Date & Time: Thursday, May 29, 2025 10:30-11:30 UK

👨‍🏫 Speaker: @Saurav Gupta, Data Platform Team Lead, InterSystems

Hi, Community!

Ready to go beyond built-in utility functions in your HealthShare® Health Connect Cloud™ integrations?

👉 Learn how to Add Custom Utility Functions in Health Connect Cloud!

I'm trying to deploy a python/flask application to an Iris4Health container (2025.1) but running into some issues with packages and where they should be installed.

The first one is the Flask packages themselves. I tried to install the Flask packages into the Python external language server virtual environment, but even then, when I configured the WSGI application in the web application section, it complained about not having a WSGI framework. Once I created a custom container and added the Flask package at the OS level, I was able to configure the web application without it complaining.
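When a package is installed in one environment but the application runs in another, it helps to ask the running interpreter directly what it can see. The following is a minimal diagnostic sketch using only the Python standard library. The helper name `describe_package` is hypothetical, and the sketch assumes you can run it with the same interpreter that serves the WSGI application.

```python
import importlib.util
import sys


def describe_package(name: str) -> dict:
    """Report whether `name` is importable by this interpreter, and from where."""
    spec = importlib.util.find_spec(name)  # None when the package is not visible
    return {
        "package": name,
        "found": spec is not None,
        "location": getattr(spec, "origin", None),
        "interpreter": sys.executable,
        "search_path": list(sys.path),
    }


if __name__ == "__main__":
    info = describe_package("flask")
    print(f"interpreter: {info['interpreter']}")
    print(f"flask found: {info['found']} ({info['location']})")
    for entry in info["search_path"]:
        print(f"  search path entry: {entry}")
```

If the package is reported as missing, comparing the printed search path with the directory where the package was installed usually shows which environment is actually in use.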

We’re issuing a point release for InterSystems IRIS, IRIS for Health, and Health Connect 2025.1 — version 2025.1.0.225.1 — to address a critical interoperability issue affecting users who leverage System Default Setting enabled business hosts.

What’s the issue?

In certain configurations where a Business Host is marked as System Default Setting enabled, applying new settings via the UI may incorrectly indicate that changes were applied, even though the necessary restart did not occur.

For over 15 years I have been playing with ways to speed up the way I use InterSystems systems and technology via AutoHotkey scripting. As a power keyboard user (I avoid my mouse when possible), I found it very helpful to set up hotkeys to get to my most frequently accessed systems and research utilities as quickly as possible. While I have used this approach for many years, this is the first time that I am introducing my approach and a customer-facing hotkey script to the D.C. and OEx.

WSDL for a CDC vendor was provided with a URL using a custom socket (non 443). Everything generated fine, but when making calls to their https:// URL that has their custom port in the URL - no response comes back. The assumption is, their server isn't even processing the request, as Postman does using the custom https://path.to.server:NNNN port in the URL.

I see with a normal web operation that uses %Net.HttpRequest overriding the port property is easily done - but no animal seems to exist for any generated SOAP Operation class.

We're excited to announce the first-ever UI update for CCR Client Tools. The Client Tools UI has been upgraded to match the modern CCR UI, aiming to create a more seamless experience across both applications. The color scheme, fonts, and style elements have all been updated accordingly in the new UI.

All functionality has been preserved through the update, and the new changes are fully compatible with all major browsers, VSCode, and the legacy Studio IDE.

Hey Community!

We're happy to share the next video in the "Code to Care" series on our InterSystems Developers YouTube:

Foreword

InterSystems IRIS versions 2022.2 and newer feature the ability to authenticate to a REST API using JSON web tokens (JWTs). This feature enhances security by limiting where and how often passwords transfer over the network in addition to setting an expiration time on access.

The goal of this article is to serve as a tutorial on how to implement a mock REST API using InterSystems IRIS and lock access to it behind JWTs.

NOTE I am NOT a developer. I make no claims as to the efficiency, scalability, or quality of the code samples I use in this article.
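To show what a JWT carries and why an expiration time limits access, here is a minimal standard-library sketch that creates and verifies an HS256-signed token. It is a generic illustration, not the IRIS implementation. The function names, the shared secret, and the choice of HS256 are assumptions made for this example only.

```python
import base64
import hashlib
import hmac
import json
import time


def b64url(data: bytes) -> str:
    """Base64url-encode without padding, as JWTs do."""
    return base64.urlsafe_b64encode(data).rstrip(b"=").decode("ascii")


def b64url_decode(text: str) -> bytes:
    """Decode base64url text, restoring the stripped padding first."""
    return base64.urlsafe_b64decode(text + "=" * (-len(text) % 4))


def make_token(claims: dict, secret: bytes, ttl_seconds: int = 60, now=None) -> str:
    """Build header.payload.signature with issued-at and expiry claims."""
    now = int(time.time()) if now is None else now
    header = {"alg": "HS256", "typ": "JWT"}
    payload = dict(claims, iat=now, exp=now + ttl_seconds)
    signing_input = (
        b64url(json.dumps(header).encode()) + "." + b64url(json.dumps(payload).encode())
    )
    signature = hmac.new(secret, signing_input.encode("ascii"), hashlib.sha256).digest()
    return signing_input + "." + b64url(signature)


def verify_token(token: str, secret: bytes, now=None) -> dict:
    """Return the payload if the signature is valid and the token has not expired."""
    now = int(time.time()) if now is None else now
    try:
        header_b64, payload_b64, signature_b64 = token.split(".")
    except ValueError:
        raise ValueError("malformed token") from None
    if json.loads(b64url_decode(header_b64)).get("alg") != "HS256":
        raise ValueError("unexpected algorithm")
    expected = hmac.new(
        secret, f"{header_b64}.{payload_b64}".encode("ascii"), hashlib.sha256
    ).digest()
    if not hmac.compare_digest(expected, b64url_decode(signature_b64)):
        raise ValueError("bad signature")
    payload = json.loads(b64url_decode(payload_b64))
    if now >= payload["exp"]:
        raise ValueError("token expired")
    return payload


if __name__ == "__main__":
    token = make_token({"sub": "alice"}, b"demo-secret", ttl_seconds=60)
    print(token)
    print(verify_token(token, b"demo-secret"))
```

The client sends its password once to obtain a token and then presents only the token, which stops being accepted once the `exp` time has passed.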

I'm creating a Business Process that:

- Transforms one object into another using DTL

- Sends this object to an operation

- Transforms the object obtained in the first point into another one using DTL

- Sends the second object to an operation

The Business Process is created with BPL, and objects are stored in BP context. When I execute this Process, in the 3rd point the object obtained in first transformation doesn't exist. It's empty.

I have a local testing environment using VSCode.

I'm trying to create a routine %Test.mac, but I keep getting a permission error.

I have enabled write permissions to percent globals in the Admin Portal.

I'm connecting to IRIS as superuser.

How do I give myself permissions?

Thanks

I have a particular application where I want a user to be able to write to a DB without having read permission. Is this possible?

Just like a knockout punch, without giving the opponent a chance, Kubernetes, as an open source platform, has a universe of opportunities due to its availability (i.e., the ease of finding support, services and tools). It is a platform that can manage jobs and services in containers, which greatly simplifies the configuration and automation of these processes.

But let's justify the title image and give the tool in question the “correct” name: InterSystems Kubernetes Operator.

Hi Guys,

I'm a newbie running IRIS in a container (IRIS for UNIX (Ubuntu Server LTS for x86-64 Containers) 2024.3 (Build 217U) Thu Nov 14 2024 17:30:43 EST) and trying to set up Python so I can start working on ML and Autosklearn. My understanding is that IRIS already comes with embedded Python, but I'm unable to do something like "import pandas as pd" in VSCode, which suggests I need to install a more complete version of Python or additional packages. What am I missing?

Hi, I am unsure how to remove this restriction; when I am performing dynamic SQL using ##class(%SQL.Statement).%ExecDirectNoPriv(, .query, args...)

It works fine, but the moment I add specific properties from the persistent class I am performing the select on into the WHERE clause, I get: ERROR #5540: SQLCODE: -99 Message: User UnknownUser is not privileged for the operation.

I'm sure most of you are familiar with the %SYS.MONLBL utility, which is crucial when analysing code performance bottlenecks. It allows you to select a number of routines that you want to monitor at runtime and also specify which process(es) you want to watch. But what if you do not know exactly which process will execute your code? This is true of many web-based (CSP/REST) applications today. You also want to minimize resource utilization on the production system that needs analysis. So, how about doing a small tweak?

1.

Hi all,

I'm excited to announce a GitHub Action that deploys code from a GitHub repository directly to an IRIS instance.

Please visit https://openexchange.intersystems.com/package/Github-Action-IRIS-Deployer

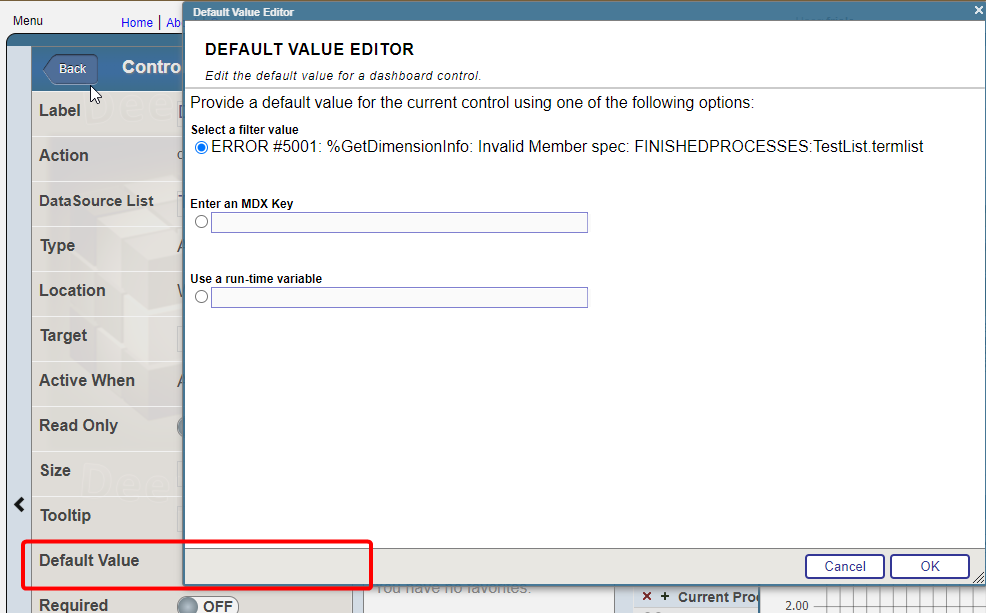

I have a widget that uses the "choose Data source" control option. The term list for the control consists of two data sources, and I want to set one of them as the default. For example: I have two data sources, one grouped by month and the other by year. I need the one grouped by year to be selected by default.

Using the default value setting at the bottom returns an error.

How can I achieve that?

Hello. Currently, we are developing using Cache 2018 version.

Our team is working on improving an existing legacy program so that it can also be used on the web.

Before asking my question, here is the development environment.

- IDE: IntelliJ

- Framework: Spring Boot, MyBatis

- DB Connection: JDBC (using the library provided by InterSystems)

Currently, we are successfully mapping global data through the %PERSISTENT class and are able to query it with SQL. However, the problem is that the retrieved Korean data is all garbled.
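One common cause of this symptom is that bytes stored in one character encoding are decoded with a different one somewhere between the database and the application. The sketch below reproduces that effect in Python; it is only an illustration of the mechanism. The encodings used (EUC-KR for the stored bytes, Latin-1 for the wrong decoder) and the helper names are assumptions, and the encoding actually in use in this system is not known from the post.

```python
def garble(text: str, stored_encoding: str, wrong_encoding: str) -> str:
    """Encode text as it might be stored, then decode it with the wrong encoding."""
    raw = text.encode(stored_encoding)
    return raw.decode(wrong_encoding)


def repair(garbled: str, stored_encoding: str, wrong_encoding: str) -> str:
    """Undo the wrong decode by recovering the bytes and decoding them correctly."""
    raw = garbled.encode(wrong_encoding)
    return raw.decode(stored_encoding)


if __name__ == "__main__":
    original = "한글"
    broken = garble(original, "euc-kr", "latin-1")    # assumed encodings
    fixed = repair(broken, "euc-kr", "latin-1")
    print("original:", original)
    print("broken:  ", broken)
    print("fixed:   ", fixed)
```

If the broken text in the application can be repaired this way with some pair of encodings, that pair points to where the mismatch is, for example in the database locale or in the connection settings.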

Hello community,

The Certification Team of InterSystems Learning Services is excited to announce the release of our new InterSystems IRIS Development Professional exam. It is now available for purchase and scheduling in the InterSystems exam catalog. Potential candidates can review the exam topics and the practice questions to help orient them to exam question approaches and content. Candidates who successfully pass the exam will receive a digital certification badge that can be shared on social media accounts like LinkedIn. If you are new to InterSystems Certification, please review our program pages that include information on taking exams, exam policies, FAQ and more.

Over the past couple of months, I have been working on the SMART on FHIR EHR Launch to test the capabilities of IRIS for Health using two open-source apps from CSIRO: SMART-EHR-Launcher and SMART Forms App. This journey has been incredibly interesting, and I’m truly grateful for the opportunity to work on this task and explore more of IRIS for Health’s potential.

After successfully demonstrating the seamless launch of multiple external SMART apps at the HL7 AU FHIR Connectathon, I’m excited to share what I’ve learned with the community.

Hey Developers,

Watch this video to learn how Personal Community can be leveraged to enhance your digital front door strategy:

⏯ HealthShare Personal Community and Your Digital Front Door @ Global Summit 2024

As part of a process to generate FHIR XML bundles from HL7 messages, I have a subtransform transforming segments of an MDM_T02 message into a section of XML (EnsLib.EDI.XML.Document). So far this has worked well although I've encountered strange behaviour when making use of an xmlns attribute, whereby the attribute's name is duplicated despite the schema and DTL editor displaying it correctly.

Here's a snippet of the div.xmlns definition from the XSD schema used

Has anyone else encountered this? Any help is much appreciated
