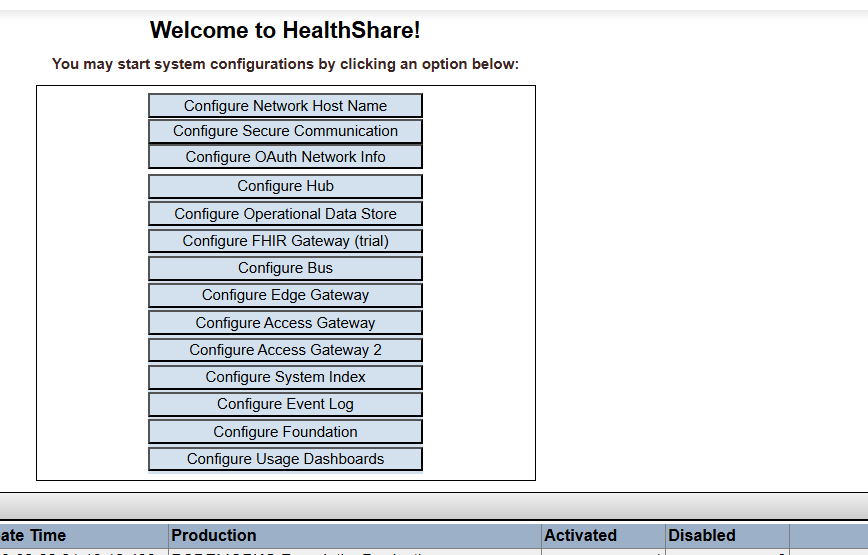

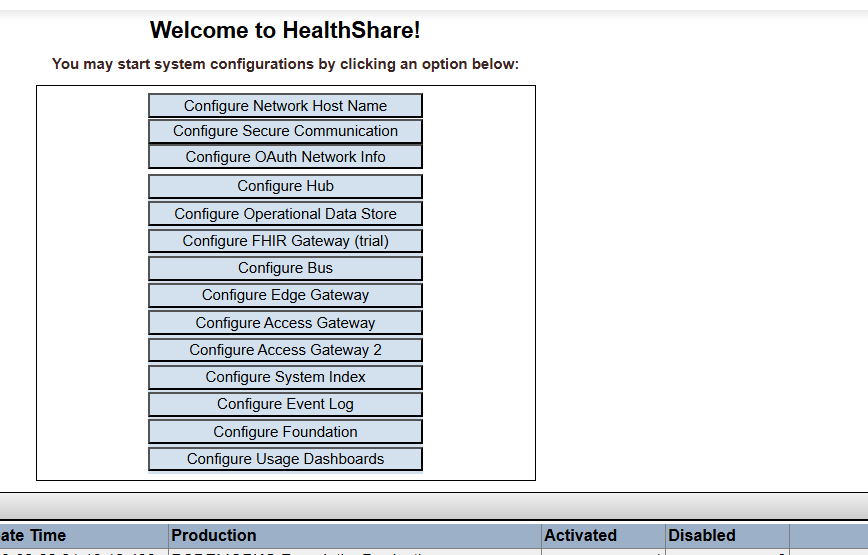

I have installed personal community but still can't see the "configure personal community" option here. This is on Linux.

I am available to engage on projects as an IRIS Healthcare Consultant, drawing on over 20 years of experience in IT architecture with a special focus on InterSystems IRIS and HealthShare solutions.

My expertise includes leading critical NHS projects in the UK as well as building a SaaS solution designed to enhance revenue cycle management and facilitate integration between hospitals and doctors in the US.

What is wrong with this snippet?

It's an IRIS lab, so there is nothing in it so personal that someone else can't use it.

    {
        "workbench.colorTheme": "InterSystems Default Dark Modern",
        "intersystems.servers": {
            "irislab": {
                "webServer": {
                    "scheme": "https",
                    "host": "35.188.112.210",
                    "port": 29363
                },
                "username": "tech",
                "password": "demo"
            }
        },
        "objectscript.conn": {
            "server": "irislab",
            "ns": "USER", // Add your namespace here (e.g., USER)
            "active": true
        }
    }

Hi,

docs.intersystems.com has been down for almost a week now.

Is there any update on when it will be back up?

Hello fellow community members,

I would like to offer my services as an InterSystems professional and am available to work on projects.

I have more than a decade of experience with the InterSystems stack of technologies, including IRIS, Ensemble, HealthShare, Health Connect, Caché ObjectScript, MUMPS, Zen, Analytics, etc., working with companies across the US and UK in multiple domains.

Hello,

I am curious whether anyone has a working example of IRIS/Ensemble consuming a CDS file, along with one or two sample CDS files for reference.

Thanks in advance.

There are a couple of options to do this.

1. You can have your class extend %JSON.Adaptor; it will do it for you with its built-in LogicalToDisplay methods.

2. Create another request class and do your transformations there.

3. Before calling the JSON export-to-stream methods, transform the object's field with $ZDT.
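To make option 3 concrete, here is a minimal sketch in Python rather than ObjectScript. It assumes the field holds an internal `$HOROLOG`-style "days,seconds" value and that the display format wanted is the ODBC-style `YYYY-MM-DD HH:MM:SS`. The helper names `horolog_to_display` and `to_json` and the sample record are made up for illustration only.

```python
import json
from datetime import datetime, timedelta

# Day 0 of the $HOROLOG calendar is 31 December 1840.
HOROLOG_EPOCH = datetime(1840, 12, 31)


def horolog_to_display(horolog: str) -> str:
    """Convert an internal "days,seconds" value to "YYYY-MM-DD HH:MM:SS"."""
    days, _, seconds = horolog.partition(",")
    moment = HOROLOG_EPOCH + timedelta(days=int(days), seconds=int(seconds or 0))
    return moment.strftime("%Y-%m-%d %H:%M:%S")


def to_json(record: dict, date_fields: set) -> str:
    """Serialize a record, rewriting the named date fields to display format first."""
    converted = {
        key: horolog_to_display(value) if key in date_fields else value
        for key, value in record.items()
    }
    return json.dumps(converted)


# Hypothetical record: "CreatedOn" holds an internal date, "Name" is left untouched.
print(to_json({"Name": "Test", "CreatedOn": "58074,0"}, {"CreatedOn"}))
```

The point is only the ordering: convert the field first, then serialize, so the JSON never carries the internal value.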

---------------------------------------------------------------------------------------------------------------------------------------

I have an extremely simple example where there is a class with 3 properties

Class NV.Operations.FileOutbound.Data.

IRIS is an excellent option for machine learning projects that involve massive data operations, for the following reasons:

1. It supports the use of shards to scale the data repository, just like MongoDB.

2. It supports the creation of analytical cubes, which, combined with sharding, gives you volume together with performance.

3. It supports collecting data on a scheduled basis or in real time, with a variety of data adapter options.

4. It allows you to automate the entire deduplication process using logic in Python or ObjectScript.

5.
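As a rough illustration of point 4, deduplication logic in Python can be as small as the sketch below. It is not tied to any IRIS API. The field names and the normalization rule (trim whitespace, ignore case) are assumptions chosen for the example.

```python
def normalize(record: dict, key_fields: tuple) -> tuple:
    """Build a comparison key: trim whitespace and ignore case in the key fields."""
    return tuple(str(record.get(field, "")).strip().lower() for field in key_fields)


def deduplicate(records: list, key_fields: tuple) -> list:
    """Keep the first record seen for each normalized key, preserving input order."""
    seen = set()
    unique = []
    for record in records:
        key = normalize(record, key_fields)
        if key not in seen:
            seen.add(key)
            unique.append(record)
    return unique


# Hypothetical sample data: the second row differs only in case and spacing.
people = [
    {"FirstName": "Ann", "LastName": "Lee", "City": "Boston"},
    {"FirstName": " ann ", "LastName": "LEE", "City": "Boston"},
    {"FirstName": "Bob", "LastName": "Ray", "City": "Austin"},
]
unique_people = deduplicate(people, ("FirstName", "LastName"))
print(len(unique_people))
```

Real deduplication usually needs fuzzier matching than this, but the structure (normalize, then keep the first of each key) stays the same.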

Hello All,

I have been using IRIS / Ensemble for over a decade and appreciate a lot of its functionality and features. However, besides having a UI from the '80s (which doesn't bother me), the area where I believe IRIS falls short is the lack of out-of-the-box connectors (services/operations).

If we look at IRIS's competitors, e.g. MuleSoft, Talend and Boomi, they all have hundreds of pre-built connectors for major applications like Salesforce and SAP, and for cloud services like Azure and AWS, to store and retrieve information and data.

Just to give an idea, here is a link to the Dell Boomi connectors:

https://help.boomi.com/bundle/connectors/page/c-atm-Connectors.html

Hi All,

We have been using DeepSee, the integrated analytics dashboard built over Caché cubes. It works fine, but its visual capabilities are limited and it is most probably being phased out.

If I am not wrong, Tableau is the suggested alternative to DeepSee.

Hi All,

We have a few queries that are simple selects. For simplicity, let's say there is a query that joins two tables and returns a few columns, and neither table has any indexes.

    SELECT Tab1.Field1, Tab2.Field2
    FROM Table1 Tab1
    JOIN Table2 Tab2
      ON Tab2.FK = Tab1.PK

When we run the query plan for this, it shows approximately 6 million. However, if we make a simple adjustment to the query:

    SELECT Tab1.Field1, Tab2.Field2
    FROM Table1 Tab1
    JOIN Table2 Tab2
      ON Tab2.FK = Tab1.PK
    WHERE Tab1.Id > 0

(the added condition will always be true), the query plan figure comes down to a few thousand, an improvement of roughly 99%.

Hi,

I am planning to build a web app that will have tons of data to display in tables, the ability to add comments on the table rows, and maybe a few more small features as we move on.

1. It has to be secure

2. It has to be fast

3. It has to be non-CSP / non-Zen

4. It will have a Caché DB to pull records from, but should be flexible enough to do that from any ODBC source

5.

Hello All,

We need to develop a small CSP application that shows data in a simple paginated, searchable table for business users.

It is to be built on an old version of Caché and is not a big, full-fledged application but something temporary. We can't use Zen, so we are using a combination of CSP and Bootstrap, as Bootstrap makes the pages look beautiful with little effort.

I have built the table in Bootstrap, and it works fine, with pagination and search working perfectly.

The problem occurs only when we go above 500 records.

Hi,

I have written a query over class definitions and all their properties and put it in a view.

All is good except that Parameters shows junk characters. Is there a way to display it cleanly without getting into code?

    SELECT
        CC.ID AS CompiledClass,
        CC.SqlSchemaName,
        CC.SqlTableName,
        CP.Name AS PropertyName,
        CP.SqlFieldName,
        CP.Type,
        PD.Parameters
    FROM %Dictionary.CompiledProperty CP
    JOIN %Dictionary.CompiledClass CC
      ON CP.Parent = CC.ID
    JOIN %Dictionary.PropertyDefinition PD
      ON PD.ID = CP.ID1

Hi,

Does anyone have a simple code snippet to encode raw text and upload it to Azure Blob storage?

Hi,

We can easily do a <call> from graphical BPLs.

I was wondering what the best way is to call another Business Process or Operation via code.

Thanks in advance

ObjectScript offers at least three different ways to handle errors (status codes, exceptions, SQLCODE, etc.). Most systems use status codes, but for a range of reasons exceptions are more convenient to manage. When dealing with legacy code, you spend some time translating between the various techniques. I keep several of these snippets for reference, and I hope they will help others too.

///Status from SQLCODE:

set st

This utility creates an xlsx file with the file name provided.

There are multiple ways to write data to it; in this example I am retrieving the data via SQL queries.

    Include %occFile

    Class Utils.XLSX
    {

    ClassMethod DateTime() As %String [ CodeMode = expression ]
    {
    $TR($ZDateTime($H,3),"- :")
    }

    ClassMethod GenerateXLSX(
        IsActive As %Boolean,
        Output FileName As %String) As %Status
    {
        Set stream = ##class(%Stream.FileCharacter).%New()
        Set tmpFile = ##class(%File).TempFilename("xls")
        If (IsActive) {
            Set lutFileName = "Test"_..DateTime()
            Set tmpFile = ##class(%File).GetDirectory(tmpFile)_lutFileName_

In our table we have a City column whose filter should display the cities of a state, depending on the state.

For simplicity, let's assume the page has this property:

    Property State As %String = "CA"

Our filter should run this query to show all cities of state CA:

    "SELECT Name FROM City WHERE State= "_%page.State

    <column colName="City"
            filterQuery="SELECT Name FROM City WHERE State= <This is where I am stuck>"
            filterType="query"
            header="City"
            width="10%">
    </column>

I am not able to pass any parameter to filterQuery.

Hello All,

There are a few tools available for SQL optimization, and even the query builder has Show Plan to give us an estimate of the resources needed to execute a query.

Is there anything similar for methods?

I would like to see a similar approach for the time a method takes to execute.

Is the Studio debugger the only option?

Let's say we have a class

AbcRequest extends Ens.Request, PropertiesBaseAbstractClass (which has all the properties common to all requests)

Prop1

Prop2 and so on

Now, in my Business Operation, I want to build JSON dynamically from this request.

Obviously, what I can do is:

    Set requestDefinition = ##class(%Library.CompiledClass).%OpenId("MyCreateRequest")
    Set JsonObject = ##class(%DynamicObject).%New()
    for i=1:1:requestDefinition.Properties.Count() {
        Set key = requestDefinition.Properties.GetAt(i).Name
        Set value = $property(pRequest,requestDefinition.
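For comparison, the same idea (walk the declared properties, read each value by name, add it to a JSON object) looks like this in Python. This is only an analogy sketched under assumptions: the dataclasses `PropertiesBase` and `AbcRequest` and the extra `Prop3` are hypothetical stand-ins for the request class described above, not anything from the InterSystems class library.

```python
import json
from dataclasses import dataclass, fields


@dataclass
class PropertiesBase:
    """Hypothetical stand-in for the shared base class holding common properties."""
    Prop1: str = ""
    Prop2: str = ""


@dataclass
class AbcRequest(PropertiesBase):
    """Hypothetical request that adds one property of its own."""
    Prop3: int = 0


def request_to_json(request) -> str:
    """Walk the declared fields (inherited ones included) and emit a JSON object."""
    payload = {f.name: getattr(request, f.name) for f in fields(request)}
    return json.dumps(payload)


print(request_to_json(AbcRequest(Prop1="a", Prop2="b", Prop3=3)))
```

The loop over the class definition in the ObjectScript above plays the role of `fields()`, and `$property` plays the role of `getattr`.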

Hello All,

I have been working with InterSystems technologies for over a decade, on Caché, Zen, Ensemble, etc.

This is a very niche field and a lovely community. I would like to reach out and connect with people who work in the same field or a related one.

Here is my LinkedIn profile. Please feel free to send me an invite or drop me a message.

Hi,

I am curious about the pros and cons of Parent/Child vs. One/Many relationships.

We use a bit of both.

One big reason we use Parent/Child is that all parent and child data is stored in one global, so deleting that one global gets rid of all the child data too. That makes it much easier to manage.

1. Define Persistent Class

Call the utility class to fetch JSON via a query.

    Class Test.JSONFromSQL Extends (%Persistent, %Populate)
    {

    Property FirstName As %String(POPSPEC = "FirstName()");

    Property LastName As %String(POPSPEC = "LastName()");

    Property CountOfThings As %Integer(POPSPEC = "Integer()");

    ClassMethod OutputJSON() As %Status
    {
        // Populate sample rows on first use
        If '..%ExistsId(1) Do ..Populate(100)
        // The table name must match this class's SQL projection
        Set sql="select FirstName, LastName, CountOfThings from Test.JSONFromSQL"
        Quit ##class(JSON.FromSQL).OutputJsonFromSQL(sql)
    }

    }

2. Utility Class

    Class JSON.FromSQL Extends JSON.Base
    {

    ClassMethod OutputJsonFromSQL(
        sql,
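Since the utility class is cut off above, here is a small sketch of the general technique (run a query, use the column names as keys, emit one JSON object per row). It is written in Python against an in-memory SQLite table purely so that it is self-contained. The function `json_from_sql` and the sample row are assumptions for illustration and are not the author's `OutputJsonFromSQL` implementation.

```python
import json
import sqlite3


def json_from_sql(connection, sql: str) -> str:
    """Run a query and return its rows as a JSON array of objects keyed by column name."""
    cursor = connection.execute(sql)
    columns = [description[0] for description in cursor.description]
    rows = [dict(zip(columns, row)) for row in cursor]
    return json.dumps(rows)


# Hypothetical table mirroring the three properties of the persistent class.
connection = sqlite3.connect(":memory:")
connection.execute(
    "CREATE TABLE JSONFromSQL (FirstName TEXT, LastName TEXT, CountOfThings INTEGER)"
)
connection.execute("INSERT INTO JSONFromSQL VALUES ('Ada', 'Lovelace', 3)")

result = json_from_sql(
    connection, "SELECT FirstName, LastName, CountOfThings FROM JSONFromSQL"
)
print(result)
```

Because the keys come from the result set metadata, the same function works for any query without knowing the columns in advance.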

Hi,

We have a pivot ready with data for all accounts, and we now want to filter it by account:

[Account].[H1].Account.&[1]

It works fine at the pivot level and filters the records. We save the pivot with no filter values, because we want users to choose the account they want to filter on.

If I save this pivot without a filter value, it obviously contains all records, and I want to filter it at the dashboard level on my page.

The URL I am passing:

..... .dashboard&SETTINGS=FILTER:[Account].[H1].[Account].&[1]&NOTITLE=1
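One thing that may be worth checking here, offered as an assumption rather than a confirmed diagnosis: the `&` inside the member key is also the character that separates URL parameters, so an unencoded filter value can get cut off at `.&[1]`. A minimal Python sketch of percent-encoding the filter spec before putting it in the URL follows. The helper name `dashboard_filter_param` is made up for the example.

```python
from urllib.parse import quote


def dashboard_filter_param(member_spec: str) -> str:
    """Percent-encode a filter member spec so '&' and brackets survive inside a URL."""
    return "SETTINGS=FILTER:" + quote(member_spec, safe="")


param = dashboard_filter_param("[Account].[H1].[Account].&[1]")
print(param)
```

After encoding, the `&` in the member key appears as `%26`, so only the real parameter separators remain as literal `&` characters in the URL.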

I am curious whether anyone has used this service to send alert emails through AWS / Ensemble.

I have verified two email addresses: one to send and one to receive.

I have also configured an access key for the email SMTP server and added the credentials to Ensemble,

but I get this error:

ERROR #6033: Error response to SMTP MAIL FROM: 530 Authentication required.

email-smtp.us-east-1.amazonaws.com

25

So my first guess is that authentication is not happening via the Credentials setting and needs to be passed in a different way.

If anyone has done this before, do let me know.

It's OK, I figured it out.

Can we plug a different UI into DeepSee, or does anyone have a suggestion for a more appealing UI that can be plugged in easily?

I have a class that extends %Persistent and %XML.Adaptor.

It has, for example, 100 properties.

I now want to create an XML schema from it that I can import in Ensemble->XMLSchemas.

I tried XMLExportToString and %XML.Writer's GetXMLString,

but they didn't give me a proper schema. Maybe I am missing some small step.

Can someone please help?

Hello,

Fellow community members, here is an extendable, reusable, generic pub-sub model.

An initial calling service reads a CSV file, parses all of its records and transforms each one into a generic JSON message.

The message is then transported via a pub-sub business process to the endpoint targets (business operations) configured for the subscribers of each topic, as demonstrated in the image below.

The steps and flow to implement this are as follows:

1. Service

a. Read a CSV file and loop through all records

b.
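Outside of an Ensemble production, the flow described above (CSV records turned into generic JSON messages, then fanned out to every target subscribed to a topic) can be sketched in a few lines of Python. Everything in this sketch is an assumption made for illustration: the `PubSubRouter` class, the `orders` topic and the list standing in for a business operation are not part of the original model.

```python
import csv
import io
import json
from collections import defaultdict


class PubSubRouter:
    """Hypothetical stand-in for the pub-sub business process."""

    def __init__(self):
        self._subscribers = defaultdict(list)

    def subscribe(self, topic, target):
        """Register a callable target (a stand-in for a business operation) on a topic."""
        self._subscribers[topic].append(target)

    def publish(self, topic, message):
        """Deliver the message to every target of the topic; return the delivery count."""
        targets = self._subscribers.get(topic, [])
        for target in targets:
            target(message)
        return len(targets)


def csv_to_messages(text):
    """Turn each CSV record into a generic JSON message keyed by the header row."""
    return [json.dumps(row) for row in csv.DictReader(io.StringIO(text))]


# Hypothetical wiring: one topic, one subscriber that just collects messages.
received = []
router = PubSubRouter()
router.subscribe("orders", received.append)
for message in csv_to_messages("id,item\n1,apple\n2,pear\n"):
    router.publish("orders", message)
print(received)
```

Adding a new endpoint is then a matter of one more `subscribe` call, which is the property that makes the model reusable.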