Advent of Code 2021: Code in ObjectScript to win!

Hey Developers,

Ready to participate in our annual December competition?

Join the Advent of Code 2021 with InterSystems and participate in our ObjectScript contest to win some $$$ prizes!

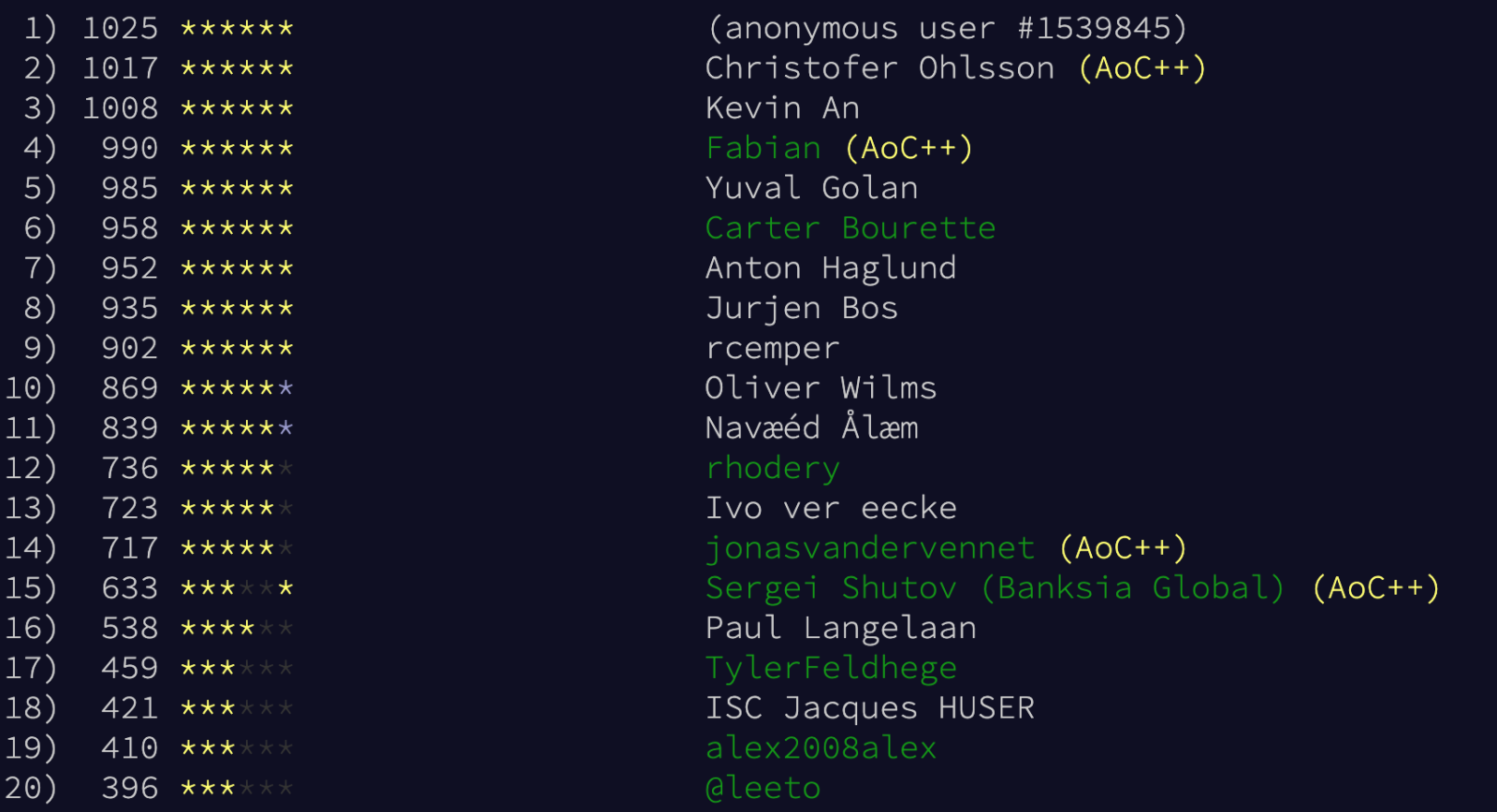

🏆 Our Leaderboard 🏆

Here you can find our last year's participants.

👉🏼 Join the ObjectScript private leaderboard with the code 130669-ab1f69bf.

Note: You need to sign in to Advent of Code (e.g. with a GitHub, Google, Twitter, or Reddit account) to see the leaderboard and participate in the contest.

Prizes:

🥇 1st place - $3,000

🥈 2nd place - $2,000

🥉 3rd place - $1,000

All winners will also get a special high-level Global Masters badge.

Note: InterSystems employees are not eligible for money prizes.

Win Conditions:

1. To win a prize, you must be at the top of the ObjectScript leaderboard, upload all your solutions to a public GitHub repository, and present the code in InterSystems ObjectScript in UDL form, as in the template below:

⬇️ The Advent of Code contest ObjectScript template

2. There should be no errors in the ObjectScript quality scanner for your project.

3. All participants have two days (December 26-27) to make their repositories public. Winners will be announced on December 28.

4. All participants must be registered on the InterSystems Developer Community.

So!

The first puzzles will unlock on December 1st at midnight EST (UTC-5).

See you then and good luck to all of you!

Comments

The Advent of Code 2021 contest starts on Wednesday!

Are you ready? 🤗

Could you elaborate a bit more on "And there should be no errors in the ObjectScript quality scanner for your project."? Do code smells count as errors? Or is it only bugs (e.g. the passed/failed verdict given by the quality scanner)?

Also, is there any way for me to check my code with the ObjectScript quality scanner beforehand? I downloaded SonarQube and tested a SonarQube analysis on my local machine, but the default SonarQube analysis seems to skip .cls (ObjectScript) files.

Hi @Rahim Mammadli !

Just include this file in your GitHub repo when you publish it after the Advent, and it will send your repo for testing automatically.

Or you can do it even in the beginning - some participants publish code even during the event.

I made this repo public, but it doesn't seem to have been scanned?

https://github.com/projecteulerlover/AdventOfCode

Resolved: it looks like submitting a dummy change kicked off the scan.

Yes. The GitHub Actions script reacts to a push to the repository because of this line.

In case this is helpful for any other AoC noobs: the '130669-ab1f69bf' code doesn't go in the sponsor code field that you are shown when you first create an account. You need to click on the 'Private leaderboard' link in red to the right of that field, which brings you to a new page where you can enter the above code.

Aaaaand it started! Good luck everyone!

Are there any other restrictions on the code we should be aware of?

I was going to play but it is difficult to get started.

Tried to get the template: downloaded Git and Docker Desktop, got a Git account, got IRIS working through Docker (was quite impressed with that), but cannot get the template. I'm getting at least 2 errors on the "docker-compose build" command:

Is it possible to play without the template? Surely it's just a class in UDL format?

The day 1 problem is easy to solve. I expect most of us could do it in one line of code. But what are the rules? How does the scoring work? Is it a race? Do you need to be in the first 5 people to finish it every day? Is it judged by someone? If so, what are you looking for? If you can't get your entry in on day 1 will you be too far behind?

Going to try it on a Mac instead of Windows, maybe that will help.

Judging: (from AoC)

Below is the Advent of Code 2021 overall leaderboard; these are the 100 users with the highest total score. Getting a star first is worth 100 points, second is 99, and so on down to 1 point at 100th place. So you collect stars.

Just an addendum: since we use the private leaderboard mentioned in the original post, replace "100" with "the number of people in the private leaderboard", and decrement this by one for each person who completed a particular part before you.
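A minimal Python sketch of the private-leaderboard rule described in the comment above, assuming each puzzle part is given as a list of member names in the order they earned the star. `local_scores`, `parts`, and `members` are hypothetical names for illustration, not an AoC API. With N members, the first solver of a part earns N points, the next N - 1, and so on.

```python
def local_scores(parts, members):
    """Sum private-leaderboard points over all puzzle parts (sketch).

    parts:   one list per puzzle part (hypothetical input format), holding
             member names in the order they earned that star; members who
             never solved the part are simply absent.
    members: every member of the private leaderboard.
    """
    n = len(members)
    totals = {m: 0 for m in members}
    for finish_order in parts:
        # 1st solver earns n points, 2nd earns n - 1, and so on.
        for position, name in enumerate(finish_order):
            totals[name] += n - position
    return totals


members = ["alice", "bob", "carol", "dave"]
parts = [
    ["alice", "bob", "carol"],  # day 1, part 1
    ["bob", "alice"],           # day 1, part 2
]
print(local_scores(parts, members))
# {'alice': 7, 'bob': 7, 'carol': 2, 'dave': 0}
```

Note that "dave" scores nothing but still counts toward N, which is why a large leaderboard with many inactive members inflates everyone's points.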

So it is a race. And being awake at the right time, wherever you are in the world, is a big advantage given a fixed start time of midnight UTC-5.

Based on past years (participating as I have time between my day job and family obligations... so typically dropping off around the 15th), the ObjectScript leaderboard tends to be pretty competitive. Especially in terms of when the challenges drop - I'm not staying up until midnight or whatever it is to be in the top few.

Generally, Docker is better on a Mac than Windows, from what I hear.

Thanks for the quick reply. Yep, worked first time on a Mac.

Still not sure of the rules, and given your experience, I'm not sure it will be enjoyable. Can't believe this is the template:


At least I've gained some knowledge on some of what can be done with Docker.

This is just a placeholder to demonstrate the structure.

GitHub doesn't allow empty directories.

Thanks Robert. I guess that by now all the points worth gaining are gone so it won't hurt to post this. Is this the sort of answer that is being looked for?

(Rename classname to Day1?, Entry point should be ClassMethod Test()?)


I think as long as this code doesn't have errors (when you look for your repository in https://community.objectscriptquality.com/projects?sort=-analysis_date), and gets the correct answer on the AoC website, it should be accepted.

Clarifying: I really enjoy the coding challenges and would highly recommend them, but competing for a top spot is a level of intensity beyond what I'd do. (Even if I weren't an InterSystems employee and were eligible for prizes other than clout.)

Just installed Docker on my machine for the first time (had just used it for GitHub Actions/Travis CI before). I feel a little bit like an old dog trying to learn new tricks... AOC 2021 is a good excuse for it!

Hey Developers!

Let's see the top 20 🔥:


Happy coding!

Our Top 20 🔥

We broke it guys :)

Hey hey!

Let's see our top 20 🔥

Watching the daily results of our private leaderboard, I made some observations:

- the number of registered participants has grown since the start from 68 to 93 / 82 (the figure changed between updates), no surprise with the published code; actually, only 33 participants have collected points and stars
- I downloaded the current ranking as a JSON file into a local table
- I could identify only 22 names (17 in an earlier update) from DC, which I flagged as DC in our ranking. If I missed you, please send me your AoC identity by DC mail so I can add your DC flag.

Date: 2021-12-27 08:53:47 UTC

| Rank | DC | Stars | Score | Name | Note |
|---:|:-:|---:|---:|---|---|
| 1 | 1 | 50 | 4087 | Kevin An | |
| 2 | 1 | 50 | 4026 | Yuval Golan | |
| 3 | 1 | 50 | 4056 | rcemper | disqualified by using Embedded Python |
| 4 | 1 | 50 | 3798 | ISC Jacques HUSER | last of the "all completed" group |
| 5 | 1 | 41 | 2901 | Bert Sarens | |
| 6 | 1 | 36 | 2825 | Oliver Wilms | |
| 7 | 1 | 36 | 2689 | dspools | |
| 8 | 1 | 36 | 2683 | Ivo ver eecke | |
| 9 | 1 | 28 | 2060 | Tim Leavitt | |
| 10 | 1 | 22 | 1775 | Fabian | |
| 11 | 1 | 17 | 1273 | rhodery | |
| 12 | 1 | 18 | 1265 | Shamus Clifford | |
| 13 | 1 | 18 | 1230 | CrashAvery | |
| 14 | 1 | 16 | 1149 | TylerFeldhege | |
| 15 | 1 | 15 | 1147 | Sergei Shutov (Banksia Global) | |
| 16 | 1 | 11 | 764 | José Roberto Pereira Junior | |
| 17 | 1 | 9 | 599 | jackpto | |
| 18 | 1 | 6 | 386 | NjekTt | |
| 19 | 1 | 5 | 307 | Stuart Byrne | |
| 20 | 1 | 4 | 267 | goran7t | |
| 21 | 1 | 2 | 148 | David Hockenbroch | |
| 22 | 1 | 2 | 116 | Evgeny Shvarov | last of the "DC members" group |
| 23 | | 50 | 4145 | Steven Reiz | |
| 24 | | 32 | 2584 | Navæéd Ålæm | |
| 25 | | 28 | 2271 | Carter Bourette | |
| 26 | | 11 | 804 | jonasvandervennet | |
| 27 | | 11 | 768 | @leeto | |
| 28 | | 8 | 548 | Paul Langelaan | |
| 29 | | 6 | 416 | alex2008alex | |
| 30 | | 1 | 57 | maxisimo | |

Instead of posting a daily reply, I plan to update this reply once a day.

2021-12-18 22:01:42 UTC - DC Leaderboard updated

I wrote a script to calculate what the scoreboard looks like if we only account for people using COS (using @Robert Cemper's list). That is, I used the provided JSON data to form my own sorted lists of solve times for part 1 and part 2 of each question, excluding users not present in Robert's list. Scores are then recalculated based on each user's position in the sorted lists and summed up (which should match how AoC calculates them).

Interesting. How current is your JSON input?

I download the same JSON file with the "local_score" from AoC

I update my table daily and sort it by (DC desc, score desc) every night (see timestamps).

But your scores do not match AoC; compared with last night's input, they deviate in both directions (e.g. yours by roughly -1000 points?).

Ah, I am using the same JSON inputs as you (freshly downloaded a few seconds before the script was run). The problem with only sorting the scores from the JSON file is that people who didn't solve in COS shouldn't really affect the "actual standings" for the contest. That's why the scores are so different. For example, suppose the leaderboard only consists of the three users "cos_coder_1", "cos_coder_2", and "non_cos_coder". My script effectively removes the effect that "non_cos_coder" has on the leaderboard (e.g. on days where they got first, the other two users effectively get first/second).

The overall scores are lower because this means we consider fewer participants as "active".
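The filtering approach described above can be sketched in Python as follows. This is not the script from the thread; it assumes the private-leaderboard JSON layout (`members` -> `completion_day_level` -> day -> part -> `get_star_ts`), and `cos_only_scores` plus the set of allowed names are hypothetical. Only the allowed members are re-ranked per part, and N shrinks to the number of allowed members, which is why the totals drop.

```python
import json


def cos_only_scores(leaderboard, allowed_names):
    """Recompute local scores counting only members in allowed_names (sketch).

    leaderboard:   parsed private-leaderboard JSON (assumed layout:
                   members -> {id: {"name", "completion_day_level":
                   {day: {part: {"get_star_ts": ...}}}}}).
    allowed_names: hypothetical set of names treated as ObjectScript solvers.
    """
    # Keep only members whose AoC name is on the ObjectScript list.
    members = [m for m in leaderboard["members"].values()
               if m.get("name") in allowed_names]
    n = len(members)  # only the filtered members count as "active"

    # Gather (timestamp, name) pairs for every (day, part) star.
    solves = {}
    for m in members:
        for day, parts in m.get("completion_day_level", {}).items():
            for part, info in parts.items():
                solves.setdefault((day, part), []).append(
                    (int(info["get_star_ts"]), m["name"]))

    # Re-rank each part among the filtered members only.
    scores = {m["name"]: 0 for m in members}
    for times in solves.values():
        for position, (_, name) in enumerate(sorted(times)):
            scores[name] += n - position
    return scores


sample = json.loads("""{"members": {
  "1": {"name": "cos_coder_1", "completion_day_level":
        {"1": {"1": {"get_star_ts": 100}, "2": {"get_star_ts": 300}}}},
  "2": {"name": "cos_coder_2", "completion_day_level":
        {"1": {"1": {"get_star_ts": 150}, "2": {"get_star_ts": 400}}}},
  "3": {"name": "non_cos_coder", "completion_day_level":
        {"1": {"1": {"get_star_ts": 50}, "2": {"get_star_ts": 60}}}}
}}""")

print(cos_only_scores(sample, {"cos_coder_1", "cos_coder_2"}))
# {'cos_coder_1': 4, 'cos_coder_2': 2}
```

Here "non_cos_coder" solved both parts first, but once filtered out, "cos_coder_1" is treated as first on both parts, matching the example in the comment.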

I see! And I feel it is right.

Therefore, about 2 weeks ago, I suggested using a different private leaderboard where you have to request the access code from the Developer Community Managers, instead of this publicly published access key. It could act as a kind of registration.

And with 23 active participants, it's not much extra effort, and there's no need for recalculation and filtering.

Perhaps this will happen next year.

Though it may technically still be possible this year, it's just bad taste to change the rules during the game because you dislike the results.

The next challenge is that ISC employees are excluded from prizes, which requires a further filtering step. Though I have this info, I didn't apply it.

2021-12-25 22:24:55 UTC - DC Leaderboard final update

Only 4 of 22 registered community members have completed all 25 exercises and reached 50 stars

My own contribution at pos. #3 was disqualified by using Embedded Python.

Updated win conditions:

3. All participants have two days (December 26-27) to make their repositories public. Winners will be announced on December 28.

4. All participants must be registered on the InterSystems Developer Community.

Hello, what if one solved one day (the 2 puzzles of the same day) on paper instead of writing code? Asking for a friend.

Happy holidays everyone! Another year of interesting problems and order of operation related errors ;)

Repository: https://github.com/projecteulerlover/AdventOfCode

ObjectScript quality status: https://community.objectscriptquality.com/dashboard?id=intersystems_iris_community%2FAdventOfCode

Oops, thought I had done this; done.

All code is also visible in the Code tab of the quality check:

https://community.objectscriptquality.com/code?id=intersystems_iris_community%2FAdventOfCode

Completed Solution:

Repository: https://github.com/rcemper/AoC2021-rcc

Description & Quality status: https://community.intersystems.com/post/aoc2021-rcc

Wow, this is very interesting. We can use Python inside IRIS? (e.g., https://github.com/rcemper/AoC2021-rcc/blob/master/src/dc/aoc2021/Day23…)

If I had known this ahead of time, this would have been a lot less painful...

YES, a pre-release was available in the first days of December.

So dropping a few days and converting to Embedded Python was worth the rewrite, since this is the future.

It's somewhat strange that probably the oldest participant took that path first.

Guess that's my plan for next year :) I guess I don't really follow ISC products for the rest of the year

you weren't the only one :)

Another really good analysis is to look at everybody's split times (how long between submitting part 1 and part 2). The API gives us the data.

Especially when there are cases where the first few people per problem took hours for the split and then there are a lot of people with sub-one-minute splits. I won't go through the effort of looking through their code to see whether they really only had to make a one-line change to solve the second part, but I would call that suspicious.
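A minimal Python sketch of that split-time analysis, assuming the private-leaderboard JSON layout with one `get_star_ts` timestamp per completed part. `split_times` is a hypothetical helper, not an AoC API. It returns, per day, each member's seconds between part 1 and part 2, fastest first.

```python
def split_times(leaderboard):
    """Seconds between earning part 1 and part 2, per day (sketch).

    Assumes the layout members -> {id: {"name", "completion_day_level":
    {day: {"1": {"get_star_ts": ...}, "2": {...}}}}}.
    Days where only part 1 was solved are skipped.
    """
    splits = {}
    for member_id, member in leaderboard["members"].items():
        # Anonymous users are assumed to have no name; fall back to the id.
        name = member.get("name") or f"(anonymous #{member_id})"
        for day, parts in member.get("completion_day_level", {}).items():
            if "1" in parts and "2" in parts:
                delta = (int(parts["2"]["get_star_ts"])
                         - int(parts["1"]["get_star_ts"]))
                splits.setdefault(int(day), []).append((delta, name))
    # Sort days numerically and each day's splits fastest first.
    return {day: sorted(entries) for day, entries in sorted(splits.items())}


sample = {"members": {
    "7": {"name": "a", "completion_day_level": {
        "3": {"1": {"get_star_ts": 1000}, "2": {"get_star_ts": 1030}}}},
    "8": {"name": "b", "completion_day_level": {
        "3": {"1": {"get_star_ts": 900}, "2": {"get_star_ts": 4500}},
        "4": {"1": {"get_star_ts": 5000}}}},
}}
print(split_times(sample))  # {3: [(30, 'a'), (3600, 'b')]}
```

A very short split alone proves nothing, since some puzzles really do need only a small change for part 2. It is a starting point for a closer look, not a verdict.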

After the holidays, could we get an update on the use of embedded Python for these contests?

I always got the feeling from InterSystems events like this that we are trying to push for greater product visibility to those outside the InterSystems/COS/MUMPS umbrella, and that these contests welcome new people to take part in these technologies. Please correct me if this assumption is wrong; if so, the rest of this post is just nonsensical rambling.

I don't fault anyone who used it during the contest; for example, I see from scrolling up that @FabianHaupt asked for clarification on additional rules for the code in this thread, which I guess could have been hinting at this feature (the question didn't receive any public follow-up). However, it feels strange to me that this is allowed and yet no announcement was made about it.

From my perspective, the first rule, which says "...present the code in InterSystems ObjectScript in UDL form...", would lead most new contestants to write in COS only, when it's more likely (from a probabilistic perspective) that they have more experience with Python and would have done much better with it. Obviously, Embedded Python in IRIS isn't a state secret, but I still feel that new users are at a disadvantage in knowing about it.

Hi @Kevin An! Embedded Python was not eligible for the contest.

It was an ObjectScript-only contest, as stated by @Anastasia Dyubaylo.

As for next year, we don't know yet whether it makes much sense to compete in Embedded Python, since AoC tasks can apparently be solved without deep use of the DBMS and/or globals, so it becomes a competition in plain Python.

Any advice on how we could make Embedded Python competition within AoC and keep the sense is much appreciated.

It feels weird to me that the rules should be changed to disallow Python for this year, as that would actively cost Robert Cemper his 3rd place prize, but of course that's up to the organizers.

As for your second point, I think it is correct that having the IRIS wrapper around pure Python code would be a bit pointless, and it would also be strange for InterSystems to sponsor such an event. I think the main selling point of solving these problems in COS is the flexibility of multidimensional arrays for tackling challenging and intricate problems.

Repository (for all years) can be found here: https://github.com/uvg/AdventOfCodeUvg

COS Code for 2021 : https://github.com/uvg/AdventOfCodeUvg/tree/master/2021/COS

ObjectScript quality status: https://community.objectscriptquality.com/dashboard?id=intersystems_iris_community%2FAdventOfCodeUvg

@Kevin An and @Yuval Golan!

Congratulations on your victory in the contest! Could you please submit both repositories to Open Exchange?

It's a pity that all the code is in MAC and INT routines, so no quality check is available; for now, it works only for CLS ObjectScript code.

Also, it's difficult to run and examine the code you submitted, as loading it into a Docker container takes a lot of manual steps.

It'd be great if you could add a Docker environment to the repo (like in the template) and, ideally, convert the code to CLS ObjectScript.

It's not a must, but it would be very convenient for the community.

Thanks, and congratulations!

Thank you @Evgeny Shvarov

Now with classes, you can find them on
