You don't indicate which product you are using (IRIS, Cache, Ensemble), which may affect some details. However, the basic code to load the form data and execute the POST call is the same in each.

You need an instance of %Net.HttpRequest. If you are in Ensemble, you would work through the adapter. For Cache or IRIS, create an instance of this class and configure it for the server endpoint.

Add your form data to the request by calling the InsertFormData method.

ex. do req.InsertFormData("NameFld", "NameValue")

To perform the request, call the Post method, passing the URL of the REST resource.

ex. set status = req.Post(resourceURL)

If you assign the Server and Port properties of the request, do not include them in the URL here; pass only the resource path.
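Putting the steps so far together, a minimal sketch might look like the following. The server name, port, and resource path are placeholders I made up for illustration, not values from your system, and the error handling is just one reasonable choice.

```objectscript
    // Create the request object (note the exact class name casing)
    set req = ##class(%Net.HttpRequest).%New()

    // Hypothetical endpoint: replace with your own server and port
    set req.Server = "api.example.com"
    set req.Port = 8080

    // Each call adds one name/value pair to the form body
    do req.InsertFormData("NameFld", "NameValue")

    // Server and Port are set above, so pass only the resource path
    // ("/api/resource" is a placeholder)
    set status = req.Post("/api/resource")

    // Post returns a %Status; report any error
    if $system.Status.IsError(status) {
        do $system.Status.DisplayError(status)
    }
```

If the endpoint uses HTTPS, you would also need to set the Https and SSLConfiguration properties of the request before calling Post.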

You can access the response in the HttpResponse property of the request. This will be an instance of the %Net.HttpResponse class.
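As a rough sketch of reading the response, assuming req is a %Net.HttpRequest on which Post has already returned a success status (the 32000-character chunk size is an arbitrary choice):

```objectscript
    // Assumes req.Post(...) has already completed successfully
    set resp = req.HttpResponse

    // HTTP status code returned by the server, e.g. 200
    write "HTTP status: ", resp.StatusCode, !
    write "Content-Type: ", resp.GetHeader("Content-Type"), !

    // The body is in the Data property, normally as a stream
    if $isobject(resp.Data) {
        while 'resp.Data.AtEnd {
            write resp.Data.Read(32000)
        }
    } else {
        write resp.Data
    }
```

Note that a success status from Post only means the HTTP exchange completed; check StatusCode to see whether the server accepted the form data.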

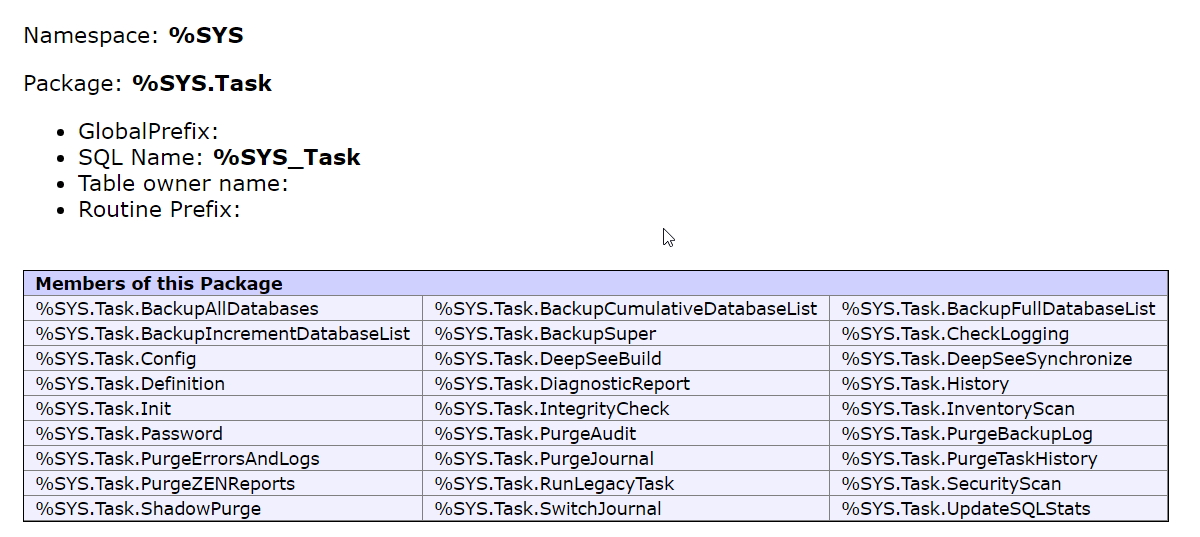

You can read more about the %Net.HttpRequest class in the class reference at https://docs.intersystems.com/latest/csp/documatic/%25CSP.Documatic.cls
