Continuous Delivery of your InterSystems solution using GitLab - Part IV: CD configuration

In this series of articles, I'd like to present and discuss several possible approaches toward software development with InterSystems technologies and GitLab. I will cover such topics as:

- Git 101

- Git flow (development process)

- GitLab installation

- GitLab Workflow

- Continuous Delivery

- GitLab installation and configuration

- GitLab CI/CD

In the first article, we covered Git basics, why a high-level understanding of Git concepts is important for modern software development, and how Git can be used to develop software.

In the second article, we covered GitLab Workflow - a complete software life cycle process and Continuous Delivery.

In the third article, we covered GitLab installation and configuration and connecting your environments to GitLab.

In this article, we'll finally write a CD configuration.

Plan

Environments

First of all, we need several environments and branches that correspond to them:

| Environment | Branch | Delivery | Who can commit | Who can merge |
|---|---|---|---|---|
| Test | master | Automatic | Developers, Owners | Developers, Owners |
| Preprod | preprod | Automatic | No one | Owners |
| Prod | prod | Semiautomatic (press button to deliver) | No one | Owners |

Development cycle

And as an example, we'll develop one new feature using GitLab flow and deliver it using GitLab CD.

- Feature is developed in a feature branch.

- Feature branch is reviewed and merged into the master branch.

- After a while (several features merged) master is merged into preprod

- After a while (user testing, etc.) preprod is merged into prod

Here's what it would look like (I have marked the parts that we need to develop for CD in italics):

- Development and testing
    - Developer commits the code for the new feature into a separate feature branch
    - After the feature becomes stable, the developer merges the feature branch into the master branch
    - *Code from the master branch is delivered to the Test environment, where it's loaded and tested*
- Delivery to the Preprod environment
    - Developer creates a merge request from the master branch into the preprod branch
    - Repository Owner, after some time, approves the merge request
    - *Code from the preprod branch is delivered to the Preprod environment*
- Delivery to the Prod environment
    - Developer creates a merge request from the preprod branch into the prod branch
    - Repository Owner, after some time, approves the merge request
    - Repository Owner presses the "Deploy" button
    - *Code from the prod branch is delivered to the Prod environment*

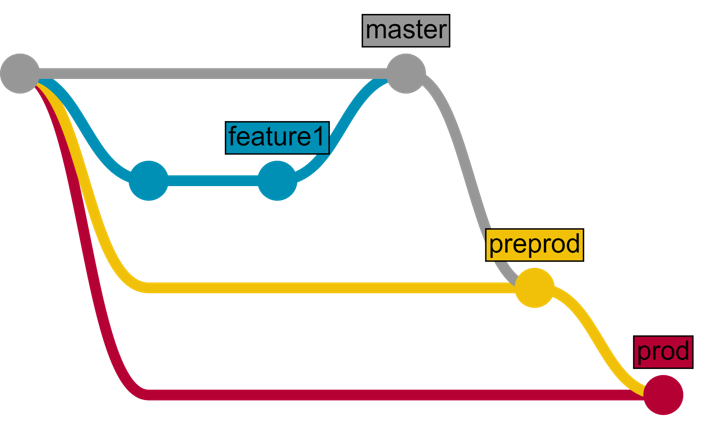

Or the same but in a graphic form:

Application

Our application consists of two parts:

- REST API developed on InterSystems platform

- Client JavaScript web application

Stages

From the plan above we can determine the stages that we need to define in our Continuous Delivery configuration:

- Load - to import server-side code into InterSystems IRIS

- Test - to test client and server code

- Package - to build client code

- Deploy - to "publish" client code using web server

Here's how it looks in the .gitlab-ci.yml configuration file:

    stages:
      - load
      - test
      - package
      - deploy

Scripts

Load

Next, let's define the scripts (see the scripts docs). First, let's define a script, load server, that loads server-side code:

    load server:
      environment:
        name: test
        url: http://test.hostname.com
      only:
        - master
      tags:
        - test
      stage: load
      script: csession IRIS "##class(isc.git.GitLab).load()"

What happens here?

- load server is a script name

- next, we describe the environment where this script runs

- only: master - tells GitLab that this script should be run only when there's a commit to master branch

- tags: test specifies that this script should only run on a runner which has test tag

- stage specifies stage for a script

- script defines code to execute. In our case, we call classmethod load from isc.git.GitLab class

Important note

For InterSystems IRIS replace csession with iris session.

For Windows use: irisdb -s ../mgr -U TEST "##class(isc.git.GitLab).load()"
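For reference, here is a minimal sketch of the same load job with the `iris session` replacement applied. It assumes an instance named IRIS and a namespace called TEST (borrowed from the Windows command above); both are placeholders to adjust for your setup.

```yaml
load server:
  environment:
    name: test
    url: http://test.hostname.com
  only:
    - master
  tags:
    - test
  stage: load
  # Assumption: instance IRIS, namespace TEST (selected with -U).
  script: iris session IRIS -U TEST "##class(isc.git.GitLab).load()"
```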

Now let's write the corresponding isc.git.GitLab class. All entry points in this class look like this:

    ClassMethod method()
    {
        try {
            // code
            halt
        } catch ex {
            write !,$System.Status.GetErrorText(ex.AsStatus()),!
            do $system.Process.Terminate(, 1)
        }
    }

Note that this method can end in two ways:

- by halting current process - that registers in GitLab as a successful completion

- by calling $system.Process.Terminate - which terminates the process abnormally and GitLab registers this as an error

That said, here's our load code:

    /// Do a full load
    /// do ##class(isc.git.GitLab).load()
    ClassMethod load()
    {
        try {
            set dir = ..getDir()
            do ..log("Importing dir " _ dir)
            do $system.OBJ.ImportDir(dir, ..getExtWildcard(), "c", .errors, 1)
            throw:$get(errors,0)'=0 ##class(%Exception.General).%New("Load error")
            halt
        } catch ex {
            write !,$System.Status.GetErrorText(ex.AsStatus()),!
            do $system.Process.Terminate(, 1)
        }
    }

Two utility methods are called:

- getExtWildcard - to get a list of relevant file extensions

- getDir - to get repository directory

How can we get the directory?

Before GitLab executes a script, it sets a number of environment variables. One of them is CI_PROJECT_DIR, the full path where the repository is cloned and where the job is run. We can easily get it in our getDir method:

    ClassMethod getDir() [ CodeMode = expression ]
    {
        ##class(%File).NormalizeDirectory($system.Util.GetEnviron("CI_PROJECT_DIR"))
    }
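If getDir comes back empty, it can help to confirm that the runner actually exposes these variables to the job. A small diagnostic job could look like the sketch below; the job name `show variables` is made up for illustration, and the syntax assumes a Linux shell runner.

```yaml
show variables:
  stage: load
  only:
    - master
  tags:
    - test
  script:
    # Predefined GitLab CI variables. On a Windows cmd runner the
    # equivalent reference would be %CI_PROJECT_DIR%.
    - echo "Project dir is $CI_PROJECT_DIR"
    - echo "Commit SHA is $CI_COMMIT_SHA"
```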

Tests

Here's the test script:

    load test:
      environment:
        name: test
        url: http://test.hostname.com
      only:
        - master
      tags:
        - test
      stage: test
      script: csession IRIS "##class(isc.git.GitLab).test()"
      artifacts:
        paths:
          - tests.html

What changed? The name and the script code, of course, but an artifacts section was also added. An artifact is a list of files and directories which are attached to a job after it completes successfully. In our case, after the tests are completed, we can generate an HTML page redirecting to the test results and make it available from GitLab.
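One consequence worth noting: since artifacts are collected on success by default, a failing test job would not publish tests.html. GitLab's `artifacts:when` keyword can change that. The variant below is only a sketch, assuming you want the report in both cases; the one-week retention is an arbitrary choice.

```yaml
load test:
  environment:
    name: test
    url: http://test.hostname.com
  only:
    - master
  tags:
    - test
  stage: test
  script: csession IRIS "##class(isc.git.GitLab).test()"
  artifacts:
    # Upload the report even when the job fails (the default is on_success).
    when: always
    # Assumption: keep reports for one week; tune or drop as needed.
    expire_in: 1 week
    paths:
      - tests.html
```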

Note that there's a lot of copy-paste from the load stage: the environment is the same. Script parts, such as environments, can be labeled separately and attached to a script. Let's define the test environment:

    .env_test: &env_test
      environment:
        name: test
        url: http://test.hostname.com
      only:
        - master
      tags:
        - test

Now our test script looks like this:

    load test:
      <<: *env_test
      script: csession IRIS "##class(isc.git.GitLab).test()"
      artifacts:
        paths:
          - tests.html

Next, let's execute the tests using the UnitTest framework.

    /// do ##class(isc.git.GitLab).test()
    ClassMethod test()
    {
        try {
            set tests = ##class(isc.git.Settings).getSetting("tests")
            if (tests'="") {
                set dir = ..getDir()
                set ^UnitTestRoot = dir
                $$$TOE(sc, ##class(%UnitTest.Manager).RunTest(tests, "/nodelete"))
                $$$TOE(sc, ..writeTestHTML())
                throw:'..isLastTestOk() ##class(%Exception.General).%New("Tests error")
            }
            halt
        } catch ex {
            do ..logException(ex)
            do $system.Process.Terminate(, 1)
        }
    }

The tests setting, in this case, is a path relative to the repository root where unit tests are stored. If it's empty, we skip the tests. The writeTestHTML method is used to output HTML with a redirect to the test results:

    ClassMethod writeTestHTML()
    {
        set text = ##class(%Dictionary.XDataDefinition).IDKEYOpen($classname(), "html").Data.Read()
        set text = $replace(text, "!!!", ..getURL())
        set file = ##class(%Stream.FileCharacter).%New()
        set name = ..getDir() _ "tests.html"
        do file.LinkToFile(name)
        do file.Write(text)
        quit file.%Save()
    }

    ClassMethod getURL()
    {
        set url = ##class(isc.git.Settings).getSetting("url")
        set url = url _ $system.CSP.GetDefaultApp("%SYS")
        set url = url_"/%25UnitTest.Portal.Indices.cls?Index="_ $g(^UnitTest.Result, 1) _ "&$NAMESPACE=" _ $zconvert($namespace,"O","URL")
        quit url
    }

    ClassMethod isLastTestOk() As %Boolean
    {
        set in = ##class(%UnitTest.Result.TestInstance).%OpenId(^UnitTest.Result)
        for i=1:1:in.TestSuites.Count() {
            #dim suite As %UnitTest.Result.TestSuite
            set suite = in.TestSuites.GetAt(i)
            return:suite.Status=0 $$$NO
        }
        quit $$$YES
    }

    XData html
    {
    <html lang="en-US">
    <head>
    <meta charset="UTF-8"/>
    <meta http-equiv="refresh" content="0; url=!!!"/>
    <script type="text/javascript">
    window.location.href = "!!!"
    </script>
    </head>
    <body>
    If you are not redirected automatically, follow this <a href='!!!'>link to tests</a>.
    </body>
    </html>
    }

Package

Our client is a simple HTML page:

    <html>
    <head>
    <script type="text/javascript">
    function initializePage() {
        var xhr = new XMLHttpRequest();
        var url = "${CI_ENVIRONMENT_URL}:57772/MyApp/version";
        xhr.open("GET", url, true);
        xhr.send();
        xhr.onloadend = function (data) {
            document.getElementById("version").innerHTML = "Version: " + this.response;
        };

        var xhr = new XMLHttpRequest();
        var url = "${CI_ENVIRONMENT_URL}:57772/MyApp/author";
        xhr.open("GET", url, true);
        xhr.send();
        xhr.onloadend = function (data) {
            document.getElementById("author").innerHTML = "Author: " + this.response;
        };
    }
    </script>
    </head>
    <body onload="initializePage()">
    <div id = "version"></div>
    <div id = "author"></div>
    </body>
    </html>

To build it, we need to replace ${CI_ENVIRONMENT_URL} with its value. Of course, a real-world application would probably require npm, but this is just an example. Here's the script:

    package client:
      <<: *env_test
      stage: package
      script: envsubst < client/index.html > index.html
      artifacts:
        paths:
          - index.html

Deploy

And finally, we deploy our client by copying index.html into the web server root directory.

    deploy client:
      <<: *env_test
      stage: deploy
      script: cp -f index.html /var/www/html/index.html

That's it!
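A caveat on the package step: called without arguments, envsubst replaces every `$NAME` or `${NAME}` reference it finds, and unset variables become empty strings. If the client code contains other dollar expressions (JavaScript template literals, for instance), the variables to substitute can be listed explicitly. A hedged variant of the job, repeating the `.env_test` anchor so the fragment stands on its own:

```yaml
.env_test: &env_test
  environment:
    name: test
    url: http://test.hostname.com
  only:
    - master
  tags:
    - test

package client:
  <<: *env_test
  stage: package
  # Only ${CI_ENVIRONMENT_URL} is substituted; the single quotes stop the
  # shell from expanding the name before envsubst receives it.
  script: envsubst '${CI_ENVIRONMENT_URL}' < client/index.html > index.html
  artifacts:
    paths:
      - index.html
```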

Several environments

What to do if you need to execute the same (or a similar) script in several environments? Script parts can also be labeled, so here's a sample configuration that loads code in the test and preprod environments:

    stages:
      - load
      - test

    .env_test: &env_test
      environment:
        name: test
        url: http://test.hostname.com
      only:
        - master
      tags:
        - test

    .env_preprod: &env_preprod
      environment:
        name: preprod
        url: http://preprod.hostname.com
      only:
        - preprod
      tags:
        - preprod

    .script_load: &script_load
      stage: load
      script: csession IRIS "##class(isc.git.GitLab).loadDiff()"

    load test:
      <<: *env_test
      <<: *script_load

    load preprod:
      <<: *env_preprod
      <<: *script_load

This way we can avoid copy-pasting the code.
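GitLab also offers the `extends` keyword as an alternative to YAML anchors. The sketch below rewrites the preprod job that way; it assumes a GitLab version that accepts several parents in `extends`, and it is not taken from the article's repository.

```yaml
.env_preprod:
  environment:
    name: preprod
    url: http://preprod.hostname.com
  only:
    - preprod
  tags:
    - preprod

.script_load:
  stage: load
  script: csession IRIS "##class(isc.git.GitLab).loadDiff()"

load preprod:
  # Hidden jobs (names starting with a dot) are merged in the order listed,
  # with later entries winning on conflicting keys.
  extends:
    - .env_preprod
    - .script_load
```

With `extends` the merge key does not have to be repeated inside a job, which some people find easier to read.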

Complete CD configuration is available here. It follows the original plan of moving code between test, preprod and prod environments.
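The linked configuration is the reference. Purely as an illustration of the "press button to deliver" row in the plan, a prod job could be gated with GitLab's `when: manual`. The host name, the runner tag and the job name below are assumptions that follow the test/preprod pattern.

```yaml
.env_prod: &env_prod
  environment:
    name: prod
    url: http://prod.hostname.com   # assumed host name
  only:
    - prod
  tags:
    - prod   # assumed runner tag

load prod:
  <<: *env_prod
  stage: load
  script: csession IRIS "##class(isc.git.GitLab).loadDiff()"
  # The job is created but not started until someone presses the
  # play button in the pipeline view.
  when: manual
```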

Conclusion

Continuous Delivery can be configured to automate any required development workflow.

Links

- Hooks repository (and sample configuration)

- Test repository

- Scripts docs

- Available environment variables

What's next

In the next article, we'll create a CD configuration that leverages the InterSystems IRIS Docker container.

Comments

Hi Eduard,

I had a look at your continuous delivery articles and found them awesome! I tried to set up a similar environment but I'm struggling with a detail... Hope you'll be able to help me out.

I currently have a working gitlab-runner installed on my Windows Laptop with a working Ensemble 2018.1.1 with the isc.gitlab package you provided.

    C:\Users\gena6950>csession ENS2K18 -U ENSCHSPROD1 "##class(isc.git.GitLab).test()"
    ===============================================================================
    Directory: C:\Gitlab-Runner\builds\ijGUv41q\0\ciussse-drit-srd\ensemble-continuous-integration-tests\Src\ENSCHS1\Tests\Unit\
    ===============================================================================
    [...]
    Use the following URL to view the result:
    http://10.225.31.79:8971/csp/sys/%25UnitTest.Portal.Indices.cls?Index=2…
    All PASSED
    C:\Users\gena6950>

I had to manually "alter" the .yml file because of a new bug with parentheses in the gitlab-runner shell commands (see https://gitlab.com/gitlab-org/gitlab-runner/issues/1941). The relevant parts of this file are below (the file itself is larger, but I think the rest is irrelevant). I put an "echo" there to see how the command was received by the runner.

    stages:
      - load
      - test
      - package

    variables:
      LOAD_DIFF_CMD: "##class(isc.git.GitLab).loadDiff()"
      TEST_CMD: "##class(isc.git.GitLab).test()"
      PACKAGE_CMD: "##class(isc.git.GitLab).package()"

    .script_test: &script_test
      stage: test
      script:
        - echo csession ENS2K18 -U ENSCHSPROD1 "%TEST_CMD%"
        - csession ENS2K18 -U ENSCHSPROD1 "%TEST_CMD%"
      artifacts:
        paths:
          - tests.html

And this is the output shown by GitLab:

    Running with gitlab-runner 11.7.0 (8bb608ff)
      on Laptop ACGendron ijGUv41q
    Using Shell executor...
    Running on CH05CHUSHDP1609...
    Fetching changes...
    Removing tests.html
    HEAD is now at b1ef284 Ajout du fichier de config du pipeline Gitlab
    Checking out b1ef284e as master...
    Skipping Git submodules setup
    $ echo csession ENS2K18 -U ENSCHSPROD1 "%TEST_CMD%"
    csession ENS2K18 -U ENSCHSPROD1 "##class(isc.git.GitLab).test()"
    $ csession ENS2K18 -U ENSCHSPROD1 "%TEST_CMD%"
    <NOTOPEN>>
    ERROR: Job failed: exit status 1

I'm pretty sure it must be a small thing but I can't put my finger on it!

Hope you'll be able to help!

Kind regards

Andre-Claude

I have not tested the code on Windows, but here's my idea.

As you can see in the code for the test method, in case of exceptions I end all my processes with

    do $system.Process.Terminate(, 1)

It seems this path is getting hit.

How to fix this exception:

- Check that the test method actually gets called. Write to a global in the first line.

- In the exception handler, add do ex.Log() and check the application error log to see the exception details.

Thank you, I will have a look at that. I'm also replicating my setup on a Red Hat gitlab-runner, and I'll update this post if I find my way out of this on Windows. I also noticed that the environment variables were not passed in a way that csession understands: the $system.Util.GetEnviron("CI_PROJECT_DIR") and $system.Util.GetEnviron("CI_COMMIT_SHA") calls both return an empty string.

Perhaps the <NOTOPEN> is related to the way the stdout is read in windows.

Solution for this issue was posted here.

Thanks for this detailed article. I have a few questions in mind (you have probably faced or answered them when you implemented this solution):

1) Are these CI/CD builds on the corresponding servers clean builds? If a commit deletes 4 or 5 classes, then unless we do a "delete" of exclusive/all files on the servers, a regular load from the sandbox may not overwrite or delete the files that are present on the server where we are building?

2) Are the Ensemble Production classes stored as one production file but in different versions (with different settings) in the respective branches? Or is there a dedicated production file for each branch/server, so developers merge items (Business Service, Process, etc.) as they move them from one branch to another? What is the best approach that supports the above model?

Per your second question, best practice is generally to use System Defaults which are set in your Namespace and store the production settings (rather than storing them in the Production class). This allows you to prevent having to have differences in the Production class between branches.

I agree, but unfortunately Portal's edit forms for config items always apply settings into the production class (the XData block). Even worse, Portal ignores the source control status of the production class, so you can't prevent these changes. Portal users have to go elsewhere to add/edit System Defaults values. It's far from obvious, nor is it easy. And because they don't have to (i.e. the users can just make the edit in the Portal panel and save it) nobody does. We raised all this years ago, but so far it's remained unaddressed.

See also https://community.intersystems.com/post/system-default-settings-versus-…

Interesting questions.

- There are several ways to achieve clean and pseudo-clean builds:
    - Containers. Clean builds every time. Next articles in the series explore how containers can be used for CI/CD.
    - Hooks. Currently I implemented one-time and every-time hooks before and after build. They can be used to do deletion, configuration, etc.
    - Recreate. Add an action to delete before build:
        - DBs
        - Namespaces
        - Roles
        - WebApps
        - Anything else you created
- I agree with @Ben Spead here. System default settings are the way to go. If you're working outside of Ensemble architecture, you can create a small settings class which gets the data from a global/table and use that. Example.

Hi Eduard! Thank you for this series. It has been very useful for understanding how to structure GitLab-based CI/CD around InterSystems environments.

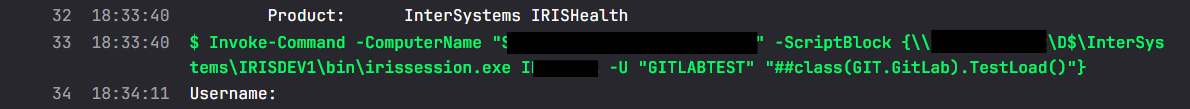

I’m trying to adapt the CD approach from this post to InterSystems IRIS 2025.1 on Windows, and I’ve been running into some trouble with the command-line execution side. In particular, I noticed the article’s examples are centered around Caché/Linux-style usage, with the note for IRIS and Windows. In my case, I can execute my routine interactively through the terminal path, but when I try to use run / runw from a GitLab Runner using the Shell executor, the process completes differently and the code does not appear to execute as expected.

Have you seen this behavior before on Windows with IRIS? Is there a different command you would recommend today for IRIS 2025.1 on Windows in a GitLab CD script, especially for non-interactive execution from a runner?

Also, have you ever implemented CD using functionality from the Embedded Git package from Open Exchange instead of a pure import/load approach? For example, something conceptually similar to calling an Embedded Git API method from a CI/CD job so the server pulls the approved code from the repository.

Any guidance on the recommended command or pattern for modern IRIS on Windows would be greatly appreciated. Thanks again for putting this series together.

You can run irissession:

    C:\InterSystems\IRIS\bin\irissession.exe iris -U "%SYS" "classmethod"

As for EmbeddedGit you can commit using EmbeddedGit and deploy using gitlab runners. I suppose you can call EmbeddedGit methods to import code, probably SourceControl.Git.Utils:ImportAll.

irissession prompts for credentials. Is that a server setting?

Yes, you can use OS auth to bypass it in a secure manner.

It works now. Thanks!