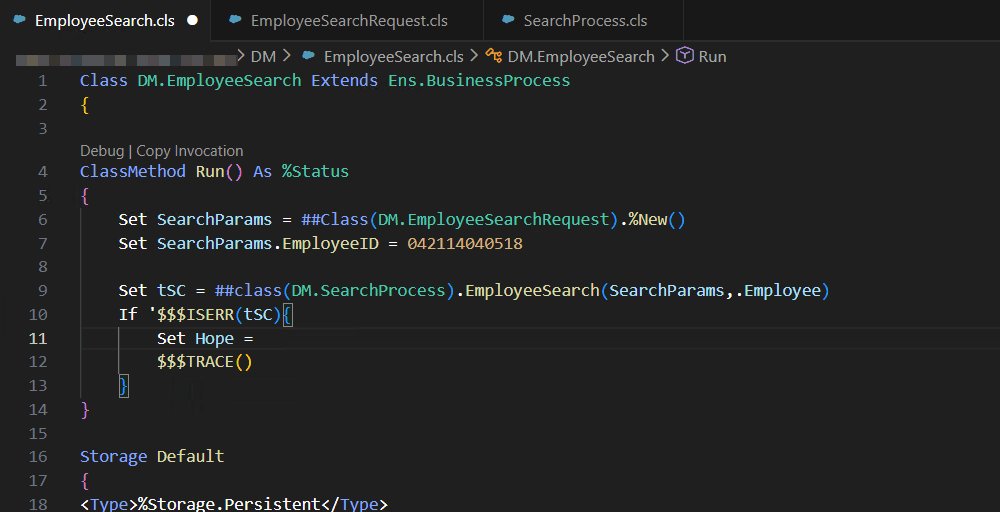

The method shown in my examples is "%Next", but your code is missing the leading "%".

See this more complete example:

    Class Demo.Operations.GetResultsFromLookupTable Extends Ens.BusinessOperation
    {

    Method OnMessage(pRequest As Ens.Request, Output pResponse As Ens.StringContainer) As %Status
    {
        Set tSC = $$$OK
        Set tStatement = ##class(%SQL.Statement).%New()
        Set tQuery = "SELECT * FROM Ens_Util.LookupTable WHERE TableName = ?"
        Set tTable = "testLookupTable"
        // Throws an exception if the query cannot be prepared
        $$$THROWONERROR(tSC,tStatement.%Prepare(tQuery))
        // tTable is bound to the ? placeholder in the query
        Set tResult = tStatement.%Execute(tTable)
        Set count = 0
        // Note the leading % on %Next()
        While tResult.%Next() {
            //Do what you need to with the results here
            $$$TRACE("Result: "_tResult.KeyName_", "_tResult.DataValue)
            Do $INCREMENT(count)
        }
        Set pResponse = ##class(Ens.StringContainer).%New()
        Set pResponse.StringValue = count_" results found."
        Quit tSC
    }

    }

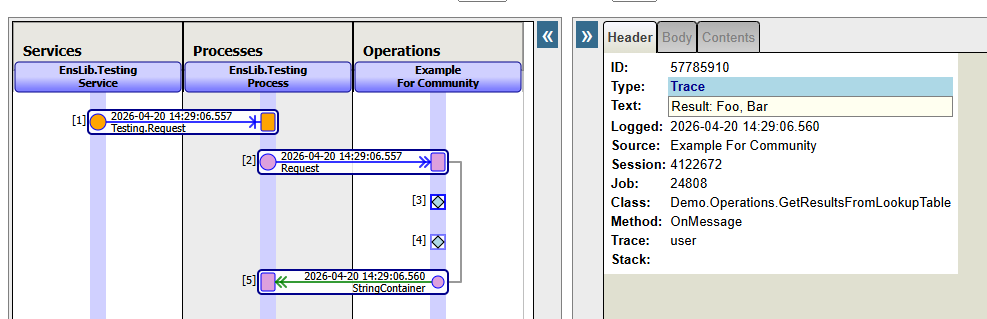

Which gives this in a trace:

Also, please note that my initial response had a mistake in the column names. I wrote "KeyName" and "KeyValue", but the second should have been "DataValue", as you already had in your first screenshot.
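To make the column names explicit, here is a minimal sketch that selects only "KeyName" and "DataValue" and reads them with %Get() instead of property syntax. The class name Demo.Util.LookupDump and the method name Dump are hypothetical and only there for illustration. The %SQLCODE check is an extra safeguard I have added, not something from the example above.

```objectscript
/// Hypothetical helper class, for illustration only
Class Demo.Util.LookupDump Extends %RegisteredObject
{

/// Writes each key/value pair of one lookup table to the current device
ClassMethod Dump(pTable As %String = "testLookupTable") As %Status
{
    Set tStatement = ##class(%SQL.Statement).%New()
    Set tSC = tStatement.%Prepare("SELECT KeyName, DataValue FROM Ens_Util.LookupTable WHERE TableName = ?")
    If $$$ISERR(tSC) { Return tSC }

    Set tResult = tStatement.%Execute(pTable)
    // A negative %SQLCODE means the query failed at execution time
    If tResult.%SQLCODE < 0 {
        Return $$$ERROR($$$GeneralError, "SQL error "_tResult.%SQLCODE_": "_tResult.%Message)
    }

    // Note the leading % on %Next() and %Get()
    While tResult.%Next() {
        // %Get("KeyName") reads the same column as tResult.KeyName
        Write tResult.%Get("KeyName"), " = ", tResult.%Get("DataValue"), !
    }
    Return $$$OK
}

}
```

You could try it from a terminal in the production's namespace with `Do ##class(Demo.Util.LookupDump).Dump("testLookupTable")`. A misspelled column such as "KeyValue" would fail at %Prepare here, because the columns are named in the query rather than pulled in with SELECT *.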
