Totally agree.

If anyone is looking to use InterSystems IRIS's DocDB in their multi-model solution, I posted this article, which shares a Postman Collection of sample REST API calls. I also added a Relational/SQL angle on the data to the article.

Hope you find it useful:

https://community.intersystems.com/post/document-database-docdb-sample-…

Indeed Nicky, the size of the Audit database is a serious concern.

One option you might want to consider (it entails some coding, but might be worth your time given your sizing concerns) is to have a task that does "housekeeping" for your events.

Say you have a lot of various SQL events (you mention INSERTs, and probably also SELECTs) that you have no interest in, and one or two that you do (like DELETE). You could have a task that runs periodically (at whatever interval makes sense for you, even every hour if that's relevant). This task would go through the Audit events, specifically the SQL ones you just turned on, and look at the Description field returned, which should include the type of statement (e.g. SELECT or DELETE). Then every event that is not a DELETE, for example, can be deleted (removed from the Audit Log, or exported and then deleted if you want to save it aside).

You could query the Audit by using the %SYS.Audit relevant class queries, "List" for example, filtering on the related event, though Description is just returned and not filtered in advance.

Or you could use direct SQL, for example (this time for looking for the DELETEs) -

SELECT ID, EventSource, EventType, Event, Description FROM %SYS.Audit

WHERE (Event='XDBCStatement') AND (Description %STARTSWITH 'SQL DELETE')

This would return, for example:

| ID | EventSource | EventType | Event | Description |
|---|---|---|---|---|
| 2021-01-10 08:23:24.420\|\|MYMACHINE:MYINSTANCE\|\|2386 | %System | %SQL | XDBCStatement | SQL DELETE Statement |

Then you could of course also look for what is not DELETEs...

SELECT ID, EventSource, EventType, Event, Description FROM %SYS.Audit

WHERE (Event='XDBCStatement') AND NOT (Description %STARTSWITH 'SQL DELETE')

This could return, for example:

| ID | EventSource | EventType | Event | Description |
|---|---|---|---|---|
| 2021-01-10 08:32:53.014\|\|MYMACHINE:MYINSTANCE\|\|2388 | %System | %SQL | XDBCStatement | SQL SELECT Statement |

Then you could delete it, for example:

DELETE FROM %SYS.Audit

WHERE ID='2021-01-10 08:32:53.014||MYMACHINE:MYINSTANCE||2388'

[Of course you could combine these last two statements into one - e.g. DELETE FROM ... WHERE ID IN (...)]
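To make the housekeeping idea a bit more concrete, here is a minimal Python sketch of just the selection logic. It does not connect to IRIS: the `rows` list stands in for the result of the SELECT above, and the function names `ids_to_purge` and `build_delete` are hypothetical helpers made up for this illustration. Running the resulting statement (via ODBC/JDBC or a scheduled task) is left out.

```python
from typing import Iterable, List, Tuple

# Statements worth keeping in the audit log, per the example in this thread.
KEEP_PREFIX = "SQL DELETE"


def ids_to_purge(rows: Iterable[dict], keep_prefix: str = KEEP_PREFIX) -> List[str]:
    """Return IDs of XDBCStatement events whose Description does not start with keep_prefix."""
    return [
        row["ID"]
        for row in rows
        if row["Event"] == "XDBCStatement"
        and not row["Description"].startswith(keep_prefix)
    ]


def build_delete(ids: List[str]) -> Tuple[str, List[str]]:
    """Build one parameterized DELETE covering all given audit IDs."""
    if not ids:
        raise ValueError("nothing to delete")
    placeholders = ", ".join("?" for _ in ids)
    return f"DELETE FROM %SYS.Audit WHERE ID IN ({placeholders})", list(ids)


# Sample rows copied from the two query results shown in this thread.
rows = [
    {
        "ID": "2021-01-10 08:23:24.420||MYMACHINE:MYINSTANCE||2386",
        "Event": "XDBCStatement",
        "Description": "SQL DELETE Statement",
    },
    {
        "ID": "2021-01-10 08:32:53.014||MYMACHINE:MYINSTANCE||2388",
        "Event": "XDBCStatement",
        "Description": "SQL SELECT Statement",
    },
]

purge = ids_to_purge(rows)
sql, params = build_delete(purge)
print(sql)
print(params)
```

Using `?` placeholders rather than pasting the IDs into the statement text avoids quoting problems with the `||` separators in the audit ID.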

Hope this helps...

Nicky,

You can use the %System->%SQL family of audit events.

From the docs:

| Event Source | Event Type and Event | Occurs When | Event Data Contents | Default Status |
|---|---|---|---|---|
| %System | %SQL/DynamicStatement | A dynamic SQL call is executed. | The statement text and the values of any host-variable arguments passed to it. If the total length of the statement and its parameters exceeds 3,632,952 characters, the event data is truncated. | Off |
| %System | %SQL/EmbeddedStatement | An embedded SQL call is executed. See below for usage details. | The statement text and the values of any host-variable arguments passed to it. If the total length of the statement and its parameters exceeds 3,632,952 characters, the event data is truncated. | Off |
| %System | %SQL/XDBCStatement | A remote SQL call is executed using ODBC or JDBC. | The statement text and the values of any host-variable arguments passed to it. If the total length of the statement and its parameters exceeds 3,632,952 characters, the event data is truncated. | Off |

If you're interested only in JDBC, you can stick with the last event above (XDBCStatement).

Note, while we're on the subject, if you're looking for a "fancier" kind of auditing, for example to log changed fields (before and after values), you can check this thread by @Fabio.Goncalves.

For differences between InterSystems Caché and InterSystems IRIS Data Platform I suggest you have a look here.

Specifically, you can find there a table comparing the products (including InterSystems Ensemble), as well as a document going into detail about various new features and capabilities.

If you want to perform a PoC for a new system definitely use InterSystems IRIS.

Important Note -

Some classes - for example the built-in Business Process class (Ens.BusinessProcessBPL) - already have a specific implementation of an %OnDelete method, and hence adding the DeleteHelper as a SuperClass would override the "default" %OnDelete that class might have (depending on the order of inheritance defined in the class).

So for example a BPL-based Business Process would have this %OnDelete -

ClassMethod %OnDelete(oid As %ObjectIdentity) As %Status

{

Set tId=$$$oidPrimary(oid)

&sql(SELECT %Context INTO :tContext FROM Ens.BusinessProcessBPL WHERE %ID = :tId)

If 'SQLCODE {

&sql(DELETE from Ens_BP.Context where %ID = :tContext)

&sql(DELETE from Ens_BP.Thread where %Process = :tId)

}

Quit $$$OK

}

Note this takes care of deleting the related Context and Thread data when deleting the Business Process instance. This is the desired behavior. Without this lots of "left-over" data could remain.

Note also that in fact the "AddHelper" class that assists in adding the DeleteHelper as a Super Class to various classes - does not add the DeleteHelper to Business Process classes -

// Will ignore classes that are Business Hosts (i.e. Business Service, Business Process or Business Operation)

// As they do not require this handling

If $ClassMethod(className,"%IsA","Ens.Host")||$ClassMethod(className,"%IsA","Ens.Rule.Definition") {

Continue

}

But if you add the DeleteHelper manually to your classes, please take care to check whether the class already has an %OnDelete() method, and decide how you'd like to handle that.

A good option is to simply call the original %OnDelete from the newly generated %OnDelete() method, by calling ##super().

Thanks to @Suriya Narayanan Vadivel Murugan for helping a customer diagnose this situation!

Good point!

Thanks for adding this related BP consideration Eduard.

Hi Murillo,

I replied to your similar comment on this discussion thread. In fact I referenced this discussion there as well...

So you'll find a more detailed answer there.

In any case - using both utilities could be helpful for your scenario going ahead - the DeleteHelper to hopefully avoid these kinds of cases, and this utility to validate you indeed don't have any "Purge leaks".

Hi Murillo,

Happy you found interest in this utility.

The situation you described is in fact the classic scenario I had in mind when I built this utility, so it could definitely help.

Here are a few clarifications though –

As I mentioned in my original post above, in “relatively” newer versions of Ensemble (since 2017.1), and definitely in InterSystems IRIS, within the SOAP Wizard there is a checkbox that if you check, the auto-generated classes will include a similar %OnDelete method to the one generated by my utility. And therefore the built-in Purge Task will take care of deleting this data (going ahead that is… see more about this in the next comment).

So in these versions, for this specific scenario (classes generated by the SOAP Wizard, as well as by the XML Schema Wizard), you don’t have to use my utility. For other cases, where you have custom Persistent classes with inter-relations, my utility would still be helpful.

This %OnDelete method (generated by my utility, or by checking the checkbox in the Wizard mentioned above) takes care of deleting these objects when the Message Body is deleted. But if the Message Body has already been deleted (which seems like your case), this would not help (directly) retroactively.

It would still be recommended to add it, for Purges happening going ahead (and for another consideration mentioned soon), but just by adding this, the old data accumulated will not get auto-magically deleted.

If indeed you have this old data (of objects pointed to by older, previously purged Message Bodies) accumulated then you’ll have to take care of deleting it programmatically.

I won’t get into too many details about how to do this, but here are a few general words of background –

The general structure of Ensemble Messages (in this context) is:

Message Header -> Message Body [ -> Possible other Persistent objects referenced at; and potentially other levels of more object hierarchy]

The built-in Purge Task will delete the Message Header along with the Message Bodies (assuming you checked the “Include Bodies” checkbox in the Purge Task definition, which in 99.99% of the cases I’ve seen should be checked). But it will not delete other Persistent objects the Message Body was referencing, at least not “out-of-the-box” (that’s where the %OnDelete method comes into play).

So if your Message Headers and Bodies referring to the “other objects” were already deleted, in order to delete the “not referenced anymore” objects, you’d need to find the last ID of these objects (at the top most level of the object hierarchy) that still has a reference, and delete those under that ID (assuming an accumulative running integer ID for these objects).
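As a rough illustration of that cutoff idea, here is a small Python sketch. It reads "the last ID that still has a reference" as the lowest ID still referenced by a remaining Message Body, which is an interpretation on my part, and it works on plain lists of integers rather than on real Ensemble data. The function name `orphaned_ids` is hypothetical.

```python
from typing import Iterable, List


def orphaned_ids(all_ids: Iterable[int], referenced_ids: Iterable[int]) -> List[int]:
    """Return the IDs that sit below the lowest ID still referenced.

    Assumes IDs are assigned as an ever-increasing integer, so anything
    below the oldest still-referenced object belonged to purged messages.
    """
    referenced = set(referenced_ids)
    if not referenced:
        # Without any remaining reference there is no safe cutoff to derive.
        raise ValueError("no referenced IDs left; cutoff cannot be determined")
    cutoff = min(referenced)
    return sorted(i for i in all_ids if i < cutoff)


# Objects 1..10 exist; only 7, 9 and 10 are still referenced by Message Bodies.
print(orphaned_ids(range(1, 11), [7, 9, 10]))
```

Note that ID 8 in this example is left alone even though nothing references it: it is above the cutoff, so it could belong to a message that is still being processed.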

I believe the WRC (InterSystems Support – Worldwide Response Center) have a utility built for these kinds of cases (at least scenarios), so I recommend reaching out to the WRC for their assistance, if this is your situation.

Note that if you added my %OnDelete to your classes, then finding just the top-level object in the hierarchy, the one(s) that the Message Body referenced, would be enough to delete, since the %OnDelete will take care of deleting the rest of “the tree” of objects. That’s why I said above that it won’t help the situation of this “lingering” data all by itself, but it could help.

As you can see finding yourself in the situation of having accumulated old data that is not too straightforward to delete, is something you’d very much want to avoid. That is why I also built another utility that helps in validating, during development/testing stages, that there are no such “Purge leaks” in your interfaces.

In addition, this tool also helps provide an estimate of how much disk space (for database growth and journaling) your interface will require.

You can check this out here.

Hope this helps.

Thanks Evgeny, understood.

So you're saying: "InterSystems spec-first /api/mgmnt/ service generates such an endpoint." - that means that if I used the spec-first approach (which I did, I used ^%REST) I should have this /_spec endpoint? Because that didn't seem to happen...

Thanks for this tip Evgeny.

Indeed this /_spec convention pointing to the Swagger spec does seem convenient, I wasn't aware this was part of the Open API Specification (in fact I didn't find this mentioned in the Spec...).

I actually saw the method you mentioned when I was looking at the rest template provided as part of the previous contest, and thought of using it, but eventually since I used the spec-first approach, and this method you mentioned is currently (at least as-is) implemented assuming you use a code-first approach, I opted not to, and to have the same functionality (a REST API end-point providing the Swagger spec) via our already built-in API Mgmt. REST API (which I mentioned above).

I could of course consider adding something similar in the future.

Thanks Evgeny.

Well no error info is a little hard to work with...

But (un)fortunately I was able to reproduce this (though earlier on in the process I tested with the test ZPM registry and it seemed to work fine...).

In any case I see (as I mentioned in my previous reply, it was worth looking there) that the UI Web Application was defined with an unauthenticated authentication method, only.

Even though the Module.xml file (link from previous version) defines it with Authentication...

Indeed when I look at the -verbose output of the ZPM install I see different behavior in each of the two CSP Apps being installed.

Even though both define this -

PasswordAuthEnabled="1"

UnauthenticatedEnabled="0"

For the REST app I see this:

Creating Web Application /pbuttons

AutheEnabled: 32

AuthenticationMethods:

Which is correct.

But for the 2nd app I see this:

Creating Web Application /pButtonsUI

AuthenticationMethods:

Note there is no "AutheEnabled: 32", which it should have.

I edited some of the code within the ZPM class (%ZPM.PackageManager.Developer.Processor.CSPApplication) and saw that indeed the related properties (PasswordAuthEnabled & UnauthenticatedEnabled) were empty...

Playing around and comparing with some other module.xml's, I found that perhaps the order of the values makes a difference, so I changed:

<CSPApplication

Url="/pButtonsUI"

Path="/src/pButtonsAppCSP"

Directory="{$cspdir}/pButtonsUI"

PasswordAuthEnabled="1"

UnauthenticatedEnabled="0"

ServeFiles="1"

Recurse="1"

CookiePath="/pButtonsUI"

/>

to be:

<CSPApplication

Url="/pButtonsUI"

ServeFiles="1"

Recurse="1"

CookiePath="/pButtonsUI"

UseCookies="2"

PasswordAuthEnabled="1"

UnauthenticatedEnabled="0"

Path="/src/pButtonsAppCSP"

Directory="{$cspdir}/pButtonsUI"

/>

And now when I run the zpm install I see this:

Creating Web Application /pButtonsUI

AutheEnabled: 32

AuthenticationMethods:

As expected.

So I pushed this update to github and hopefully this should work for you now.
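For anyone investigating this further, a quick way to compare the two tags independently of attribute order is to parse them with a standard XML parser, which treats attribute order as insignificant. The Python sketch below does that with the two tags exactly as quoted above. It says nothing about how ZPM itself reads the tag; it only shows what differs in content between the two versions.

```python
import xml.etree.ElementTree as ET

# The tag as it was originally defined in Module.xml (copied from above).
BEFORE = """<CSPApplication
  Url="/pButtonsUI"
  Path="/src/pButtonsAppCSP"
  Directory="{$cspdir}/pButtonsUI"
  PasswordAuthEnabled="1"
  UnauthenticatedEnabled="0"
  ServeFiles="1"
  Recurse="1"
  CookiePath="/pButtonsUI"
/>"""

# The tag after the change (copied from above).
AFTER = """<CSPApplication
  Url="/pButtonsUI"
  ServeFiles="1"
  Recurse="1"
  CookiePath="/pButtonsUI"
  UseCookies="2"
  PasswordAuthEnabled="1"
  UnauthenticatedEnabled="0"
  Path="/src/pButtonsAppCSP"
  Directory="{$cspdir}/pButtonsUI"
/>"""

before = ET.fromstring(BEFORE).attrib
after = ET.fromstring(AFTER).attrib

# Attributes are compared as dictionaries, so their order plays no role here.
added = {k: after[k] for k in after.keys() - before.keys()}
removed = {k: before[k] for k in before.keys() - after.keys()}
changed = {
    k: (before[k], after[k])
    for k in before.keys() & after.keys()
    if before[k] != after[k]
}

print("added:", added)
print("removed:", removed)
print("changed:", changed)
```

Besides the reordering, the second version also carries a UseCookies="2" attribute that the first one lacks, so it may be worth ruling that out as a factor when checking whether order is really what matters.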

In any case -

(a) I would recommend investigating why this was the behavior - is it by design that the order of these attributes matters? If not - we should fix it, and if yes - we should document it.

(b) and regarding documentation - I would recommend to document the actual attributes of this CSPApplication tag. In the current documentation there is a simple reference to the %Installer tags, but in reality though they are similar they are still different in some cases... so we should either indeed adhere to those, or document the ones that Module.xml actually uses.

For example, for relevance to the current discussion - the CSPApplication tag in our docs (referred to by the ZPM Module.xml docs) has an AuthenticationMethods attribute while the Module.xml has a combination of others instead.

Another example - Module.xml has a Path attribute and %Installer does not.

Let me know if this now works, and what you think about what I observed.

FYI

The latest version available in the Open Exchange now also supports ZPM (Package Manager) installation.

Thanks for trying Evgeny,

Regarding the error page you are getting in the UI I believe it's pointing you to the Application Error Log in the System Mgmt. Portal (right? the screenshot above is cut...), so try and look there and let me know what you find.

One place to verify things are generally setup ok for the web side, is to see the Web Application was created and defined correctly (including authentication, pointing to the correct physical folder, etc.).

Let me know what you find.

Regarding the Swagger/Open API spec - you can find it in any of these -

1. A json file under the /swagger folder (as mentioned towards the end of the Readme). I used this for easier editing of the spec within VSCode with the relevant plugin.

2. The .spec class /src/zpButtons/REST/spec.cls

3. Using our built-in API Mgmt. REST API, in this case the URL would be, for example:

http://localhost:52773/api/mgmnt/v2/%25SYS/zpButtons.API.REST

With either of these you can take the JSON and view it via the Swagger Editor - https://editor.swagger.io/

Let me know if this is what you meant.

Note for more information about the pButtons/SystemPerformance utility see -

From Documentation:

https://docs.intersystems.com/irislatest/csp/docbook/DocBook.UI.Page.cls?KEY=GCM_systemperf

From the Developer Community (mostly by @Murray Oldfield):

https://community.intersystems.com/post/intersystems-data-platforms-and-performance-%E2%80%93-part-1

https://community.intersystems.com/post/intersystems-data-platforms-and-performance-%E2%80%93-how-update-pbuttons

https://community.intersystems.com/post/extracting-pbuttons-data-csv-file-easy-charting

https://community.intersystems.com/post/yape-yet-another-pbuttons-extractor-and-automatically-create-charts

https://community.intersystems.com/post/extracting-pbuttons-data-csv-file-windows

Hi Michel,

Are you using DICOM interfaces?

I believe the proper way to do this is not by editing the Production class XData, but rather by using the icon/button you can see in the image above, with the small light-blue arrow:

When hovering over this icon you can see the text: "Select settings defaults".

See details of how to use this here from the Docs.

In your case you would check the checkbox there for the "try" setting, press the "Restore Defaults" button at the bottom of the dialog, and then press Apply on the Settings tab.

If, as you mention the purpose of this logging is debugging, then I would recommend going with $$$TRACE vs. $$$LOGINFO. This will allow you to turn on and off tracing without making code changes. Whereas if you go with $$$LOGINFO it's always there unless you remove it (or comment it out), then you need to compile, and then if you need it once more...

See also this related article.

Regarding your question on the *.impl generated class -

if you already added code to this class and later update the swagger and consume it again - indeed the code you added will remain.

If you review the video recording of the Global Summit Solution Developer Conference session I mentioned above - you can see a live demonstration of this specific case. [I recommend you see all of it, but this particular part starts at about 33:30]

Looks great David!

I think for the "background" part this Global Summit presentation - "API Design for REST" (by @Michael Smart) could be helpful.

See also using the ^SECURITY utility (for manual export vs. programmatic which is possible via the Security package classes API).

For example:

%SYS>do ^SECURITY

1) User setup
2) Role setup
3) Service setup
4) Resource setup
5) Application setup
6) Auditing setup
7) Domain setup
8) SSL configuration setup
9) Mobile phone service provider setup
10) OpenAM Identity Services setup
11) Encryption key setup
12) System parameter setup
13) X509 User setup
15) Exit

Option? 2

1) Create role
2) Edit role
3) List roles
4) Detailed list roles
5) Delete role
6) Export roles
7) Import roles
8) Exit

Option? 6

Export which roles? * =>

If I understood the question correctly I think there might be some confusion...

Indeed internally Ensemble stores the Message Header times (TimeCreated & TimeProcessed) in a UTC time (per the considerations Robert mentioned) but when these times are displayed in the Message Viewer they go through conversion and are displayed in local time.

So if you would open the object via code, or run an SQL query directly, you would be dependent on running the relevant LogicalToDisplay() or LogicalToOdbc() methods for objects, or using the ODBC or Display runtime modes, but on the default Message Viewer web page these times should appear local.

You can examine this by doing a simple select on the Message Header table and you can see the difference between the Logical and Display modes.

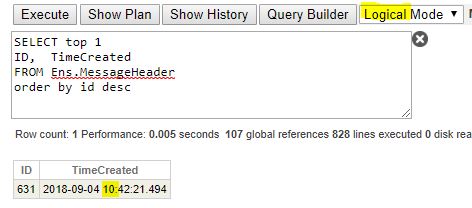

For example, for a certain message, the Logical (internal UTC) time is 10:42 -

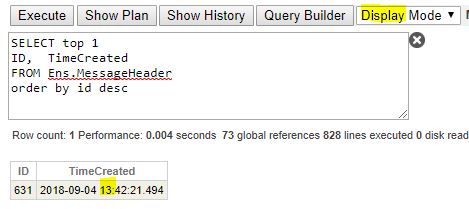

And now the same time using Display mode, the time is 13:42 -

And compare this with what you see in the Message Viewer, you see 13:42 -

and -

Note if you take a look at the Ens.DataType.UTC data-type class you can see its conversion methods.

For example the LogicalToDisplay() method.

Here's an example of what it looks like when its code runs.

In the following code I show my current local time (using $H, which takes into account Time Zone and Daylight Saving), then the current general time (using $ZTS, which is per GMT with no Daylight Saving). Then I apply the conversion, first explicitly with the code the method runs, and then by calling the method itself.

TEST>set currentLocalTime = $ZDateTime($Horolog,3,,3)

TEST>set currentGeneralTime = $ZDateTime($ZTimeStamp,3,,3)

TEST>write currentLocalTime

2018-09-20 10:46:07.000

TEST>write currentGeneralTime

2018-09-20 07:46:15.107

TEST>write $zdatetime($zdTH($zdatetimeh(currentGeneralTime,3,,,,,,,,0),-3),3,,3)

2018-09-20 10:46:15.107

TEST>write ##class(Ens.DataType.UTC).LogicalToDisplay(currentGeneralTime)

2018-09-20 10:46:15.107

See the -3 argument in the $ZDTH function call, from the docs this does the following -

$ZDATETIMEH takes a datetime value specified in $ZTIMESTAMP internal format, converts that value from UTC Universal time to local time, and returns the resulting value in the same internal format
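For readers less familiar with ObjectScript, the same UTC-to-local step can be sketched in Python. This is only an analogy, not what Ensemble runs: the fixed UTC+3 offset is an assumption taken from the terminal example above (07:46 UTC shown as 10:46 local), and the function name `utc_to_local_display` is made up for this illustration.

```python
from datetime import datetime, timedelta, timezone

# Assumption: the local zone in the example above is a fixed UTC+3.
LOCAL_TZ = timezone(timedelta(hours=3))


def utc_to_local_display(utc_text: str, local_tz: timezone = LOCAL_TZ) -> str:
    """Convert a 'YYYY-MM-DD HH:MM:SS.mmm' UTC timestamp to local display text."""
    utc_dt = datetime.strptime(utc_text, "%Y-%m-%d %H:%M:%S.%f").replace(
        tzinfo=timezone.utc
    )
    local_dt = utc_dt.astimezone(local_tz)
    # %f yields six digits (microseconds); trim to milliseconds like the example.
    return local_dt.strftime("%Y-%m-%d %H:%M:%S.%f")[:-3]


print(utc_to_local_display("2018-09-20 07:46:15.107"))
```

A real system would use the machine's configured time zone, including daylight saving rules, rather than a fixed offset.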

Hi Dmitry,

Recommending your very cool and useful utility to someone I realized I did not find installation instructions, not here in this article, nor in the GitHub readme.

Could you please point me to them, or, in case they indeed do not exist currently, could you please provide them? This would benefit not only the person I'm sharing this with, but also other Community members who will want to use this in the future.

Tx!

What is the class name of your Web Service?

Is it also a Business Service? If so - what is the name of the BS component in the Production?

What URL are you using to access this WebService?

I have encountered this error when calling the WebService in a way that did not allow Ensemble to determine the name of the business service class it needs to use (this can be specified, for example, by using the CfgItem URL parameter).

Happy this helped.

In general (you might be aware of this, but for the benefit of the Community) a good resource about searching Messages (including Search Tables and SQL, and other options), is this Online Course.

The issue of indexing data previously processed (before the Search Table was defined for the Business Service/Operation) is addressable by calling the BuildIndex() method of the relevant SearchTable class.

For example:

Set sc=##class(YourPackage.YourSearchTableClass).BuildIndex()

If I understand your question correctly then I think you'd benefit from defining and using a Search Table.

See from the Documentation -

If you need to query specifically via SQL (and not using the Message Viewer) then see also this answer (though relating to HL7, but relevant in the same way to the XML case) regarding using the Search Table within a query.

Please note that by default, when a Persistent instance is saved with %Save(), this is done inside a transaction. Therefore, even if the database holding the class's storage globals is not journaled, these SETs (and KILLs) will still get journaled (to support rollback).

See this article for more details regarding avoiding journaling data. Specifically - the options of using CACHETEMP or turning off transactions for object filing.

Note if you are concerned with the audit logs generated every time the journal is turned off and on for your process - you could simply turn off that System Audit event - %System/%System/JournalChange. Take into consideration, of course, the drawback of not logging these kinds of events outside the context of this specific scenario.