Hi Cedric.

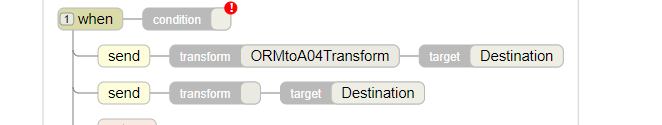

I think you should be able to achieve this with a routing rule along the lines of the one in the screenshot above (please ignore the blank condition in my example).

The rule should complete the first send (your transform to A04) and then send your source ORM message in the second send.
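To make the ordering concrete, here is a rough plain-Python model of a rule with two send actions. It is not engine code or any product's API. `SendAction`, `orm_to_a04`, `route` and the target names `A04Target`/`ORMTarget` are all hypothetical. The model also assumes that each send starts from the original incoming message, so the A04 transform in the first send does not alter what the second send forwards.

```python
from dataclasses import dataclass
from typing import Callable, Optional

# Hypothetical model: a message is a plain dict holding its type and a field.
Message = dict


@dataclass
class SendAction:
    target: str  # hypothetical downstream target name
    transform: Optional[Callable[[Message], Message]] = None  # None = forward as-is


def orm_to_a04(msg: Message) -> Message:
    # Hypothetical stand-in for the ORM -> ADT^A04 transformation.
    # Works on a copy so the source message is left untouched.
    out = dict(msg)
    out["type"] = "ADT^A04"
    return out


def route(msg: Message, actions: list[SendAction]) -> list[tuple[str, Message]]:
    """Run the send actions in order; each one starts from the original message."""
    sent = []
    for action in actions:
        payload = action.transform(msg) if action.transform else msg
        sent.append((action.target, payload))
    return sent


actions = [
    SendAction(target="A04Target", transform=orm_to_a04),  # first send: transformed copy
    SendAction(target="ORMTarget"),  # second send: source ORM, no transform
]
source = {"type": "ORM^O01", "PID": "12345"}
for target, payload in route(source, actions):
    print(target, payload["type"])
```

Running it prints `A04Target ADT^A04` followed by `ORMTarget ORM^O01`, which matches the order described above.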
