Hey Community!

We're happy to share the next video in the "Code to Care" series on our InterSystems Developers YouTube:

Artificial Intelligence (AI) is the simulation of human intelligence processes by machines, especially computer systems. These processes include learning (the acquisition of information and rules for using the information), reasoning (using rules to reach approximate or definite conclusions) and self-correction.

#North American Demo Showcase entry.

>> Answer the question below to be entered in the raffle!

⏯️ IRIS Agents

A Python framework for building and orchestrating AI agents on InterSystems IRIS.

🗣 Presenter: @Suprateem Banerjee, Sales Engineer at InterSystems

#North American Demo Showcase entry.

⏯️ Health Galaxy: AI-Enabling Healthcare Applications

Health Galaxy creates an AI access point on top of any FHIR server, bringing healthcare into the AI future that has become a reality for many other industries.

🗣 Presenter: @Zelong Wang, Sales Engineer at InterSystems
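The internals of Health Galaxy aren't described here, but the general idea of an AI access point over a FHIR server can be sketched. The example below is a minimal illustration, not Health Galaxy's implementation. It builds a standard FHIR search URL for a patient's most recent Observations, then flattens a returned Bundle into short text lines that could be passed to an LLM as context. The base URL, patient ID, sample Bundle and helper names are hypothetical. `patient`, `_sort` and `_count` are standard FHIR search parameters.

```python
from urllib.parse import urlencode


def observation_search_url(base_url: str, patient_id: str, count: int = 10) -> str:
    """Build a FHIR search URL for a patient's most recent Observations."""
    params = {"patient": patient_id, "_sort": "-date", "_count": count}
    return f"{base_url.rstrip('/')}/Observation?{urlencode(params)}"


def bundle_to_context(bundle: dict) -> list[str]:
    """Flatten each Observation in a FHIR Bundle into one line of text."""
    lines = []
    for entry in bundle.get("entry", []):
        obs = entry.get("resource", {})
        if obs.get("resourceType") != "Observation":
            continue  # skip other resource types in the Bundle
        code = obs.get("code", {})
        codings = code.get("coding") or [{}]
        name = code.get("text") or codings[0].get("display", "unknown")
        qty = obs.get("valueQuantity", {})
        value = f"{qty.get('value', '')} {qty.get('unit', '')}".strip()
        date = obs.get("effectiveDateTime", "unknown date")
        lines.append(f"{date} {name}: {value}")
    return lines


# Usage with a hypothetical server and a hand-written Bundle
url = observation_search_url("https://fhir.example.org/r4", "123")
bundle = {
    "resourceType": "Bundle",
    "entry": [
        {"resource": {
            "resourceType": "Observation",
            "code": {"text": "Body weight"},
            "valueQuantity": {"value": 72.5, "unit": "kg"},
            "effectiveDateTime": "2024-01-05",
        }},
    ],
}
print(url)
print(bundle_to_context(bundle))
```

Turning resources into plain text lines keeps the prompt small. A real access point would more likely hand the model structured JSON or expose searches as tools.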

#North American Demo Showcase entry.

We are using IRIS for Health to develop an agentic AI chatbot workflow that can interact with a patient using voice commands, reach out to an EHR or other system for context, and provide recommendations back.

Presenters:

🗣 @Vic Sun, Sales Engineer at InterSystems

🗣 @Brad Nissenbaum, Sales Engineer at InterSystems

🗣 Danielle Micciantuono, Clinical Solutions Specialist at InterSystems

#North American Demo Showcase entry.

⏯️ AI Assistants for the Unified Care Record Powered by Gemini

In this demo, you will see how Gemini works directly with FHIR data and how it leverages the harmonized dataset provided by InterSystems Unified Care Record. It also showcases multiple AI assistants serving different groups of users, such as clinicians and patients.

🗣 Presenter: @Simon Sha, Sales Architect at InterSystems

IRIS Audio Query is a full-stack application that transforms audio into a searchable knowledge base.

- `community/`
  - `app/`: FastAPI backend application
  - `baml_client/`: Generated BAML client code
  - `baml_src/`: BAML configuration files
  - `interop/`: IRIS interoperability components
  - `iris/`: IRIS class definitions
  - `models/`: Data models and schemas
  - `twelvelabs_client/`: TwelveLabs API client
  - `ui/`: React frontend application
  - `main.py`: FastAPI application entry point
  - `settings.py`: IRIS interoperability entry point

Today, coding assistants like Claude, GitHub Copilot and Cursor have transformed the way developers write code. However, these tools are limited by being isolated from the systems and data sources that developers work with daily. This limitation can be overcome through the Model Context Protocol (MCP), an open standard designed to connect AI assistants to external data sources and tools in a secure and standardized way.

In this review article, we'll explore the current state of MCP support within the InterSystems ecosystem.
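MCP messages are JSON-RPC 2.0. A server advertises its tools through `tools/list` and runs them through `tools/call`. The following is a minimal, hand-rolled sketch of that request/response shape. It assumes a single hypothetical tool, `get_class_source`, backed by an in-memory dict rather than a real IRIS connection. It deliberately skips the transport, the `initialize` handshake and capability negotiation. Treat it as an illustration of the message format, not a usable MCP server; real servers are normally built on one of the official MCP SDKs.

```python
import json

# Hypothetical stand-in for class definitions stored on an IRIS server
CLASS_SOURCES = {"Demo.Hello": "Class Demo.Hello Extends %RegisteredObject\n{\n}\n"}

# Tool metadata as advertised by tools/list (name, description, JSON Schema input)
TOOLS = [{
    "name": "get_class_source",
    "description": "Return the source code of an ObjectScript class",
    "inputSchema": {
        "type": "object",
        "properties": {"className": {"type": "string"}},
        "required": ["className"],
    },
}]


def _reply(req_id, result=None, error=None) -> str:
    """Wrap a result or an error in a JSON-RPC 2.0 response envelope."""
    msg = {"jsonrpc": "2.0", "id": req_id}
    if error is not None:
        msg["error"] = error
    else:
        msg["result"] = result
    return json.dumps(msg)


def _text_result(text: str, is_error: bool) -> dict:
    return {"content": [{"type": "text", "text": text}], "isError": is_error}


def handle(raw: str) -> str:
    """Answer one JSON-RPC request for tools/list or tools/call."""
    req = json.loads(raw)
    req_id, method = req.get("id"), req.get("method")
    params = req.get("params") or {}
    if method == "tools/list":
        return _reply(req_id, {"tools": TOOLS})
    if method == "tools/call":
        if params.get("name") != "get_class_source":
            # -32602 is the JSON-RPC "Invalid params" code
            return _reply(req_id, error={"code": -32602, "message": "Unknown tool"})
        class_name = (params.get("arguments") or {}).get("className", "")
        source = CLASS_SOURCES.get(class_name)
        if source is None:
            # Tool-level failures go back as a normal result flagged with isError
            return _reply(req_id, _text_result(f"Class {class_name} not found", True))
        return _reply(req_id, _text_result(source, False))
    # -32601 is the standard JSON-RPC "Method not found" code
    return _reply(req_id, error={"code": -32601, "message": f"Method not found: {method}"})


# Usage: what an AI assistant's client would send to read a class
call = json.dumps({
    "jsonrpc": "2.0", "id": 2, "method": "tools/call",
    "params": {"name": "get_class_source", "arguments": {"className": "Demo.Hello"}},
})
print(json.loads(handle(call))["result"]["content"][0]["text"])
```

The point of the standard is that the assistant only needs to understand this envelope. What sits behind the tool can be anything, including a live IRIS instance.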

#North American Demo Showcase entry.

⏯️ AI-Assisted Rare and Complex Disease Detection

This demo shows how InterSystems Health Gateway can be used to pull in outside patient records from networks like Carequality, CommonWell, and eHealth Exchange, creating a more complete longitudinal view in a clinical viewer. That full record is then analyzed by AI to surface potential rare disease considerations with clear reasoning, helping clinicians see patterns they might otherwise miss.

Presenters:

🗣 @Jesse Reffsin, Senior Sales Engineer at InterSystems

🗣 @Georgia Gans, Sales Engineer at InterSystems

🗣 @Annie Tong, Sales Engineer at InterSystems

Hi Developers,

We are happy to announce the new InterSystems online programming contest:

🏆 InterSystems Programming Contest: AI Agents for FHIR🏆

Duration: May 25 - June 14, 2026

Prize pool: $12,000

Many organizations that operate systems built on legacy technology stacks are facing significant support and maintenance complexities. They are eager to modernize, but the transition is usually prohibitively complex and expensive. These challenges apply to virtually any legacy tech, while InterSystems-based systems have their own unique nuances.

Key modernization challenges include:

For those of you who weren't at READY last week, you may have missed the exciting announcement that the Early Access Program for AI Hub is officially open. It was announced during an amazing demo from @Benjamin De Boe and @Jeff Fried, and I recommend catching up on that demo when the recording is released! I had the opportunity to play with AI Hub in advance, and thought I'd share an introduction with the community.

This article introduces SHAP explainability methods as a way to understand the reasons behind the predictions of black-box machine learning models. It also includes a simple Jupyter notebook that you can use and modify to gain hands-on experience with these concepts:

https://www.kaggle.com/code/jorgeivnjh/explainability-in-ml-models

https://github.com/JorgeIvanJH/Explainability-in-ML-models

We will leverage these concepts for a future implementation in our Continuous Training Pipeline: https://community.intersystems.com/post/complementing-iris-mlflow-continuous-training-ct-pipeline
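SHAP is built on Shapley values from cooperative game theory. A feature's attribution is its weighted average marginal contribution to the prediction over all subsets of the other features. The sketch below computes exact Shapley values by brute force for a tiny toy model, assuming that a single baseline input stands in for "feature absent". The `shap` library instead uses a background dataset plus fast, often model-specific approximations. This code only makes the definition concrete, and it is exponential in the number of features.

```python
from itertools import combinations
from math import factorial
from typing import Callable, Sequence


def shapley_values(f: Callable[[list], float], x: Sequence[float],
                   baseline: Sequence[float]) -> list[float]:
    """Exact Shapley values of f at x; absent features take their baseline value."""
    n = len(x)

    def value(subset: set) -> float:
        # Features in `subset` keep their real value, the rest fall back to baseline
        z = [x[i] if i in subset else baseline[i] for i in range(n)]
        return f(z)

    phi = [0.0] * n
    for i in range(n):
        others = [j for j in range(n) if j != i]
        for k in range(n):  # subset sizes 0 .. n-1
            weight = factorial(k) * factorial(n - k - 1) / factorial(n)
            for subset in combinations(others, k):
                s = set(subset)
                phi[i] += weight * (value(s | {i}) - value(s))
    return phi


def model(z):
    # Toy "black box": linear terms plus an interaction between features 0 and 1
    return 2 * z[0] + 3 * z[1] - z[2] + z[0] * z[1]


x, baseline = [1.0, 2.0, 3.0], [0.0, 0.0, 0.0]
phi = shapley_values(model, x, baseline)
print(phi)
print(sum(phi), model(x) - model(baseline))
```

The interaction term's contribution is split evenly between features 0 and 1. The attributions add up to `model(x) - model(baseline)`, which is the "efficiency" property that makes SHAP explanations additive.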

Ever since I started using IRIS, I have wondered whether we could build agents on IRIS. It seemed obvious: we have an Interoperability GUI that can trace messages, and an underlying object database that can store SQL data, vectors and even Base64-encoded images. We already have a Python SDK for interfacing with the platform from Python, but it isn't particularly optimized for developing agentic workflows. This was my attempt to create a Python SDK that leverages several parts of IRIS to support the development of agentic systems.
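The core of most agent frameworks is a loop. A model decides on the next action, the framework executes a tool, and the result is fed back until the model produces a final answer. The sketch below shows that loop in plain Python and records every step in a trace, loosely echoing the Interoperability message tracing mentioned above. It is not the IRIS Agents API. The `tool` decorator, `run_agent`, the `lookup_patient` tool, and the scripted planner that stands in for an LLM are all hypothetical.

```python
from typing import Callable

# Registry of callable tools, keyed by function name
TOOLS: dict[str, Callable[..., str]] = {}


def tool(fn: Callable[..., str]) -> Callable[..., str]:
    """Register a function so the agent can call it by name."""
    TOOLS[fn.__name__] = fn
    return fn


@tool
def lookup_patient(patient_id: str) -> str:
    # Hypothetical stand-in for a query against data stored in IRIS
    return {"p1": "Jane Doe, 54"}.get(patient_id, "unknown patient")


def run_agent(goal: str, planner: Callable[[list], dict], max_steps: int = 5):
    """Alternate between planner decisions and tool calls, recording a trace."""
    trace = [("user", goal)]
    for _ in range(max_steps):
        action = planner(trace)
        if action["type"] == "final":
            trace.append(("assistant", action["text"]))
            return action["text"], trace
        result = TOOLS[action["tool"]](**action["args"])
        trace.append((f"tool:{action['tool']}", result))
    raise RuntimeError("agent did not finish within max_steps")


def scripted_planner(trace: list) -> dict:
    # Stand-in for an LLM: call one tool, then answer from its result
    if len(trace) == 1:
        return {"type": "tool", "tool": "lookup_patient", "args": {"patient_id": "p1"}}
    return {"type": "final", "text": f"Patient found: {trace[-1][1]}"}


answer, trace = run_agent("Who is patient p1?", scripted_planner)
for step in trace:
    print(step)
```

Keeping the trace as plain tuples makes it easy to persist each step. In an IRIS-based design, those steps would be natural candidates for traced Interoperability messages.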

In my last post I talked about iris-copilot and the vision that, in the near future, any human language could become a programming language for any machine, system or product. Its agent runners were actually using the so-called third generation of agents. I want to keep and share a detailed note on what that is, partly for my own convenience. It has come up many times in recent conversations I was part of, so it is probably worth a note.

⏯ MCP Explained: Bridging the Gap between AI Agents and Tools

I'm starting to play more with AI enabled coding.

I've been using GitHub Copilot inside Visual Studio Code, which is very good at coming up with accurate and useful autocomplete suggestions (along with some utter rubbish, naturally).

For web development I'm starting to use Claude Code in VS Code to help create websites and integrations. I want to see how it can help with IRIS development.

However, I can't get Claude to read any IRIS code directly, because I'm connected to my server via isfs server connections.

I'm a huge sci-fi fan, and while I'm fully on board the Star Wars train (apologies to my fellow Trekkies!), I've always appreciated the classic Star Trek episodes from my childhood. The diverse crew of the USS Enterprise, each member mastering a unique role, is a perfect metaphor for understanding AI agents and their power in projects like Facilis. So, let's embark on an intergalactic mission, leverage AI as our ship's crew, and boldly go where no man has gone before.

Customer support questions span structured data (orders, products 🗃️), unstructured knowledge (docs/FAQs 📚), and live systems (shipping updates 🚚). In this post we’ll ship a compact AI agent that handles all three—using:

Hi, Community!

Here's another way AI can speed up your processes—take a look! 👇

Generating Transformation Descriptions with the DTL Explainer

We didn't start with a big AI strategy.

We had a legacy InterSystems Caché 2018 application, a lot of old business logic, and a practical need: build a new UI and improve code that had been running for years. At first, I thought an AI coding agent would help only with a small part of the work. Maybe some boilerplate, some REST work around the system, and a bit of help reading old ObjectScript.

In practice, it became much more than that.

Over the past year there have been a few DC articles offering MCP servers designed to connect to IRIS and help the AI features of VS Code and its cousins do a better job. For example:

Why do we need this?

Lack of Compiled Context: AI tools only see source code; they don't know what the final compiled routine looks like.

Macro Hallucination: Because AI doesn't see our #include files or system macros, it often makes them up, wasting time during debugging.

The Documentation Gap: Deep logic optimization often requires understanding internal macros that aren't fully covered in public documentation.

Manual Overhead: Currently, the only way to fix this is to manually use the IRIS VS Code extension to find the "truth" in the routine.
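Short of giving the AI the real compiled context, even a simple check can catch invented macros before debugging starts. The sketch below is a hypothetical helper, not part of any existing extension or MCP server. It collects macro names declared with `#define` or `#def1arg` in include-file text that you supply, then reports any `$$$Name` reference in a piece of ObjectScript that none of those files define. It does not follow `#include` directives or expand macros. System macros therefore only count as known if you pass in the text of their include files as well.

```python
import re

# Names declared with #define or #def1arg at the start of a line
DEFINE_RE = re.compile(r"^\s*#(?:define|def1arg)\s+([%\w]+)", re.MULTILINE)
# Macro references such as $$$OK or $$$ISERR(sc)
USE_RE = re.compile(r"\$\$\$([%\w]+)")


def undefined_macros(code: str, include_sources: list[str]) -> list[str]:
    """Return macro names used in `code` that no supplied include text defines."""
    known = set()
    for text in include_sources:
        known.update(DEFINE_RE.findall(text))
    used = set(USE_RE.findall(code))
    return sorted(used - known)


# Usage with a hand-written include file and a snippet containing an invented macro
include = "#define OK 1\n#define ISERR(%sc) ('%sc)\n"
snippet = "Set sc = $$$OK\nIf $$$ISERR(sc) Quit sc\nSet x = $$$MadeUpMacro(sc)\n"
print(undefined_macros(snippet, [include]))
```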

A Continuous Training (CT) pipeline formalises a Machine Learning (ML) model developed through data science experimentation, using the data available at a given point in time. It prepares the model for deployment while enabling autonomous updates as new data becomes available, along with robust performance monitoring, logging, and model registry capabilities for auditing purposes.

InterSystems IRIS already provides nearly all the components required to support such a pipeline. However, one key element is missing: a standardised tool for model registry.
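Here is a minimal in-memory sketch of what a model registry tracks:

- numbered versions per model;
- the metrics recorded at registration;
- a stage that can be promoted, with the previous holder of that stage archived;
- an audit log of every change.

The `ModelRegistry` class, the model name and the artifact paths are hypothetical. The stage names are only loosely modeled on the stages MLflow's registry has traditionally used. A real registry would persist all of this, for example in IRIS tables.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Optional


def _now() -> str:
    return datetime.now(timezone.utc).isoformat()


@dataclass
class ModelVersion:
    version: int
    artifact_uri: str
    metrics: dict
    stage: str = "None"
    created_at: str = field(default_factory=_now)


class ModelRegistry:
    """Hypothetical in-memory registry: versions, stages and an audit trail."""

    def __init__(self) -> None:
        self._models: dict[str, list[ModelVersion]] = {}
        self.audit_log: list[tuple[str, str, str, int]] = []

    def _log(self, action: str, name: str, version: int) -> None:
        self.audit_log.append((_now(), action, name, version))

    def register(self, name: str, artifact_uri: str, metrics: dict) -> ModelVersion:
        versions = self._models.setdefault(name, [])
        mv = ModelVersion(len(versions) + 1, artifact_uri, dict(metrics))
        versions.append(mv)
        self._log("register", name, mv.version)
        return mv

    def promote(self, name: str, version: int, stage: str = "Production") -> None:
        for mv in self._models[name]:
            if mv.stage == stage and mv.version != version:
                mv.stage = "Archived"  # only one version holds a stage at a time
                self._log(f"archive from {stage}", name, mv.version)
        self._models[name][version - 1].stage = stage
        self._log(f"promote to {stage}", name, version)

    def get(self, name: str, stage: str = "Production") -> Optional[ModelVersion]:
        return next((mv for mv in self._models.get(name, []) if mv.stage == stage), None)


# Usage: two training runs, the second replacing the first in production
reg = ModelRegistry()
reg.register("readmission-risk", "runs/1/model.pkl", {"auc": 0.81})
reg.register("readmission-risk", "runs/2/model.pkl", {"auc": 0.84})
reg.promote("readmission-risk", 1)
reg.promote("readmission-risk", 2)
print(reg.get("readmission-risk"))
for entry in reg.audit_log:
    print(entry)
```

In a CT pipeline, each automated retraining would call `register`. Promotion could then be gated on the recorded metrics, and the audit log answers "which model was live when?".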

Hi folks,

One of the things I've been thinking about recently: how strongly AI is changing my work as an ObjectScript developer right now! Yeah, yeah, I know I'm not very fast; it should have happened two or three years ago... But only now have I realized that I no longer spend my whole workday writing code like I used to.

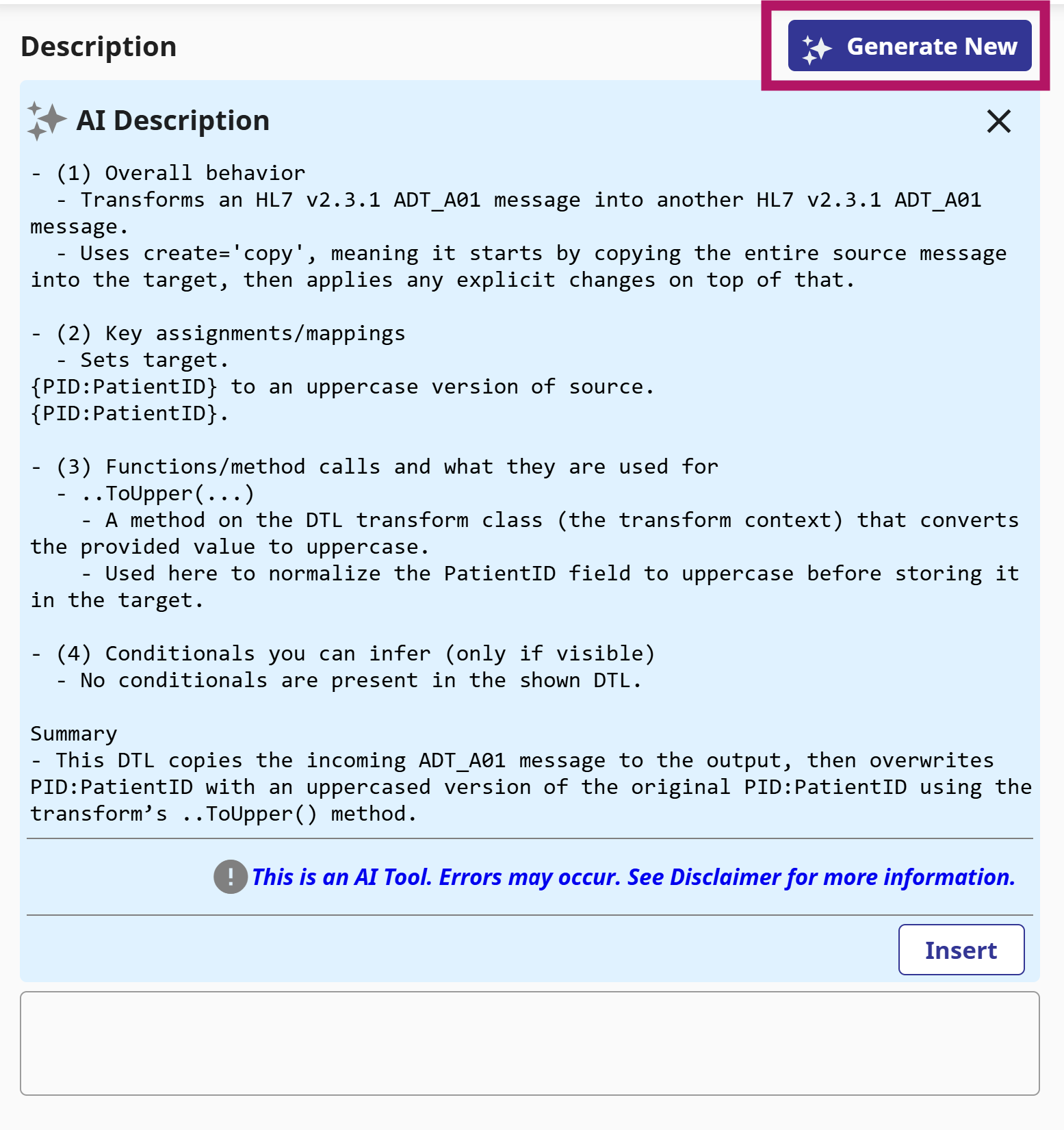

v2026.1 was just released as GA, and one of the features I'm looking forward to using is the DTL Explainer.

It lets you take a Data Transformation and, with the click of a button, get a human-readable description of it (which you can also use as the basis for the DTL Description).

For complex DTLs, especially ones you didn't write yourself or wrote a long time ago, this gives you a quick, clear understanding of what the transformation does.

Hi Community,

Enjoy the new video on InterSystems Developers YouTube:

What is Unstructured Data?

Unstructured data refers to information lacking a predefined data model or organization. In contrast to structured data found in databases with clear structures (e.g., tables and fields), unstructured data lacks a fixed schema. This type of data includes text, images, videos, audio files, social media posts, emails, and more.

Why Are Insights from Unstructured Data Important?

According to an IDC (International Data Corporation) report, 80% of worldwide data is projected to be unstructured by 2025, posing a significant concern for 95% of businesses.

In the previous article, we looked in detail at Connectors, which let users upload a file, convert it into embeddings, and store those embeddings in the IRIS database. In this article, we'll explore the different retrieval options that IRIS AI Studio offers: Semantic Search, Chat, Recommender and Similarity.
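Semantic Search and Similarity both come down to ranking stored embeddings by how close they are to a query embedding. The sketch below shows that ranking with cosine similarity over a few hand-made 3-dimensional vectors. It is not IRIS AI Studio's code. The document IDs and vectors are placeholders for what an embedding model and the Connectors step would produce. Inside IRIS, the same ranking can be pushed into SQL with the `VECTOR_COSINE` function instead of being done in Python.

```python
import math


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity of two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def semantic_search(query_vec: list[float], docs: dict[str, list[float]],
                    k: int = 2) -> list[tuple[str, float]]:
    """Return the k document IDs most similar to the query, with their scores."""
    scored = [(doc_id, cosine(query_vec, vec)) for doc_id, vec in docs.items()]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:k]


# Placeholder embeddings; real ones would come from an embedding model
docs = {
    "discharge-summary": [0.9, 0.1, 0.0],
    "billing-faq": [0.0, 0.2, 0.9],
    "medication-guide": [0.7, 0.6, 0.1],
}
results = semantic_search([1.0, 0.2, 0.0], docs)
print(results)
```

Chat and Recommender can be layered on top of the same step. Chat passes the top-ranked chunks to an LLM as context, while a recommender uses an existing item's embedding as the query.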