Maximum Global Size?

Dear people,

I have (really) spent hours trying to find the maximum size a Global is allowed to be (for Windows, if that matters). All I seem to run into are database sizes (derived from a maximum number of blocks and the block size), but I refuse to believe that is correct, because it seems too small to be realistic.

It is undoubtedly my fault that I don't understand, or misread, the concepts of "database" vs. Globals. I picture files as such, while one set of functional Globals in one file (?!) could make up a database. Hence it is one file after all, and that file (block structure) cannot be larger than 17GB or whatever it is that I (mis)calculate. Anyway, even if one Global were allowed to be 17GB, that does not exactly feel like "the largest" databases, which is what I see advertised?
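The size arithmetic described above (a maximum block count times the block size) can be sketched as follows. This is a minimal, generic illustration: the block count of 2**32 and the 8 KiB block size are assumed values chosen for the example, not figures taken from this thread, so the real limits should be checked against the InterSystems documentation.

```python
def capacity_bytes(max_blocks: int, block_size: int) -> int:
    """Upper bound for one database file: addressable blocks times block size."""
    return max_blocks * block_size


def human(n: int) -> str:
    """Format a byte count using binary units (1 KiB = 1024 bytes)."""
    value = float(n)
    for unit in ("B", "KiB", "MiB", "GiB", "TiB"):
        if value < 1024:
            return f"{value:.1f} {unit}"
        value /= 1024
    return f"{value:.1f} PiB"


if __name__ == "__main__":
    MAX_BLOCKS = 2**32  # assumed block-address limit, for illustration only
    BLOCK_SIZE = 8192   # assumed 8 KiB block size, for illustration only
    print(human(capacity_bytes(MAX_BLOCKS, BLOCK_SIZE)))  # 32.0 TiB
```

Plugging in a different block count or block size shows how strongly the result depends on both inputs, and how a much smaller figure can fall out of the same formula.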

The reason I would like to know is that I'm heading for a migration from another DBMS with several times that size per table alone, across ~1500 tables (not all as large), and I hope this is possible within the limits. There is still some hope, because what we currently use (Sybase) is not sparse, but I cannot know the net effect of that in advance.

N.b.: I read about a possible "spread" of Globals over more than one database, but at this moment that is beyond my comprehension (as a possible solution).

Otherwise, if necessary, you can talk back in technical terms; I am from the MUMPS era, when multiway balanced trees were the best "we" had ever created, and I could understand the implications of, e.g., too many depth levels and, from there, a limit. That was 40 years or so ago now, and I am more than eager to come back!
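To put the depth concern in numbers: in a generic multiway balanced tree, each extra pointer level multiplies the number of reachable data blocks by the fan-out, so depth grows only logarithmically with size. The sketch below assumes a hypothetical fan-out of 200 pointers per block; it is a textbook B-tree model, not a description of how IRIS actually lays out its global blocks.

```python
def levels_needed(data_blocks: int, fanout: int) -> int:
    """Pointer levels needed above the data level so that a single top block
    can reach every data block, in a generic balanced multiway tree."""
    if data_blocks < 1 or fanout < 2:
        raise ValueError("need data_blocks >= 1 and fanout >= 2")
    levels = 0
    reach = 1  # data blocks reachable from one block at the current height
    while reach < data_blocks:
        reach *= fanout  # each added pointer level multiplies reach by the fan-out
        levels += 1
    return levels


if __name__ == "__main__":
    FANOUT = 200  # hypothetical pointers per block, for illustration only
    for blocks in (10**3, 10**6, 10**9, 2**32):
        print(f"{blocks:>13,} data blocks -> {levels_needed(blocks, FANOUT)} pointer levels")
```

With these assumed numbers, going from a thousand data blocks to about four billion adds only three pointer levels, which is why tree depth is rarely the binding limit.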

Regards and thanks !

Peter

Comments

Globals are stored in the database and they are limited only by the size of that database.

I'm not sure whether the size of the database has any limit at all, but what I do know is that I have database files of up to 10 terabytes each. The only reason they are not larger is that we split the data into smaller files for external reasons, such as filesystems; smaller files are also simply easier to handle as files.

That looks sufficiently clear !

Thank you Dmitry.

Hello Peter,

Like you, I have a 40+ year history with MUMPS, dating back to before DSM-11.

Not every implementation of global storage was efficient (no names, no finger-pointing).

What you see today in IRIS is the result of a continuous effort to improve performance and storage efficiency.

In the past, the size of a physical disk was the limit. Today, with virtual storage systems, the limit is set only by the file system used. This is a fact InterSystems has lived with since "ever".

A word concerning global efficiency:

Multiple experiences over the years showed me that space consumption with IRIS and its predecessor Caché was always significantly lower than with the various source DBs I was invited to migrate from. With Sybase, the difference was even more impressive than with others.

The immediate consequence of this highly efficient storage technology is a gain in speed.

Even with the most modern technologies, there is still a transfer between persistent storage (a.k.a. disk) and memory, and the less you have to transfer, the faster you are.

Finally, welcome to this society!

Robert

Thank you so much, Robert. Also for the warm welcome.

Over time I will need to learn what is and what is not off-topic here, and at this moment I am not sure whether it is a good idea to create a thread about the task I have set myself. It could be interesting, or maybe not at all. ... So let me type ahead a little here ...

Let me at least get across that my explicit specialism is "performance" as such, and it was from there that I (with my company) implemented the first ERP system on PCs and Novell, back in 1987. The servers were two 386s @ 40 MHz, one for the table files and one for the index files. The PCs were XTs @ 12 MHz. 120 concurrent users.

The somewhat sad thing was (and still is) that I left behind my great MUMPS application at Shell/NAM, which served 80 concurrent users on a poor PDP-11 (the VAX had just seen the light when I left). It already contained messaging between users/tasks through a self-made protocol. I saw no future in MUMPS, and nobody would be able to read the code anyway ...

When I started the ERP software, I taught (designed?) myself up to some 9th normal form (anyone? :-)), and from there the most highly normalized database emerged (now with 1500 centralized entities, plus 500 or so local ones).

Believe it or not, the MS-DOS code in Foxbase and its DBF stuff has never been changed "manually" in the 35 years it has existed. Yet it is now all Windows, with Advantage Database Server (from Sybase) as the database. All the code changes needed to keep up to snuff, technically as well as functionally, were made by generating the framework (which I tend to call an OS). The functional programs themselves didn't even change (except for interpreting DB commands).

The system is so large that it is impossible to "rewrite" it. No low-code will help, and any perceived framework will only be counterproductive. So my conclusion is that the framework itself has to spring from (re-)generation, and while at it, the remaining ~15,000 functions (think 15,000 forms) can be taken along just the same.

Since MS bought Fox Software (in order to let it die) and SAP bought Sybase (in order to let it die), there is not much left but to look for tools that won't be bought. Is InterSystems one? ... I'm an opportunistic guy.

Lastly for now, picture my quest for any coding environment that allows macro substitution. Really, once you're into that (for me this started with MUMPS back in the day), you won't want anything else (Foxbase and dBase-like languages could do it too). But beware the moment you have to leave (like my current situation) and can only re-generate. Then no real compiler is going to help you. And so, for now, I love the existence of InterSystems.

But we will see.

If anyone has hints, perhaps contacts, maybe higher-level objectives, I will be listening (here or privately). The idea could be to eventually outsource the lot; the rest is up to your imagination and mine.

And happy to answer questions of course! (but please bear with my English)

Peter

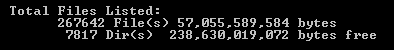

PS: The picture shows some redundancy because of the various stacked layers of generated code, such as native Visual FoxPro vs. ADS, DOS vs. Windows, source vs. executables, and a version that interprets languages. So divide by 10 to be on the safe side.

I have a system on Caché which holds about 100TB of data, with a couple of thousand concurrent users. It's an ECM system which, in fact, has had no developer support for some time but can still be modified, since it's a kind of low-code system: all the forms can be configured through the UI, as can all the processes and document conventions, and it has integrations with many other systems. So it can probably serve as proof that InterSystems is good enough for such cases.

Thank you again, Dmitry.

Yes, I think that is telling. For fun, and to get a bit acquainted with the community: I have a similar story about one of our customers. You may recognize it: the largest company (in this case Cargill) outsourced support for one of its operating companies into the sky, and it took ten years before we were able to pass all the security requirements needed even to perform an upgrade of their system. 10 years.

In those 10 years, our system "managed" not to have a single issue. If one had occurred, don't ask me what would have happened to that company, which is 100% dependent on this software (that's life with ERP software).

And so it is indeed essential that none of its "partners" (we ourselves, an InterSystems partner (?), InterSystems themselves) bail out.

Or be bought out. ;-)

--------------------

If this is too much chit-chat for a decent community, I trust someone will tell me.

Hi Peter!

Thanks for sharing! BTW, do you want to publish your company on the Partner Directory?

I have just checked our IRIS-based asone.global multitenancy beyond-ERP system: we now have 19,966 classes.

| # | Timestamp |
|---|---|
| 19964 | 2020-06-04 16:56:50 |
| 19965 | 2020-08-04 12:40:09 |
| 19966 | 2019-08-02 15:43:18 |

Cool.

Do you autogenerate?

Or did you actually write 20,000 classes?

Genuinely curious.

Maybe it is easy for someone to provide a link to screenshots of "forms" created by means of the framework?

Or a link to a website that uses it ? (content unimportant)

I just wonder whether there are similarities. It would not be a showstopper (depending on how it looks, haha), but I am just curious.

Hello Peter,

Welcome back to the community.

I also have almost 30 years (since 1991) of experience with InterSystems technology, starting with PDP-11, VAX & Alpha machines (does anyone else miss the VMS OS like I do?) with DSM, then the first PCs with MSM, and then (1999) came Caché, which became IRIS. A very long, interesting and challenging road!

I do remember customers in 1992 with 50-100 users running MSM on 286 machines, and it was fast (!). In those days, when disks were slow, small & expensive, developers used to define globals in a very compact & normalized way, so applications flew on that platform.

Today, when disks (as Robert said) are practically without limits, fast & relatively cheap, some "new" developers tend to neglect correct DB design, which is noticeable on big databases of a few TBs.

You can get a significant improvement when these are optimized (this has been part of my job in the past few years). And this is not just about the data structure, but also about coding in a correct, optimized way.

In my opinion, the multi-level tree of blocks that holds a global is limited by the DB itself, which is limited by the OS file system. With global mapping and sharding (a newer technology), even petabytes of data could easily be held, and held very efficiently, as this technology always has been.