Load a recipe dataset with Foreign Tables and analyze it using LLMs with Embedded Python (Langchain + OpenAI)

We have a yummy dataset of recipes written by multiple Reddit users. However, most of the information is free text, such as the title or description of a post. Let's find out how we can easily load the dataset, extract some features, and analyze it using an OpenAI large language model from Embedded Python with the LangChain framework.

## Loading the dataset

First things first: do we need to load the dataset, or can we just connect to it?

There are different ways to achieve this: for instance, the CSV Record Mapper, which you can use in an interoperability production, or even nice OpenExchange applications like csvgen.

We will use Foreign Tables, a very useful capability that projects data physically stored elsewhere into IRIS SQL. We can use it to get a first look at the dataset files.

We create a Foreign Server:

CREATE FOREIGN SERVER dataset FOREIGN DATA WRAPPER CSV HOST '/app/data/'

And then a Foreign Table that connects to the CSV file:

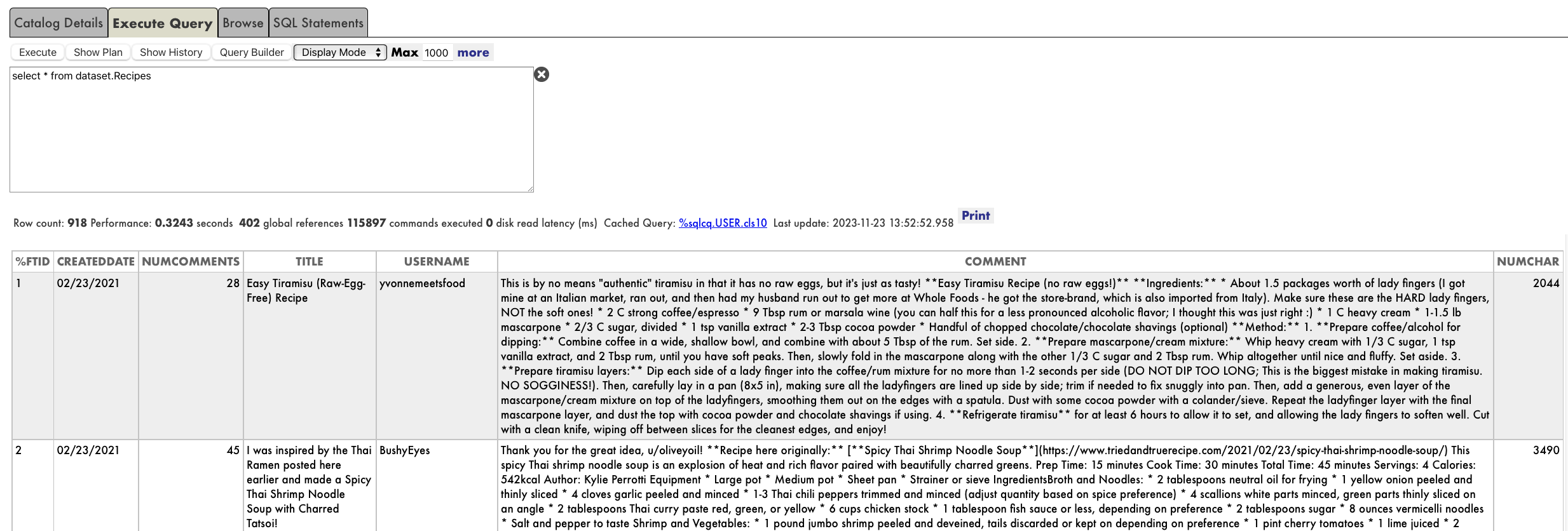

CREATE FOREIGN TABLE dataset.Recipes (
    CREATEDDATE DATE,
    NUMCOMMENTS INTEGER,
    TITLE VARCHAR,
    USERNAME VARCHAR,
    COMMENT VARCHAR,
    NUMCHAR INTEGER
) SERVER dataset FILE 'Recipes.csv' USING
{
    "from": {
        "file": {
            "skip": 1
        }
    }
}

And that's it. We can immediately run SQL queries on dataset.Recipes:
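For instance, `SELECT TOP 5 TITLE, USERNAME FROM dataset.Recipes` returns rows straight from the CSV file. As a rough mental model of what the projection does (this is only a stdlib Python analogy, not how IRIS implements Foreign Tables), the sketch below reads CSV text, skips the first line like `"skip": 1`, and labels each value with the column names declared in the table. The sample rows and the header names inside `SAMPLE_CSV` are made up for illustration.

```python
import csv
import io

# Column names as declared in the CREATE FOREIGN TABLE statement.
COLUMNS = ["CREATEDDATE", "NUMCOMMENTS", "TITLE", "USERNAME", "COMMENT", "NUMCHAR"]

# Made-up content standing in for Recipes.csv (not real dataset rows).
SAMPLE_CSV = """date,num_comments,title,user,comment,n_char
2021-03-01,12,Sample Pasta,user_a,"Boil pasta, add sauce",21
2021-03-02,3,Sample Soup,user_b,Simmer for an hour,18
"""


def project_rows(text, skip=1):
    """Yield one dict per CSV line, ignoring the first `skip` lines."""
    reader = csv.reader(io.StringIO(text))
    for _ in range(skip):
        next(reader, None)  # drop the header line(s), like "skip": 1
    for row in reader:
        # Values stay as strings here; a real table also applies column types.
        yield dict(zip(COLUMNS, row))


rows = list(project_rows(SAMPLE_CSV))
for row in rows:
    print(row["TITLE"], "by", row["USERNAME"])
```

Note that the column names come from the table definition, not from the file header, which is why the header line has to be skipped.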

## What data do we need?

The dataset is interesting and we are hungry. However, if we want to decide on a recipe to cook, we will need some more information to analyze.

We are going to work with two persistent classes (tables):

- yummy.data.Recipe: a class containing the title and description of the recipe and some other properties that we want to extract and analyze (e.g. Score, Difficulty, Ingredients, CuisineType, PreparationTime)

- yummy.data.RecipeHistory: a simple class for logging what we are doing with the recipe

We can now load our yummy.data* tables with the contents from the dataset:

do ##class(yummy.Utils).LoadDataset()

It looks good, but we still need to find out how we are going to generate data for the Score, Difficulty, Ingredients, PreparationTime and CuisineType fields.

## Analyze the recipes

We want to process each recipe title and description and:

- Extract information like Difficulty, Ingredients, CuisineType, etc.

- Build our own score based on our criteria so we can decide what we want to cook.

We are going to use the following:

- yummy.analysis.Analysis - a generic analysis structure we can re-use in case we want to build more analyses.

- yummy.analysis.SimpleOpenAI - an analysis that uses Embedded Python + LangChain framework + an OpenAI LLM.

LLMs (large language models) are a great tool for processing natural language.

LangChain is ready to work in Python, so we can use it directly in InterSystems IRIS using Embedded Python.

The full SimpleOpenAI class looks like this:

/// Simple OpenAI analysis for recipes
Class yummy.analysis.SimpleOpenAI Extends Analysis
{

Property CuisineType As %String;

Property PreparationTime As %Integer;

Property Difficulty As %String;

Property Ingredients As %String;

/// Run
/// You can try this from a terminal:
/// set a = ##class(yummy.analysis.SimpleOpenAI).%New(##class(yummy.data.Recipe).%OpenId(8))
/// do a.Run()
/// zwrite a
Method Run()
{
    try {
        do ..RunPythonAnalysis()

        set reasons = ""

        // my favourite cuisine types
        // (guard against an empty value: the contains operator "[" is true for an empty string)
        if (..CuisineType '= "") && ("spanish,french,portuguese,italian,korean,japanese"[..CuisineType) {
            set ..Score = ..Score + 2
            set reasons = reasons_$lb("It seems to be a "_..CuisineType_" recipe!")
        }

        // don't want to spend whole day cooking :)
        // (an unknown preparation time is left empty and should not earn the bonus)
        if (..PreparationTime '= "") && (..PreparationTime < 120) {
            set ..Score = ..Score + 1
            set reasons = reasons_$lb("You don't need too much time to prepare it")
        }

        // bonus for fav ingredients!
        set favIngredients = $listbuild("kimchi", "truffle", "squid")
        for i=1:1:$listlength(favIngredients) {
            set favIngred = $listget(favIngredients, i)
            if ..Ingredients[favIngred {
                set ..Score = ..Score + 1
                set reasons = reasons_$lb("Favourite ingredient found: "_favIngred)
            }
        }

        // build a readable explanation of the score
        set ..Reason = $listtostring(reasons, ". ")

    } catch ex {
        throw ex
    }
}

/// Update recipe with analysis results
Method UpdateRecipe()
{
    try {
        // call parent class implementation first
        do ##super()

        // add specific OpenAI analysis results
        set ..Recipe.Ingredients = ..Ingredients
        set ..Recipe.PreparationTime = ..PreparationTime
        set ..Recipe.Difficulty = ..Difficulty
        set ..Recipe.CuisineType = ..CuisineType

    } catch ex {
        throw ex
    }
}

/// Run analysis using embedded Python + Langchain
/// do ##class(yummy.analysis.SimpleOpenAI).%New(##class(yummy.data.Recipe).%OpenId(8)).RunPythonAnalysis(1)
Method RunPythonAnalysis(debug As %Boolean = 0) [ Language = python ]
{
    # load OpenAI APIKEY from env
    import os
    from dotenv import load_dotenv, find_dotenv
    _ = load_dotenv('/app/.env')

    # account for deprecation of LLM model
    import datetime
    current_date = datetime.datetime.now().date()
    # date after which the model should be set to "gpt-3.5-turbo"
    target_date = datetime.date(2024, 6, 12)
    # set the model depending on the current date
    if current_date > target_date:
        llm_model = "gpt-3.5-turbo"
    else:
        llm_model = "gpt-3.5-turbo-0301"

    from langchain.chat_models import ChatOpenAI
    from langchain.prompts import ChatPromptTemplate
    from langchain.chains import LLMChain

    from langchain.output_parsers import ResponseSchema
    from langchain.output_parsers import StructuredOutputParser

    # init llm model
    llm = ChatOpenAI(temperature=0.0, model=llm_model)

    # prepare the responses we need
    cuisine_type_schema = ResponseSchema(
        name="cuisine_type",
        description="What is the cuisine type for the recipe? \
                     Answer in 1 word max in lowercase"
    )
    preparation_time_schema = ResponseSchema(
        name="preparation_time",
        description="How much time in minutes do I need to prepare the recipe?\
                     Anwer with an integer number, or null if unknown",
        type="integer",
    )
    difficulty_schema = ResponseSchema(
        name="difficulty",
        description="How difficult is this recipe?\
                     Answer with one of these values: easy, normal, hard, very-hard"
    )
    ingredients_schema = ResponseSchema(
        name="ingredients",
        description="Give me a comma separated list of ingredients in lowercase or empty if unknown"
    )
    response_schemas = [cuisine_type_schema, preparation_time_schema, difficulty_schema, ingredients_schema]

    # get format instructions from responses
    output_parser = StructuredOutputParser.from_response_schemas(response_schemas)
    format_instructions = output_parser.get_format_instructions()

    analysis_template = """\
    Interprete and evaluate a recipe which title is: {title}
    and the description is: {description}
    {format_instructions}
    """
    prompt = ChatPromptTemplate.from_template(template=analysis_template)

    messages = prompt.format_messages(title=self.Recipe.Title, description=self.Recipe.Description, format_instructions=format_instructions)
    response = llm(messages)

    if debug:
        print("======ACTUAL PROMPT")
        print(messages[0].content)
        print("======RESPONSE")
        print(response.content)

    # populate analysis with results
    output_dict = output_parser.parse(response.content)

    self.CuisineType = output_dict['cuisine_type']
    self.Difficulty = output_dict['difficulty']
    self.Ingredients = output_dict['ingredients']
    if type(output_dict['preparation_time']) == int:
        self.PreparationTime = output_dict['preparation_time']

    return 1
}

}

The RunPythonAnalysis method is where the OpenAI stuff happens :). You can run it directly from your terminal for a given recipe:

do ##class(yummy.analysis.SimpleOpenAI).%New(##class(yummy.data.Recipe).%OpenId(12)).RunPythonAnalysis(1)

We will get an output like this:

USER>do ##class(yummy.analysis.SimpleOpenAI).%New(##class(yummy.data.Recipe).%OpenId(12)).RunPythonAnalysis(1)

======ACTUAL PROMPT

Interprete and evaluate a recipe which title is: Folded Sushi - Alaska Roll

and the description is: Craving for some sushi but don't have a sushi roller? Try this easy version instead. It's super easy yet equally delicious!

[Video Recipe](https://www.youtube.com/watch?v=1LJPS1lOHSM)

# Ingredients

Serving Size: \~5 sandwiches

* 1 cup of sushi rice

* 3/4 cups + 2 1/2 tbsp of water

* A small piece of konbu (kelp)

* 2 tbsp of rice vinegar

* 1 tbsp of sugar

* 1 tsp of salt

* 2 avocado

* 6 imitation crab sticks

* 2 tbsp of Japanese mayo

* 1/2 lb of salmon

# Recipe

* Place 1 cup of sushi rice into a mixing bowl and wash the rice at least 2 times or until the water becomes clear. Then transfer the rice into the rice cooker and add a small piece of kelp along with 3/4 cups plus 2 1/2 tbsp of water. Cook according to your rice cookers instruction.

* Combine 2 tbsp rice vinegar, 1 tbsp sugar, and 1 tsp salt in a medium bowl. Mix until everything is well combined.

* After the rice is cooked, remove the kelp and immediately scoop all the rice into the medium bowl with the vinegar and mix it well using the rice spatula. Make sure to use the cut motion to mix the rice to avoid mashing them. After thats done, cover it with a kitchen towel and let it cool down to room temperature.

* Cut the top of 1 avocado, then slice into the center of the avocado and rotate it along your knife. Then take each half of the avocado and twist. Afterward, take the side with the pit and carefully chop into the pit and twist to remove it. Then, using your hand, remove the peel. Repeat these steps with the other avocado. Dont forget to clean up your work station to give yourself more space. Then, place each half of the avocado facing down and thinly slice them. Once theyre sliced, slowly spread them out. Once thats done, set it aside.

* Remove the wrapper from each crab stick. Then, using your hand, peel the crab sticks vertically to get strings of crab sticks. Once all the crab sticks are peeled, rotate them sideways and chop them into small pieces, then place them in a bowl along with 2 tbsp of Japanese mayo and mix until everything is well mixed.

* Place a sharp knife at an angle and thinly slice against the grain. The thickness of the cut depends on your preference. Just make sure that all the pieces are similar in thickness.

* Grab a piece of seaweed wrap. Using a kitchen scissor, start cutting at the halfway point of seaweed wrap and cut until youre a little bit past the center of the piece. Rotate the piece vertically and start building. Dip your hand in some water to help with the sushi rice. Take a handful of sushi rice and spread it around the upper left hand quadrant of the seaweed wrap. Then carefully place a couple slices of salmon on the top right quadrant. Then place a couple slices of avocado on the bottom right quadrant. And finish it off with a couple of tsp of crab salad on the bottom left quadrant. Then, fold the top right quadrant into the bottom right quadrant, then continue by folding it into the bottom left quadrant. Well finish off the folding by folding the top left quadrant onto the rest of the sandwich. Afterward, place a piece of plastic wrap on top, cut it half, add a couple pieces of ginger and wasabi, and there you have it.

The output should be a markdown code snippet formatted in the following schema, including the leading and trailing "```json" and "```":

json

{

"cuisine_type": string // What is the cuisine type for the recipe? Answer in 1 word max in lowercase

"preparation_time": integer // How much time in minutes do I need to prepare the recipe? Anwer with an integer number, or null if unknown

"difficulty": string // How difficult is this recipe? Answer with one of these values: easy, normal, hard, very-hard

"ingredients": string // Give me a comma separated list of ingredients in lowercase or empty if unknown

}

======RESPONSE

json

{

"cuisine_type": "japanese",

"preparation_time": 30,

"difficulty": "easy",

"ingredients": "sushi rice, water, konbu, rice vinegar, sugar, salt, avocado, imitation crab sticks, japanese mayo, salmon"

}
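The reply above arrives as a markdown JSON snippet, which the output parser then turns into a Python dictionary. As a minimal sketch of that step, the code below uses only the standard library; it is not LangChain's implementation, and the names `parse_json_snippet` and `to_minutes` are hypothetical helpers introduced here for illustration.

```python
import json
import re

FENCE = "`" * 3  # three backticks, built this way to keep this listing readable
EXPECTED_KEYS = ("cuisine_type", "preparation_time", "difficulty", "ingredients")


def parse_json_snippet(text):
    """Decode the JSON object inside a fenced markdown snippet (or raw JSON)."""
    match = re.search(FENCE + r"(?:json)?\s*(.*?)" + FENCE, text, re.DOTALL)
    payload = match.group(1) if match else text
    data = json.loads(payload.strip())
    missing = [key for key in EXPECTED_KEYS if key not in data]
    if missing:
        raise ValueError(f"missing keys in reply: {missing}")
    return data


def to_minutes(value):
    """Keep the value only if it is a real integer; null or text becomes None."""
    if isinstance(value, int) and not isinstance(value, bool):
        return value
    return None


# Rebuild a reply shaped like the one in the debug output.
reply = FENCE + "json\n" + json.dumps({
    "cuisine_type": "japanese",
    "preparation_time": 30,
    "difficulty": "easy",
    "ingredients": "sushi rice, water, konbu, rice vinegar, sugar, salt, "
                   "avocado, imitation crab sticks, japanese mayo, salmon",
}, indent=4) + "\n" + FENCE

result = parse_json_snippet(reply)
print(result["cuisine_type"], to_minutes(result["preparation_time"]))
```

The integer check mirrors the guard in `RunPythonAnalysis`: when the model answers `null` for the preparation time, the property is simply left unset.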

That looks good. It seems that our OpenAI prompt is capable of returning some useful information. Let's run the whole analysis class from the terminal:

set a = ##class(yummy.analysis.SimpleOpenAI).%New(##class(yummy.data.Recipe).%OpenId(12))

do a.Run()

zwrite a

USER>zwrite a

a=37@yummy.analysis.SimpleOpenAI ; <OREF>

+----------------- general information ---------------

| oref value: 37

| class name: yummy.analysis.SimpleOpenAI

| reference count: 2

+----------------- attribute values ------------------

| CuisineType = "japanese"

| Difficulty = "easy"

| Ingredients = "sushi rice, water, konbu, rice vinegar, sugar, salt, avocado, imitation crab sticks, japanese mayo, salmon"

| PreparationTime = 30

| Reason = "It seems to be a japanese recipe!. You don't need too much time to prepare it"

| Score = 3

+----------------- swizzled references ---------------

| i%Recipe = ""

| r%Recipe = "30@yummy.data.Recipe"

+-----------------------------------------------------
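To see where `Score = 3` and the `Reason` text come from, here is a small Python sketch of the scoring rules in `Run()`. It is an illustration rather than the class itself, and it makes a few assumptions: the score starts at 0, the cuisine is matched exactly against a set (the ObjectScript code uses a substring test), and an unknown preparation time earns no bonus. `score_recipe` is a hypothetical name.

```python
FAV_CUISINES = {"spanish", "french", "portuguese", "italian", "korean", "japanese"}
FAV_INGREDIENTS = ["kimchi", "truffle", "squid"]


def score_recipe(cuisine_type, preparation_time, ingredients):
    """Return (score, reason) for the extracted recipe features."""
    score = 0
    reasons = []

    # +2 for a favourite cuisine type
    if cuisine_type in FAV_CUISINES:
        score += 2
        reasons.append(f"It seems to be a {cuisine_type} recipe!")

    # +1 when the recipe takes less than two hours (None means unknown)
    if preparation_time is not None and preparation_time < 120:
        score += 1
        reasons.append("You don't need too much time to prepare it")

    # +1 for each favourite ingredient mentioned in the ingredient list
    for fav in FAV_INGREDIENTS:
        if fav in ingredients:
            score += 1
            reasons.append(f"Favourite ingredient found: {fav}")

    return score, ". ".join(reasons)


score, reason = score_recipe(
    "japanese",
    30,
    "sushi rice, water, konbu, rice vinegar, sugar, salt, avocado, "
    "imitation crab sticks, japanese mayo, salmon",
)
print(score, "|", reason)
```

For the sushi recipe this gives 2 points for the cuisine and 1 for the preparation time, which matches the values shown by `zwrite`.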

## Analyzing all the recipes!

Naturally, you would like to run the analysis on all the recipes we have loaded.

You can analyze a range of recipe IDs this way:

USER>do ##class(yummy.Utils).AnalyzeRange(1,10)

> Recipe 1 (1.755185s)

> Recipe 2 (2.559526s)

> Recipe 3 (1.556895s)

> Recipe 4 (1.720246s)

> Recipe 5 (1.689123s)

> Recipe 6 (2.404745s)

> Recipe 7 (1.538208s)

> Recipe 8 (1.33001s)

> Recipe 9 (1.49972s)

> Recipe 10 (1.425612s)

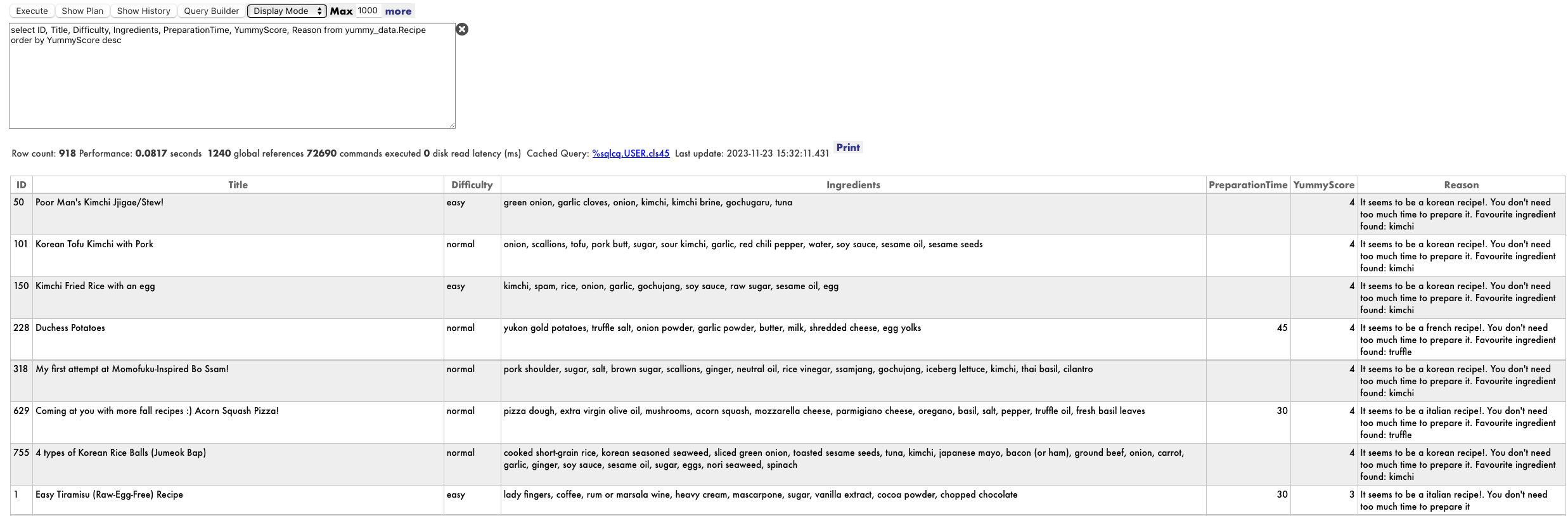
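The `AnalyzeRange` utility itself lives in the repository and is not shown in this article. As a guess at the general pattern (loop over IDs, time each analysis, keep going if one fails), a Python sketch could look like the following. The `analyze` callback is a hypothetical stand-in for whatever runs the analysis and saves the recipe; the error handling is an assumption of this sketch.

```python
import time


def analyze_range(first_id, last_id, analyze):
    """Call analyze(recipe_id) for each ID and return (id, seconds) pairs."""
    timings = []
    for recipe_id in range(first_id, last_id + 1):
        start = time.perf_counter()
        try:
            analyze(recipe_id)
        except Exception as exc:
            # One failing recipe (e.g. an API error) should not stop the batch.
            print(f"> Recipe {recipe_id} FAILED: {exc}")
            continue
        elapsed = time.perf_counter() - start
        timings.append((recipe_id, elapsed))
        print(f"> Recipe {recipe_id} ({elapsed:.6f}s)")
    return timings


# Stand-in analysis that does nothing; replace with a real call.
timings = analyze_range(1, 3, lambda recipe_id: None)
```

Since each recipe costs one LLM call of a second or two, timing every call makes it easy to estimate how long the full dataset will take.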

After that, have another look at your recipe table and check the results:

select * from yummy_data.Recipe

I think I could give Acorn Squash Pizza or Korean Tofu Kimchi with Pork a try :). I will have to double-check at home anyway :)

## Final notes

You can find the full example at https://github.com/isc-afuentes/recipe-inspector

With this simple example we've learned how to use LLM techniques to add features or to analyze some parts of your data in InterSystems IRIS.

With this starting point, you could think about:

- Using InterSystems BI to explore and navigate your data using cubes and dashboards.

- Creating a web app and providing some UI (e.g. Angular) for this. You could leverage packages like RESTForms2 to automatically generate REST APIs for your persistent classes.

- What about storing whether you like a recipe or not, and then trying to determine whether you will like a new recipe? You could try an IntegratedML approach, or even an LLM approach providing some example data and building a RAG (Retrieval Augmented Generation) use case.

What other things could you try? Let me know what you think!

Comments

Thanks @Alberto Fuentes for this very good example of LLM + Embedded Python.

Really interesting! Thank you @Alberto Fuentes !

💡 This article is considered InterSystems Data Platform Best Practice.