OpenAI integration with IRIS

As you all know, artificial intelligence is already here, and everyone wants to use it to their benefit.

There are many platforms that offer artificial intelligence services, whether free, by subscription or private. However, the one that stands out for the amount of "noise" it has made in the world of computing is OpenAI, mainly thanks to its best-known services: ChatGPT and DALL-E.

What is OpenAI?


OpenAI is an AI research laboratory founded as a non-profit in 2015 by Sam Altman, Ilya Sutskever, Greg Brockman, Wojciech Zaremba, Elon Musk, John Schulman and Andrej Karpathy, with the aim of promoting and developing friendly artificial intelligence that benefits humanity as a whole.

Since its foundation, the team has released some fascinating products that could be really powerful tools if used for good purposes. Yet, like any other new technology, they can also be used to commit crimes or do harm.

I decided to test the ChatGPT service and asked it for the definition of artificial intelligence. The answer I received was a collection of notions found on the Internet, summarized the way a human would respond.

In short, an AI can only reply using the information it was trained on. Using its internal algorithms and the data fed to it during training, it can compose articles, poems, or even pieces of computer code.

Artificial intelligence is going to have a considerable impact on industry and ultimately revolutionize everything… Or perhaps the expectations of how it will affect our future are overstated, so we should start using it correctly, for the common good.

We are tired of hearing that this new technology will change everything and that ChatGPT, or its sibling GPT-4, is the tool that will turn our world upside down. These tools will neither leave people without jobs nor rule the world (like Skynet). What we are trying to analyze here is the trend. We look at where we were before to understand what has been achieved so far, and thus anticipate where we will be in the future.

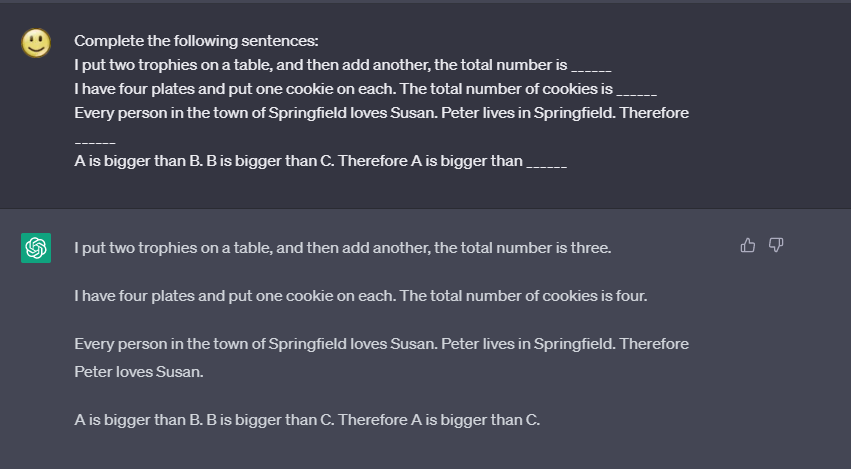

In 2020, psychologist and cognitive scientist Gary Marcus published an article analyzing how GPT-2 worked. His methodical study revealed that this type of tool did not actually understand what it was writing or the instructions it received.

“Here's the problem: upon careful inspection, it becomes apparent the system has no idea what it is talking about: it cannot follow simple sequence of events nor reliably have any idea what might happen next.”

Follow the link below to see the entire article: https://thegradient.pub/gpt2-and-the-nature-of-intelligence/

You can clearly see the evolution here! GPT-3 (2020) had to be fed enough inputs indicating what you wanted to achieve. The current GPT-4, by contrast, accepts instructions in natural language, which makes providing those inputs much easier. Now it "seems" to understand us and to know what it is talking about.

Now, when we use the same example that Gary Marcus designed for GPT-2 in 2020, we get the expected result:

OpenAI currently offers a set of tools that have evolved amazingly fast. If they are combined properly, they make it much easier to obtain more efficient results than in the past.

What products does OpenAI offer?

I am going to talk about the two best-known ones, DALL-E and ChatGPT. However, OpenAI also offers other services. Whisper transcribes audio into text and can even translate it into English. Embeddings measure how related text strings are, for search, recommendations, clustering, etc.
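Embeddings represent each text string as a vector of numbers, and the relatedness of two strings is commonly measured as the cosine similarity of their vectors. The Python sketch below shows only that calculation. It does not call the API, and the three-dimensional vectors are made-up stand-ins for real embeddings, which have many more dimensions.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two vectors: 1.0 means same direction, 0.0 unrelated."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same length")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        raise ValueError("vectors must not be all zeros")
    return dot / (norm_a * norm_b)


# Hypothetical toy vectors standing in for embeddings (not API output).
cat = [0.9, 0.1, 0.0]
kitten = [0.8, 0.2, 0.1]
invoice = [0.0, 0.1, 0.95]

print(round(cosine_similarity(cat, kitten), 3))
print(round(cosine_similarity(cat, invoice), 3))
```

With real embeddings, the string pairs with the highest scores are treated as the most related, which is the basis for search and recommendations.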

What do I need to use these services?

You only need to create an OpenAI account, which is very easy. Once you have one, you are all set to use the services directly through their websites:

Chat: https://chat.openai.com

DALL-E: https://labs.openai.com

We want to use these services from IRIS, so we need to access them through the OpenAI API. First, we must create an account and provide a payment method to be able to use the API. The cost is relatively low and depends on how much you use it. The more tokens you consume, the more you pay 😉

What is a token?

Tokens are the units the models use to understand and process text. A token can be a whole word or just a fragment of one. For example, the word "hamburger" is split into the tokens "ham", "bur" and "ger", while a short, common word like "pear" is a single token. Many tokens begin with a space, for example " hello" and " bye".
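Billing is per token, so it helps to estimate a text's token count before sending it. The Python sketch below uses OpenAI's rough rule of thumb of about four characters per token for common English text. This is only an approximation, because the real count depends on the model's tokenizer. The price per 1,000 tokens is a hypothetical parameter that you would take from OpenAI's current pricing page.

```python
import math


def estimate_tokens(text: str, chars_per_token: float = 4.0) -> int:
    """Rough token estimate: about 4 characters per token for English text."""
    if not text:
        return 0
    return math.ceil(len(text) / chars_per_token)


def estimate_cost(tokens: int, price_per_1k_tokens: float) -> float:
    """Cost of a number of tokens, given a price per 1,000 tokens."""
    return tokens / 1000 * price_per_1k_tokens


prompt = "Two cats with a hat reading a comic"
tokens = estimate_tokens(prompt)
# 0.002 is a placeholder price for illustration, not an official OpenAI rate.
print(tokens, estimate_cost(tokens, 0.002))
```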

Is it complicated to use the API?

Not at all. Follow the steps below and you will not have any problems:

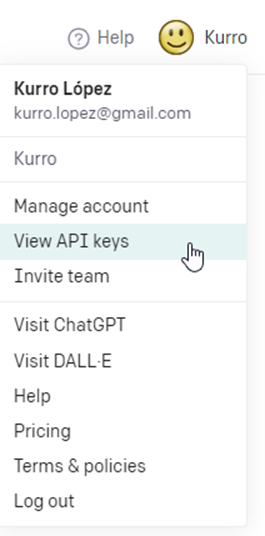

Step 1: Create an API Key

Select the "View API Key" option in your user menu.

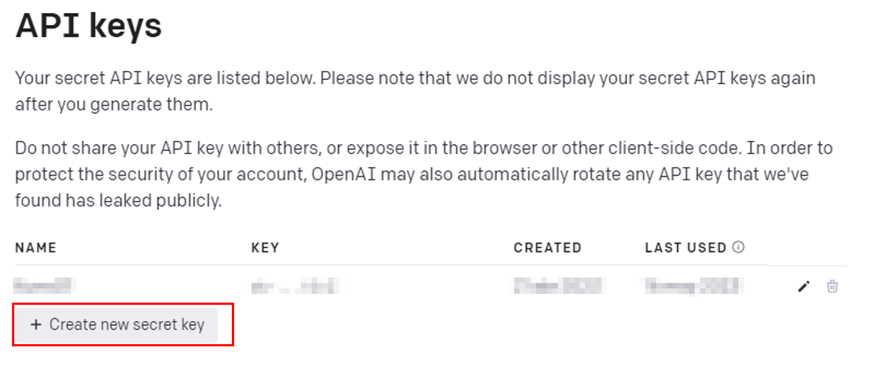

Step 2: Create a new secret key

Press the button at the bottom of the API Keys section.

VERY IMPORTANT: Once the secret key is created, it cannot be retrieved again, so remember to save it in a secure place.
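One simple way to keep the secret key out of your source code is to read it from environment variables. This Python sketch uses `OPENAI_API_KEY`, the variable name conventionally used by OpenAI's client libraries. The name `OPENAI_ORG_ID` for the organization is a hypothetical choice made for this example.

```python
import os
from collections.abc import Mapping
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class OpenAICredentials:
    secret_key: str
    organization_id: Optional[str] = None  # the organization is optional


def load_credentials(env: Mapping[str, str] = os.environ) -> OpenAICredentials:
    """Read the secret key (required) and the organization ID (optional)."""
    key = env.get("OPENAI_API_KEY")
    if not key:
        raise RuntimeError("OPENAI_API_KEY is not set")
    # OPENAI_ORG_ID is a hypothetical variable name chosen for this sketch.
    org = env.get("OPENAI_ORG_ID") or None
    return OpenAICredentials(secret_key=key, organization_id=org)


if __name__ == "__main__":
    # Pass a dict here to try it out; by default the real environment is read.
    creds = load_credentials({"OPENAI_API_KEY": "sk-MyPersonalSecretKey12345"})
    print(creds.organization_id is None)  # never print the key itself
```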

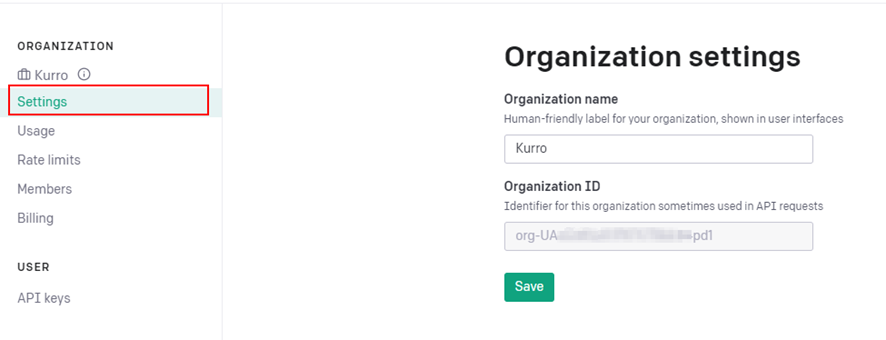

Step 3: Define the name of your organization

Defining your organization is not mandatory, but it is recommended, because it is part of the header of the API calls. You should also copy your organization ID for later use.

You can change the organization name as many times as you want.

Step 4: Prepare the API call using the Secret Key and the Organization ID

Every API call must include a Bearer token authentication header containing the Secret Key.

The Secret Key is also indicated as a header parameter, together with the Organization ID:

| Header parameter | Value |
| --- | --- |
| api-key | sk-MyPersonalSecretKey12345 |
| OpenAI-Organization | org-123ABCFakeId |

This is an example of an invocation:

```http
POST https://api.openai.com/v1/images/generations
Authorization: Bearer sk-MyPersonalSecretKey12345
api-key: sk-MyPersonalSecretKey12345
OpenAI-Organization: org-123ABCFakeId
Content-Type: application/json
```
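The same headers can be assembled programmatically. This Python sketch uses only the standard library. It builds, but does not send, a POST request carrying the headers from the table, including the `api-key` header. The helper names `build_headers` and `build_post` are hypothetical, and sending the request would need a real key and network access.

```python
import json
import urllib.request
from typing import Optional

API_BASE = "https://api.openai.com/v1"


def build_headers(secret_key: str, organization_id: Optional[str] = None) -> dict:
    """Headers shared by every endpoint."""
    headers = {
        "Authorization": f"Bearer {secret_key}",
        "api-key": secret_key,
        "Content-Type": "application/json",
    }
    if organization_id:  # the organization header is optional
        headers["OpenAI-Organization"] = organization_id
    return headers


def build_post(path: str, payload: dict, secret_key: str,
               organization_id: Optional[str] = None) -> urllib.request.Request:
    """Prepare (but do not send) a POST request with a JSON body."""
    return urllib.request.Request(
        f"{API_BASE}/{path.lstrip('/')}",
        data=json.dumps(payload).encode("utf-8"),
        headers=build_headers(secret_key, organization_id),
        method="POST",
    )


req = build_post("images/generations", {"prompt": "Two cats with a hat reading a comic"},
                 "sk-MyPersonalSecretKey12345", "org-123ABCFakeId")
print(req.get_method(), req.full_url)
# To actually call the API: urllib.request.urlopen(req) (requires a valid key).
```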

This configuration is common for all endpoints, so let's see how some of its best-known methods work.

Models

Endpoint: GET https://api.openai.com/v1/models

This endpoint lists all the models that OpenAI makes available, each with different characteristics. At the time of writing, the latest model for Chat is "gpt-4". Bear in mind that all model IDs are in lowercase.

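To retrieve a single model, append its ID to the endpoint (GET https://api.openai.com/v1/models/{model}). If no model ID is given, the endpoint returns all existing models.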

You can see its features and where you can use it on the OpenAI documentation page https://platform.openai.com/docs/models/overview
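The Python sketch below shows how the two forms of the Models URL differ and how model IDs could be pulled out of a list response. The sample dictionary is hand-written to mimic the shape of the API's list response (an `object` of `"list"` with a `data` array). It is not real output, and the helper names are hypothetical.

```python
from urllib.parse import quote

API_BASE = "https://api.openai.com/v1"


def models_url(model_id: str = "") -> str:
    """GET this URL: all models when model_id is empty, otherwise a single model."""
    if not model_id:
        return f"{API_BASE}/models"
    # Model IDs are lowercase, so normalise before building the URL.
    return f"{API_BASE}/models/{quote(model_id.lower())}"


def model_ids(list_response: dict, prefix: str = "") -> list:
    """Sorted model IDs from a 'list models' response, optionally filtered by prefix."""
    return sorted(m["id"] for m in list_response.get("data", []) if m["id"].startswith(prefix))


# Hand-written sample shaped like the list response (not real API output).
sample = {
    "object": "list",
    "data": [
        {"id": "gpt-4", "object": "model", "owned_by": "openai"},
        {"id": "gpt-3.5-turbo", "object": "model", "owned_by": "openai"},
        {"id": "whisper-1", "object": "model", "owned_by": "openai"},
    ],
}
print(models_url(), models_url("GPT-4"))
print(model_ids(sample, prefix="gpt-"))
```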

Chat

Endpoint: POST https://api.openai.com/v1/chat/completions

It generates the model's next reply in a conversation, which you pass in as a list of messages. You can set the maximum number of tokens to generate and the sequences at which generation should stop.

The input parameters will be as follows:

- model: Required. The ID of the model to use. You can call the Models endpoint described above to see all the available models, or check the model overview for their descriptions.

- messages: Required. The messages of the conversation so far. Each message indicates its role, so you can build a dialog stating whether each message comes from the user or the assistant. Each message contains:

  - role: The role of the message author, for example "system", "user" or "assistant".

  - content: The content of the message.

- temperature: Optional. The sampling temperature, a value between 0 and 2. Higher values make the result more random; lower values make the answer more focused and deterministic. If it is not defined, the default value is 1.

- stop: Optional. Up to 4 sequences at which the API stops generating further tokens. If it is not defined (the default is null), generation continues until the model finishes its answer or reaches max_tokens.

- max_tokens: Optional. The maximum number of tokens to generate in the reply. The prompt tokens plus the generated tokens cannot exceed the model's context length.

Check out the link below for the documentation describing this method: https://platform.openai.com/docs/api-reference/chat/create
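The parameters above map directly onto the JSON body of the request. This Python sketch assembles that body and checks the limits described in the list: temperature between 0 and 2, and at most 4 stop sequences. It then reads the reply from a hand-written response shaped like a chat completion, which is not real output. The helper names are hypothetical, and the accepted roles are limited to the three mentioned above.

```python
import json
from typing import Optional, Sequence, Union

ROLES = ("system", "user", "assistant")  # roles accepted by this sketch


def chat_payload(model: str, messages: Sequence[dict], temperature: Optional[float] = None,
                 stop: Union[str, Sequence[str], None] = None,
                 max_tokens: Optional[int] = None) -> dict:
    """Build the JSON body for POST /v1/chat/completions."""
    for m in messages:
        if m.get("role") not in ROLES or "content" not in m:
            raise ValueError(f"invalid message: {m!r}")
    body = {"model": model, "messages": list(messages)}
    if temperature is not None:
        if not 0 <= temperature <= 2:
            raise ValueError("temperature must be between 0 and 2")
        body["temperature"] = temperature
    if stop is not None:
        stops = [stop] if isinstance(stop, str) else list(stop)
        if not 1 <= len(stops) <= 4:
            raise ValueError("stop accepts 1 to 4 sequences")
        body["stop"] = stops
    if max_tokens is not None:
        body["max_tokens"] = max_tokens
    return body


def reply_text(response: dict) -> str:
    """The assistant's text from the first choice of a chat completion response."""
    return response["choices"][0]["message"]["content"]


body = chat_payload(
    "gpt-4",
    [
        {"role": "system", "content": "You are a helpful assistant."},
        {"role": "user", "content": "What is artificial intelligence?"},
    ],
    temperature=0.2,
    max_tokens=200,
)
print(json.dumps(body, indent=2))

# Hand-written sample shaped like a chat completion response (not real output).
sample = {"choices": [{"index": 0, "finish_reason": "stop",
                       "message": {"role": "assistant", "content": "AI is..."}}]}
print(reply_text(sample))
```

Optional parameters are only added when set, so the API applies its own defaults for anything left out.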

Image

Endpoint: POST https://api.openai.com/v1/images/generations

It creates images from the description given in the prompt parameter. We can also define the image size and how we want the result returned: as a link or as Base64 content.

The input parameters are as follows:

- prompt: Required. A text description of the image we want to generate.

- n: Optional. The number of images to generate, between 1 and 10. If it is not indicated, the default value is 1.

- size: Optional. The size of the generated images: "256x256", "512x512" or "1024x1024". If it is not indicated, the default value is "1024x1024".

- response_format: Optional. The format in which the generated images are returned: "url" or "b64_json". If it is not indicated, the default value is "url".

Check out the link below for the documentation describing this method: https://platform.openai.com/docs/api-reference/images/create
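As with Chat, the image parameters become the JSON body of the request. This Python sketch validates them against the values listed above and fills in the documented defaults. The function name `image_payload` is hypothetical. Sending the defaults explicitly is a design choice that makes each request self-describing; omitting them would produce the same result.

```python
VALID_SIZES = ("256x256", "512x512", "1024x1024")
VALID_FORMATS = ("url", "b64_json")


def image_payload(prompt: str, n: int = 1, size: str = "1024x1024",
                  response_format: str = "url") -> dict:
    """Build the JSON body for POST /v1/images/generations."""
    if not prompt:
        raise ValueError("prompt is required")
    if not 1 <= n <= 10:
        raise ValueError("n must be between 1 and 10")
    if size not in VALID_SIZES:
        raise ValueError(f"size must be one of {VALID_SIZES}")
    if response_format not in VALID_FORMATS:
        raise ValueError(f"response_format must be one of {VALID_FORMATS}")
    return {"prompt": prompt, "n": n, "size": size, "response_format": response_format}


print(image_payload("Two cats with a hat reading a comic", n=2, size="512x512"))
```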

What does iris-openai offer?

Link: https://openexchange.intersystems.com/package/iris-openai

This framework provides request and response messages with the properties needed to connect to OpenAI and use its Chat, Models and Images methods.

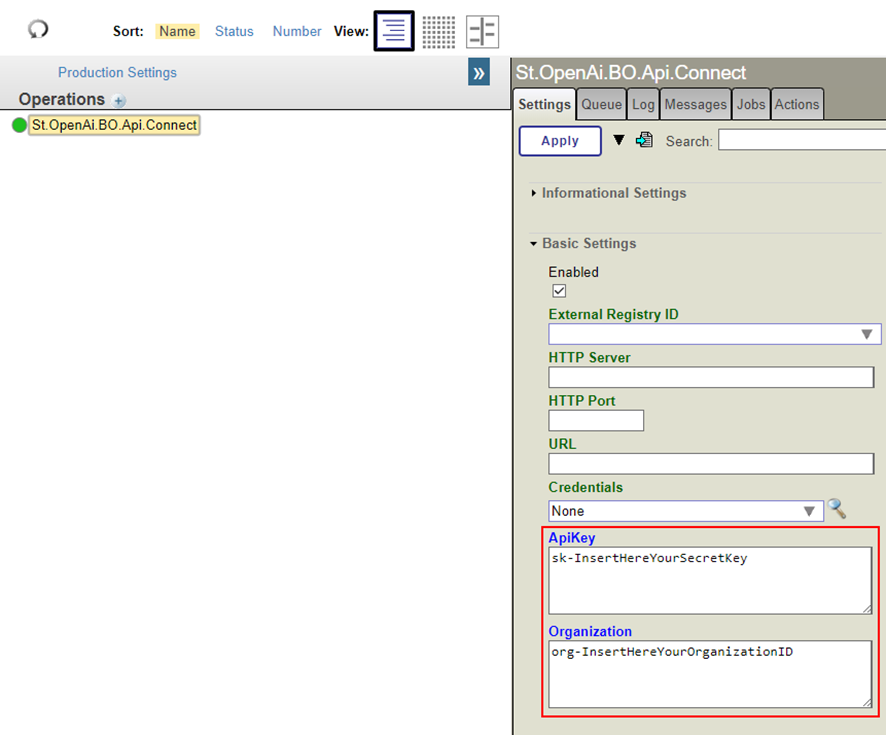

You can configure your production so that it uses these messaging classes to call a Business Operation that connects to the OpenAI API.

Remember to configure the Secret Key and Organization ID in the production, as described above.

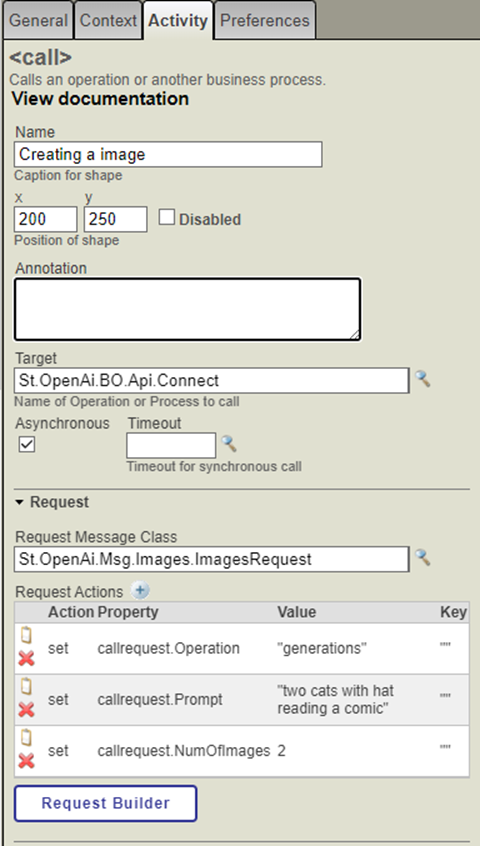

To create an image, create an instance of the class "St.OpenAi.Msg.Images.ImagesRequest" and set the options for the new picture.

Example:

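```objectscript
// Build the request message for the image generation method
Set image = ##class(St.OpenAi.Msg.Images.ImagesRequest).%New()
Set image.Operation = "generations"
// Text description of the image to generate
Set image.Prompt = "Two cats with a hat reading a comic"
// Number of images to generate
Set image.NumOfImages = 2
```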

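When the request is ready, send it to the Business Operation "St.OpenAi.BO.Api.Connect", for example with SendRequestSync from a Business Service or Business Process.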

Note: In this case, the response contains the links to the two generated images:

```json
{
  "created": 1683482604,
  "data": [
    {
      "url": "https://link_of_image_01.png"
    },
    {
      "url": "https://link_of_image_02.png"
    }
  ]
}
```

If we asked for Base64 content instead of links, the response looks like this:

```json
{
  "created": 1683482604,
  "data": [
    {
      "b64_json": "iVBORw0KGgoAAAANSUhEUgAABAAAAAQACAIAAADwf7zUAAAAaGVYSWZNTQAqAAAACAACknwAAgAAACkAAAAmkoYAAgAAABgAAABQAAAAAE9wZW5BSS0tZjM5NTgwMmMzNTZiZjNkMDFjMzczOGM2OTAxYWJiNzUAAE1hZGUgd2l0aCBPcGVuQUkgREFMTC1FAAjcEcwAAQAASURBVHgBAAuE9HsBs74g/wHtAAL7AAP6AP8E+/z/BQYAAQH++vz+CQcH+fn+AgMBAwQAAPr++///AwD+BgYGAAIC/fz9//3+AAL7AwEF/wL+9/j9DQ0O/vz/+ff0CQUJAQQF/f/89fj4BwcD/wEAAfv//f4BAQQDAQH9AgIA/f3+AAABAgAA/wH8Af/9AQMGAQIBAvv+/////v/+/wEA/wEAAgMA//sCBAYCAQ"
    },
    {
      "b64_json": "D99vf7BwcI/v0A/vz9/wH8CQcI+vz8AQL9/vv+CAcF+wH/AwMA9/f8BwUEAwEB9fT+BAcKBAIB//7//gX5//v8/P7+DgkO+fr6/wD8AP8B/wAC/f4CAwD+/wT+Av79BwcE/Pz7+/sBAAD+AAQE//8BAP79AgIE///+AQABAv8BAwYA+vkB/v7/AwQE//7+/Pr6BAYCBgkE/f0B/Pr6AQP+BAED/gMC/fr+AwEC/v/+//7+CQcH+fz5BAYB9vf9BgQD+/n+BwYK/wD////9/gD5AwIDAAQE+/j6BAUD//rwAC/fr6+wYEBAQAA/4B//v6+/8AAAUDB/L49woGAQMDCfr7+wMCAQMHBPvy+AQJBQD+/wEEAfr3+gIGBgP/Af3++gUFAvz9//4A/wP/AQIGBPz+/QD7/wEDAgkGCPX29wMCAP4FBwX/+23"
    }
  ]
}
```
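Whichever format you request, the images arrive in the `data` array. The Python sketch below collects the links from a `url` response and decodes a `b64_json` response into files. The `.png` extension assumes PNG content, which is what the Base64 sample above begins with (`iVBORw0KGgo` is the PNG signature). The Base64 strings shown above are truncated, so the sketch uses a tiny synthetic payload instead. The helper names and the file-naming scheme are hypothetical.

```python
import base64
import tempfile
from pathlib import Path


def image_urls(response: dict) -> list:
    """Links returned when response_format is "url"."""
    return [item["url"] for item in response.get("data", []) if "url" in item]


def save_b64_images(response: dict, folder: str, stem: str = "image") -> list:
    """Decode each b64_json item to <folder>/<stem>_<nn>.png and return the paths."""
    out_dir = Path(folder)
    out_dir.mkdir(parents=True, exist_ok=True)
    paths = []
    for i, item in enumerate(response.get("data", []), start=1):
        if "b64_json" not in item:
            continue
        path = out_dir / f"{stem}_{i:02d}.png"
        path.write_bytes(base64.b64decode(item["b64_json"]))
        paths.append(path)
    return paths


# Synthetic payload shaped like the Base64 response (not real image data).
sample = {"created": 1683482604,
          "data": [{"b64_json": base64.b64encode(b"\x89PNG fake").decode("ascii")}]}
with tempfile.TemporaryDirectory() as tmp:
    for p in save_b64_images(sample, tmp):
        print(p.name, p.stat().st_size)
```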

What's next?

After the release of this article, iris-openai will be extended to support the Whisper methods and image editing.

A further article will explain how to use these methods, how to include our own images and how to transcribe audio content.

Comments

very cool! thank you for the time you spent compiling this and sharing it!

Nice one. Exactly as how it should be on a BO config. :) AI was never made so simple yet so powerful before. Really appreciate the way it was being kept simple.

> Set image.Prompt = "Two cats with a hat reading a comic"

Come on, why wouldn't you post the results?

+1. Would love to see Stable Diffusion and Midjourney results too, although not as simple as Dall-E2. Pity it still can't draw an E2E solution diagram accurately for the routes and interfaces yet. If anyone happens to identify a 3rd party syntax tool... :)


@Eduard Lebedyuk Here you have ;)

Nice article. Keep going.