Full-screen editor for routines, arrays and text files in terminal mode

Hello developers! I would like to present a set of terminal-mode editors. A full-screen editor for routines, arrays and text files that runs in the terminal can be useful when you are debugging a project in Docker, or when the web interface is unavailable or limited for some reason. Although the project is self-sufficient, I decided to build it as an add-on to the ‘zapm’ module to make the editor commands more convenient to call.

If your instance does not have ZPM installed, you can install ZPM and the zapm-editor module in one line:

set $namespace="%SYS", name="DefaultSSL" do:'##class(Security.SSLConfigs).Exists(name) ##class(Security.SSLConfigs).Create(name) set url="https://pm.community.intersystems.com/packages/zpm/latest/installer" Do ##class(%Net.URLParser).Parse(url,.comp) set ht = ##class(%Net.HttpRequest).%New(), ht.Server = comp("host"), ht.Port = 443, ht.Https=1, ht.SSLConfiguration=name, st=ht.Get(comp("path")) quit:'st $System.Status.GetErrorText(st) set xml=##class(%File).TempFilename("xml"), tFile = ##class(%Stream.FileBinary).%New(), tFile.Filename = xml do tFile.CopyFromAndSave(ht.HttpResponse.Data) do ht.%Close(), $system.OBJ.Load(xml,"ck") do ##class(%File).Delete(xml) zpm "install zapm-editor"

Program editor

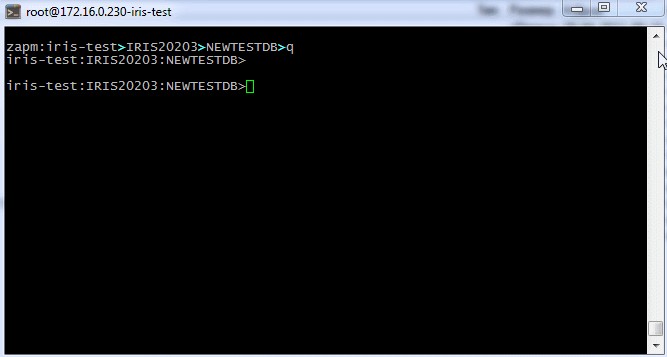

The program editor is invoked from the terminal with the command zapm "edit-rou", or with the short command "edr" in the shell. This opens the line editor, where you can change the name of the program or select it from the directory by entering “*”. Pressing F1 shows the help text. The up arrow recalls the history of previously entered program names. Let's write a program that creates a test multilevel array.
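A minimal sketch of what such a program might look like. The routine name, loop bounds and node values are assumptions made for illustration; only the global name ^test matches the commands used later in this article.

```objectscript
maketest ; hypothetical routine that builds a two-level test global
    kill ^test
    for i=1:1:1000 {
        // first-level node with a plain string value
        set ^test(i)="node "_i
        for j=1:1:3 {
            // second-level nodes hold $LIST values
            set ^test(i,j)=$listbuild("a"_i,"b"_j)
        }
    }
    quit
```

After saving and compiling the routine, it could be run with do ^maketest.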

Array editor

You can enter the array editor from the terminal with the command zapm "edit-glo", or in the shell with the short command "edg". Let's load the array editor with the command zapm edit-glo test and confirm the name in the line editor. Here, too, the up arrow recalls the history. Entering "*" opens the globals directory.

PgUp and PgDn scroll the global's output up and down. The up arrow starts streaming output, and any key, such as the space bar, stops it. F1 shows the help.

To edit the current node, press F4. In edit mode, you can move from node to node, up or down. Pressing the space bar opens the line editor for the node. When you finish editing, confirm the change with Enter or cancel it with Esc. To edit a specific node, press F7 and enter the desired index, for example 1000.

The F6 key filters the output so that only nodes satisfying the entered conditions are displayed. For example, let's enter a condition that displays only nodes of the 2nd level. Let's change the values inside the list of values g [",". The filter stays active until we leave the array editor and return to the line editor.

Another editing mode, on the F5 key, navigates through the array like a tree, level by level. Move up and down the nodes, enter a level with Enter and leave it with Esc. F4 edits the node.

Now let's exit the editor completely, copy the global to a local array under a different name, and open the copy in the editor by entering the commands:

USER>merge local=^test

USER>zapm "edit-glo local"

Let's edit the last node of the local array, then exit and use the zwrite command to show the modified array. Everything that can be done with global arrays can also be done with local ones.
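To check from plain ObjectScript what a "2nd level only" view contains, a loop like the following could be used. This is only a sketch of the idea, not how the editor's F6 filter is implemented; it is meant as lines in a routine and assumes the array name is held in the variable ref.

```objectscript
    set ref="^test"   // use "local" instead to walk the local copy
    for {
        // $QUERY returns the next node reference in collating order, or "" at the end
        set ref=$query(@ref)
        quit:ref=""
        // $QLENGTH returns the number of subscripts in the reference
        continue:$qlength(ref)'=2
        write ref,!
    }
```

Because the reference is resolved through indirection, the same loop works for a global and for a public local array.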

Navigating ZPM modules in namespaces

You can enter this module from the terminal with the command zapm "edit-zpm", or in the shell with the short command "edz". Let's load the list of namespaces and go to a namespace that has a ZPM module installed. Let's go to the root directory of the module and look at the classes. Then let's open the module.xml file, exit it, and go to readme.md. If needed, text files can be edited by pressing F4. With the editor enabled, you can copy the current line to the clipboard with Ctrl-C and paste from the clipboard with Ctrl-V.

Filesystem Navigator

You can enter this module from the terminal with the command zapm "edit-file", or in the shell with the short command "edf". Let's load the file navigator and walk through the directories, looking into the files. Then let's go to the module.xml file of the apptools-editor application downloaded from the directory. Pressing F4 switches to editing mode. Let's change the version of the module in it. Now let's type the command to view the log file.

USER>Zapm "edit-file /opt2/iris/msg/messages.log"

Or open it using the short form:

USER>zapm "edf mess"

Or open a file by its shortcut:

USER>zapm "edf cpf"

If you think it will be useful for you, please vote for my project.


Comments

Just great! I like it.

With a solid partition / session under the feet: no local stuff, no browsers!

(btw. I had something similar in mind but this is much better)

Thanks a lot, Robert.

It's better not to look into the source code, I'm afraid to look there myself. Some parts of the global editor's code still remember DSM11, and the release took place in 1991.

Why not take advantage of what is there and what is still working well?

You can't get all the %R*.int and %G*.int routines in Studio (no idea about VSCode)

But a closer look at the well-known system globals and a ZLOAD brings those zombies back to life.

Ha ha ha, love the Zombies 🧟‍♂️. I have written little utilities based around ^$GLOBAL so that I can select a range of globals based on a wildcard *{partial_global_name}*, with an exclusion clause specified as -*{partial_global_name}* that excludes those globals from the resulting selection. I then use the resulting array to perform actions such as export, delete, or kill the index globals for a range of D (data) globals and then run %BuildIndices().

I also wrote a global traverse function that would walk through a global, nesting down through sub-nodes to N levels, and then export the resulting tree to a file, using List to String for $List forms of globals. This is obviously something that the Management Portal -> Globals does, but the code is well hidden. You could also specify an array of pseudo node syntax (global.level1...,levelN) followed by a field number and either an expression or a classmethod call that would modify that value. The modified global(s) were then written to a new file which I could import. The import would kill the original globals and, during the import, convert the de-listed strings back into lists. It also allowed fields to be inserted or removed, again with an expression or method call to populate the inserted field with a value.

It was very useful when working with old-style globals and for retro-populating existing data for classes that had been modified: where the data type of a field had changed, a required field had been added, or a property had been removed and the storage definition had changed, so that the global was out of sync with the new storage definition.

It was very useful in the days when we created globals with many levels, as we used to do years ago. These days you seldom design globals like that, especially if you want to use SQL on them.

But it was fun to work out the traverse algorithm using the @gblref@() syntax while avoiding stack overflows.
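A non-recursive walk of this kind can be sketched as follows. It is only an illustration, not the commenter's code: the label Walk, its parameters and the output format are assumptions. Instead of recursing, it keeps the current subscript of each level in a local array and uses subscript indirection with $ORDER to step through siblings, so the depth of the global does not grow the call stack.

```objectscript
Walk(gblref, maxLevel) public {
    // stack(n) holds the current subscript at depth n; "" means "start of level"
    set level=1, stack(1)=""
    while level>0 {
        // rebuild the reference to the parent of the current level
        set parent=gblref
        for i=1:1:level-1 { set parent=$name(@parent@(stack(i))) }
        // advance to the next sibling at this level
        set stack(level)=$order(@parent@(stack(level)))
        if stack(level)="" {
            // no more siblings: go back up one level
            set level=level-1
            continue
        }
        set node=$name(@parent@(stack(level)))
        // print the reference if the node itself holds a value
        if $data(@node)#2 { write node,! }
        // descend only if there are sub-nodes and the depth limit allows it
        if ($data(@node)>=10) && (level<maxLevel) {
            set level=level+1, stack(level)=""
        }
    }
}
```

Placed in a routine (the name is hypothetical), it could be called as do Walk^MyUtils("^test",3) to list nodes down to three levels.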