Developing and Maintaining Code for Interoperability Components Within a Healthcare Institutional Environment

Here are some lessons we learned from developing and maintaining code for interoperability components within a healthcare institutional environment.

The plane is already flying

Be prepared to rebuild, improve, extend, and fix the plane mid-flight.

Maintenance windows for hospital systems are often very limited, and some systems must be operational 24/7. While critical health systems such as imaging modalities or registration kiosks (or at least their critical functions) must operate autonomously, their efficiency and usability may be compromised when interoperability components malfunction. For instance, outpatient registration kiosks may fail or route patients incorrectly if appointment and practitioner data are missing or outdated, resulting in increased workload and stress.

Since IT teams and their budgets tend to be smaller in healthcare than in banking, for example, developers are often responsible for the entire lifecycle of interoperability components: development, testing, deployment, maintenance, and eventual decommissioning.

High availability and mirroring in IRIS, combined with modern infrastructure (e.g., virtual server hosts), offer strong technical resilience. However, they do not eliminate the risk of software-related issues. To reduce those risks while ensuring uninterrupted service, you can apply several complementary strategies:

- Maintain a code repository and use revision control.

- Establish and support dedicated operating environments where features can be developed, and planned releases can be tested end-to-end.

- Monitor production environments and alert system administrators about critical failures.

Maintaining a code repository

From my perspective, using a single Git repository for all interoperability namespaces is the most effective way to promote code reuse and consistency. Depending on the dev team's maturity, the branching scheme can evolve from a simple main/develop pair to a full GitFlow branching model.

The repository has the following directories:

| Directory | Description |
|---|---|
| (root) | Readmes, changelogs, Dockerfiles, … |
| src | All resources supported by the IRIS compiler: classes, lookup tables, HL7 schemas, etc. Package structure and class names should follow established coding guidelines and naming conventions. |
| resources | All resources that are not supported by the IRIS compiler, such as test data, code value sets, diagram source text, etc. |
| scripts | Scripts used to run unit tests, deploy code, etc. (see examples below). |
| out | All output that should not be pushed into the repository, such as test results, other generated artifacts, diagrams, etc. It is also well suited for use as ^UnitTestRoot. This directory is listed in .gitignore. |

The repository should contain all artifacts (classes, lookup tables, HL7 schemas, etc.) that are not considered configuration elements.

Some classes, however, are regarded as configuration elements and require special handling, as their code is usually generated, varies across operating environments, and can be changed by system administrators using the Management Portal editors.

These include interoperability productions (classes extending Ens.Production) and business or routing rules (classes extending Ens.Rule.Definition).
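A deployment script needs to tell these configuration classes apart from ordinary code, for example to skip them when importing a branch into production. The Python sketch below is one possible approach, assuming UDL `.cls` files under `src`: it reads each class declaration and checks whether the class directly extends `Ens.Production` or `Ens.Rule.Definition`. It only looks at direct superclasses, so a production or rule inheriting through an intermediate class of your own would need an extra lookup; the function names are hypothetical.

```python
import re
from pathlib import Path

# Base classes whose subclasses are treated as configuration elements.
CONFIG_BASE_CLASSES = {"Ens.Production", "Ens.Rule.Definition"}

# Matches a UDL class declaration, e.g.
#   Class My.Production Extends Ens.Production
#   Class My.Rule Extends (Ens.Rule.Definition, My.Mixin) [ ProcedureBlock ]
CLASS_DECL = re.compile(
    r"^Class\s+(?P<name>[\w.%]+)\s+Extends\s+"
    r"(?:\((?P<multi>[^)]*)\)|(?P<single>[\w.%]+))",
    re.MULTILINE,
)


def direct_superclasses(udl_source: str) -> tuple[str, set[str]]:
    """Return the class name and its directly declared superclasses."""
    match = CLASS_DECL.search(udl_source)
    if match is None:
        return "", set()
    supers = match.group("multi") or match.group("single")
    return match.group("name"), {s.strip() for s in supers.split(",") if s.strip()}


def is_configuration_element(udl_source: str) -> bool:
    """True if the class directly extends one of the configuration base classes."""
    _, supers = direct_superclasses(udl_source)
    return bool(supers & CONFIG_BASE_CLASSES)


def split_sources(src_dir: Path) -> tuple[list[Path], list[Path]]:
    """Split the .cls files under src_dir into (code, configuration) lists."""
    code, config = [], []
    for path in sorted(src_dir.rglob("*.cls")):
        text = path.read_text(encoding="utf-8")
        (config if is_configuration_element(text) else code).append(path)
    return code, config
```

For example, `code, config = split_sources(Path("src"))` returns two lists of paths; the first can be imported by a script, while the second is reviewed and applied by hand.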

Operating Environments and Change Management

Development and release management for services built on InterSystems IRIS typically follow the same practices as for other software platforms. To maintain high availability in the production environment, additional operating environments support a structured, controlled release process.

The environments run in separate IRIS instances, ideally utilizing the same IRIS version.

Using different platforms for development and for the other environments is perfectly acceptable, provided the code accounts for these differences. In practice, this means, for example, avoiding issues related to shared file access, line terminators, and path delimiters.

Development Environment (DEV)

When designing interfaces with vendor‑supplied software, accurate and comprehensive specifications are essential for a successful and efficient process. In the context of EU public procurement tenders, it is good practice to include these technical specifications as mandatory in the technical section of the tender documentation.

For development and unit testing, you can use either a shared environment set up with the excellent Embedded Git server-side source control, or separate environments sharing a single Git repository.

Unit tests running in the development environment should not depend on external resources such as databases, APIs, or application HL7 interfaces. I set up two namespaces on DEV instances:

- DEV: Used for active development and running unit tests one by one

- TEST (or SCRATCH): Used for running all test suites after cloning a repository branch and re-creating (or wiping) the namespace. Loading and compiling the entire codebase in a clean namespace lets you ensure that all dependencies are properly resolved. Running the unit tests and fixing issues until they succeed minimizes the risk of regression.

Maintenance tasks on those namespaces are kept to a minimum:

- No journaling (except in rare cases when required for testing).

- No backups

- Interoperability message purge is set to keep a minimal history

Tests are implemented as classes extending the %UnitTest.* classes (see, for example, ks-iris-lib). Unit tests do not depend on external resources; they use data stored in the repository's 'resources' directory or included as XData blocks in test classes.

During development, tests are run as needed using the VSCode test harness, while complete runs are performed via a script.

The script clones a repository branch (usually the develop or feature branch), loads and compiles all classes, and runs the complete set of available unit tests. Take a look at the example of the Windows shell script below:

```bat
@echo off
setlocal
rem A utility to clone IRIS Health items from a git repository and run unit tests in an empty namespace
rem
rem Usage:
rem   unit-tests <iris instance> <iris namespace> [<branch>]
rem
rem Example:
rem   unit-tests IRISHEALTH SCRATCH
rem
rem Prerequisites:
rem   * git installed and in path
rem   * InterSystems IRIS Health installed
rem   * disk space in %TEMP% volume for a clone of the repository
rem   * existing IRIS Health instance and namespace
rem
rem IRIS installation folder
rem
set IRISHome=c:\intersystems\IRISHealth
rem
rem repository http URL
rem
set Repository=https://github.com/theanor/ks-iris-lib
if "%3"=="" (set Branch=develop) else (set Branch=%3)
if "%1"=="" (set IRISInstance=IRISHealth) else (set IRISInstance=%1)
if "%2"=="" (set IRISNameSpace=SCRATCH) else (set IRISNameSpace=%2)
set TargetDir=%TEMP%\iris-health-deploy
echo Cleaning target directory
if exist "%TargetDir%" rmdir /s/q "%TargetDir%"
echo Cloning repository %Repository% branch %Branch%
git clone --branch %Branch% %Repository% "%TargetDir%"
rem stop here if the clone failed
if errorlevel 1 exit /b 1
echo Wiping namespace %IRISNameSpace%
"%IRISHome%\bin\irissession" %IRISInstance% -U %IRISNameSpace% "##class(%%UnitTest.Manager).WipeNamespace()"
echo Importing code into %IRISNameSpace%
"%IRISHome%\bin\irissession" %IRISInstance% -U %IRISNameSpace% "##class(%%SYSTEM.OBJ).ImportDir(""""""%TargetDir%\src"""""",""""""*.*"""""",""""""ckbry"""""",,1)"
echo Running repository tests in %IRISNameSpace%
"%IRISHome%\bin\irissession" %IRISInstance% -U %IRISNameSpace% "##class().RunRepositoryTests(""""""%IRISNameSpace%"""""",""""""%TargetDir%"""""")"
```

TEST Environment (TEST)

Setting up and maintaining application test environments may incur additional licensing or other costs. When drafting technical specifications for EU public procurement tenders, it is good practice to include a requirement for at least one test environment.

While the DEV environment is used for unit testing, the TEST environment is utilized for integration testing. It may employ external resources such as databases and APIs exposed by test instances or replicas of interfaced applications.

In this context, it is helpful to clearly separate unit test classes and integration ones. This can be achieved by placing them in different packages or by using a dedicated marker parameter (for example, INTEGRATIONTEST = 1) to identify integration tests.
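As an illustration, a test script could classify test classes from their UDL source, for example to place unit and integration tests in separate directories under ^UnitTestRoot. The Python sketch below assumes a hypothetical `IntegrationTest` package segment and the `INTEGRATIONTEST` marker parameter mentioned above; either one flags a class as an integration test. The function names are hypothetical.

```python
import re

# Hypothetical package segment used to group integration tests,
# e.g. "Site.IntegrationTest.Lab.ORU" (adapt to your naming conventions).
INTEGRATION_PACKAGE_SEGMENT = "IntegrationTest"

# Matches a marker parameter such as:  Parameter INTEGRATIONTEST = 1;
MARKER = re.compile(
    r"^\s*Parameter\s+INTEGRATIONTEST(?:\s+As\s+[\w.%]+)?\s*=\s*1\s*;",
    re.MULTILINE,
)
CLASS_NAME = re.compile(r"^Class\s+([\w.%]+)", re.MULTILINE)


def is_integration_test(udl_source: str) -> bool:
    """Classify a test class from its UDL source (package or marker parameter)."""
    match = CLASS_NAME.search(udl_source)
    package_parts = match.group(1).split(".")[:-1] if match else []
    return INTEGRATION_PACKAGE_SEGMENT in package_parts or bool(MARKER.search(udl_source))


def partition(sources: dict[str, str]) -> tuple[list[str], list[str]]:
    """Split {file name: UDL source} into (unit test files, integration test files)."""
    unit, integration = [], []
    for name, text in sorted(sources.items()):
        (integration if is_integration_test(text) else unit).append(name)
    return unit, integration
```

A marker parameter keeps the package layout free, while a package convention makes the split visible in the repository tree; supporting both lets a codebase migrate from one to the other.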

Code is deployed to the TEST environment (typically from the develop branch or a feature branch) using the same techniques as for the production environment, either via scripts or by using Embedded Git to import and compile artifacts from Git repository branches.

Quality Assurance environments (QA)

While the TEST environment is used for integration testing, QA environments are used for end-to-end and user acceptance testing. They may also be used for user training.

QA environments interface with the test instances of external applications.

If you need to perform non-regression testing and incident analysis (reproducing bugs outside the production environment) simultaneously with user training, separate QA environments may be required to maintain three different setups:

- QA -1: Previous release, used for incident management (checking whether a bug was already present).

- QA 0: Current release, used for training and incident management (reproducing incidents or bugs).

- QA +1: Next release, used for integration and non-regression testing.
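The rotation between these three environments can be expressed as a small function. The sketch below is a hypothetical helper (release identifiers and environment labels are assumptions): given the releases in chronological order and the release currently running in production, it returns the release each QA environment should host, with `None` when there is no such release.

```python
from typing import Optional, Sequence


def qa_layout(releases: Sequence[str], current: str) -> dict[str, Optional[str]]:
    """Return the release each QA environment should run.

    releases: release identifiers in chronological order (oldest first)
    current:  the release currently running in production
    """
    i = releases.index(current)  # raises ValueError for an unknown release
    return {
        "QA -1": releases[i - 1] if i > 0 else None,  # previous release
        "QA 0": current,  # current release
        "QA +1": releases[i + 1] if i + 1 < len(releases) else None,  # next release
    }
```

After each production release, recomputing the layout shows which environment must be redeployed: every QA environment shifts by one release.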

Comprehensive end-to-end test coverage of the application use cases that involve interoperability components is the key to a successful deployment. Remember to define end-to-end scenarios around real use cases together with project stakeholders.

Monitoring and Alerting

As these environments connect dozens of systems that exchange data across the network, the interoperability productions (and the IRIS instances running them) can generate substantial volumes of logs and alert information.

Maintaining a curated list of interfaced applications and configuring them as business partners allows you to build on the existing alerting framework. The business partner code can also be linked to additional data about the application obtained from other sources, such as a configuration management database.

To avoid alert fatigue, it is crucial to filter out the noise and deliver only clear, relevant alerts to the right recipients. However, doing so with a high degree of precision is anything but easy!

It is helpful to start with something simple and gradually reduce the noise level by tuning alert filtering and routing.
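A first iteration of such filtering can be modelled as an ordered list of rules where the first match wins and an empty recipient list suppresses the alert. The Python sketch below only models the logic you might express in an IRIS routing rule; the `Alert` and `Rule` types, the glob and regular-expression matching, and the fallback to business partner contacts (for example, loaded from a configuration management database) are all assumptions.

```python
import fnmatch
import re
from dataclasses import dataclass, field


@dataclass
class Alert:
    source: str  # configuration item that raised the alert
    text: str
    business_partner: str = ""


@dataclass
class Rule:
    source_glob: str  # e.g. "HL7.Out.*"
    text_pattern: str  # regular expression searched in the alert text
    recipients: list[str] = field(default_factory=list)  # empty list = suppress

    def matches(self, alert: Alert) -> bool:
        return fnmatch.fnmatchcase(alert.source, self.source_glob) and bool(
            re.search(self.text_pattern, alert.text)
        )


def route(alert: Alert, rules: list[Rule],
          partner_contacts: dict[str, list[str]], default: list[str]) -> list[str]:
    """Return the recipients for an alert; the first matching rule wins."""
    for rule in rules:
        if rule.matches(alert):
            return rule.recipients
    # No explicit rule: notify the business partner's contacts if known, else the default list.
    return partner_contacts.get(alert.business_partner, default)
```

Starting with an empty rule list sends everything to the default recipients; each suppression or redirection rule is then added as a specific source of noise is identified.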

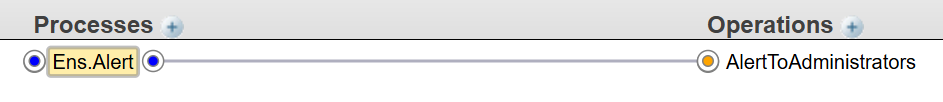

In its easiest form, alerts are routed by an EnsLib.MsgRouter.RoutingEngine to EnsLib.EMail.AlertOperation, sending all warnings to configured email addresses via SMTP:

To go further, we should define alert processors.

The CreateManagedAlertRule setting of Ens.Alerting.AlertManager lets you specify a rule that decides whether to create a managed alert.

The OnProcessNotificationRequest() method of Ens.Alerting.NotificationManager allows you to determine whether a managed alert should be notified.
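The two decisions can be pictured as separate predicates: one deciding whether an incoming alert deserves a managed alert, the other deciding whether a managed alert should be notified now. The Python sketch below is a simplified model, not the IRIS API; the policies it encodes (track only alerts linked to a business partner, always notify critical or escalated alerts, notify others only during working hours) are hypothetical examples.

```python
from dataclasses import dataclass
from datetime import datetime


@dataclass
class ManagedAlert:
    source: str
    business_partner: str
    escalation_level: int = 0
    critical: bool = False


def should_create_managed_alert(business_partner: str) -> bool:
    # Hypothetical policy: only track alerts from hosts linked to a known business partner.
    return business_partner != ""


def in_working_hours(now: datetime) -> bool:
    # Monday to Friday, 08:00 to 17:00 (datetime.weekday(): Monday is 0).
    return now.weekday() < 5 and 8 <= now.hour < 17


def should_notify(alert: ManagedAlert, now: datetime) -> bool:
    # Critical or escalated alerts are always notified; others only during working hours.
    if alert.critical or alert.escalation_level > 0:
        return True
    return in_working_hours(now)
```

Keeping the two stages separate means an alert can still be tracked as a managed alert outside working hours, and be notified later, instead of being dropped.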

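Custom rule functions can help you make those decisions. Below are some practical examples.

An alert's SourceConfigName identifies the host that raised it. To identify the configuration item at the origin of the session instead, pass AlertRequest.SessionId (the ID of the session's first message header) to a function like the one shown below: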

```objectscript
Include Ensemble

/// Custom functions for alert management rules
Class dc.alert.FunctionSet Extends Ens.Rule.FunctionSet
{

/// Returns the SourceConfigName of the message header with the given ID, or "" if it cannot be opened.
/// Called with AlertRequest.SessionId, it returns the configuration item at the origin of the session.
ClassMethod HeaderSourceConfigName(headerId As %Integer) As %String
{
    #Dim header As Ens.MessageHeader
    set header = ##class(Ens.MessageHeader).%OpenId(headerId)
    return $select($isobject(header):header.SourceConfigName,1:"")
}

/// Returns the BusinessPartner setting of a configuration item, or "" if it is not set.
ClassMethod ConfigNameBusinessPartner(configName As Ens.DataType.ConfigName) As %String
{
    return:$get(configName)="" ""
    return $get($$$ConfigSetting(configName,"Host","BusinessPartner"))
}

}
```

To send low-criticality alerts only during working hours, define a schedule covering those hours, for instance:

STOP:WEEK-*-01T17:00:00,START:WEEK-*-01T08:00:00,STOP:WEEK-*-02T17:00:00,START:WEEK-*-02T08:00:00,STOP:WEEK-*-03T17:00:00,START:WEEK-*-03T08:00:00,STOP:WEEK-*-04T17:00:00,START:WEEK-*-04T08:00:00,STOP:WEEK-*-05T17:00:00,START:WEEK-*-05T08:00:00
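Typing such a string by hand is error-prone. The following Python helper, a hypothetical convenience rather than an IRIS utility, rebuilds it from day numbers and start and stop times; with its default arguments it produces exactly the example above.

```python
from collections.abc import Iterable


def working_hours_schedule(days: Iterable[int] = range(1, 6),
                           start: str = "08:00:00",
                           stop: str = "17:00:00") -> str:
    """Build a STOP/START schedule string in the format shown above.

    For each day number, it emits the STOP event (end of the working day)
    followed by the START event (beginning of the working day), matching the
    order used in the example.
    """
    events = []
    for day in days:
        events.append(f"STOP:WEEK-*-{day:02d}T{stop}")
        events.append(f"START:WEEK-*-{day:02d}T{start}")
    return ",".join(events)
```

For a different shift, only the arguments change, e.g. `working_hours_schedule(start="07:30:00", stop="16:00:00")`.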

To avoid repeating the same alert, the alert manager rule can use the IsRecentManagedAlert() function of Ens.Alerting.Rule.FunctionSet.
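Conceptually, duplicate suppression compares a new alert with those seen recently. The Python class below is a simplified model and not the implementation of IsRecentManagedAlert(): it assumes two alerts are duplicates when they share the same source and text, and that the time window restarts only when a new managed alert is actually created.

```python
from datetime import datetime, timedelta


class RecentAlertFilter:
    """Suppress alerts that repeat the same (source, text) within a time window."""

    def __init__(self, window: timedelta) -> None:
        self.window = window
        # (source, text) -> time the last managed alert was created for that key
        self._created: dict[tuple[str, str], datetime] = {}

    def should_create(self, source: str, text: str, now: datetime) -> bool:
        key = (source, text)
        last = self._created.get(key)
        if last is not None and now - last < self.window:
            return False  # duplicate of a recent alert: suppress it
        self._created[key] = now  # new managed alert: restart the window
        return True
```

Because suppressed duplicates do not restart the window, an alert that keeps firing still produces a new managed alert once per window instead of being silenced forever.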

Alert notifications can also be integrated with external monitoring tools such as Prometheus or Nagios by extending Ens.Alerting.NotificationOperation.

Deploying Changes While Production Is Running

Code Changes

In his excellent series of articles on continuous delivery using GitLab, @Eduard Lebedyuk explains the options available for updating code and configuration while an interoperability production is running. This article focuses specifically on interoperability productions.

Importing and compiling classes does not affect business host processes that are already running; they can use the newly compiled code only after a restart.

Newly instantiated objects can be affected, though. For example, new business process instances immediately use the newly compiled code. The same applies to all code invoked in the context of another process, such as data transformations.

Even if you manage to avoid tight coupling, classes may still depend on one another, and you might need to control the precise order in which business hosts are disabled and enabled. To achieve this, use a scripted deployment that does the following:

- Back up all classes to an XML file

- Import and compile items from the repository branch
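When hosts depend on one another, the disable and enable order can be derived from the message flow. The Python sketch below assumes one plausible policy: senders are disabled before the hosts they target, so that no new messages reach a host being updated, and hosts are re-enabled in the reverse order. The host names and the dependency map are hypothetical; `graphlib` requires Python 3.9 or later, and a cycle in the map raises `graphlib.CycleError`.

```python
from graphlib import TopologicalSorter


def restart_plan(depends_on: dict[str, set[str]]) -> tuple[list[str], list[str]]:
    """Compute the order in which to disable and re-enable business hosts.

    depends_on maps each host to the hosts it sends messages to (its targets).
    """
    # static_order() lists every node after its predecessors; with targets as
    # predecessors, targets come first, which is the enable order.
    enable_order = list(TopologicalSorter(depends_on).static_order())
    # Disabling runs the other way: senders first, targets last.
    disable_order = list(reversed(enable_order))
    return disable_order, enable_order
```

The resulting lists can then drive whatever calls your deployment script uses to disable hosts before importing and compiling, and to enable them afterwards.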

Here is an example script to back up classes:

```bat
@echo off
setlocal
rem A utility to back up IRIS Health classes from a namespace to an XML file
rem
rem Usage:
rem   backup-classes <iris instance> <iris namespace> [<target file>]
rem
rem Example:
rem   backup-classes IRISHM PROD
rem
rem IRIS installation folder
rem
set IRISHome=c:\intersystems\IRISHealth
set IRISInstance=%1
set IRISNameSpace=%2
set BackupFile=%3
rem current date as yyyyMMdd, used in the default file name
for /f %%i in ('powershell -NoProfile -Command "Get-Date -Format yyyyMMdd"') do set CurrentDate=%%i
rem IRIS resolves a relative file name against its own working directory,
rem so the default file name is made absolute (current shell directory)
if "%3"=="" set "BackupFile=%CD%\classes-%CurrentDate%.xml"
echo %CurrentDate%
echo exporting classes to %BackupFile%
"%IRISHome%\bin\irissession" %IRISInstance% -U %IRISNameSpace% "##class(%%SYSTEM.OBJ).ExportAllClasses(""""""%BackupFile%"""""")"
echo:
echo Finished exporting classes
```

Here is a sample script to import and compile UDL items from the repository:

```bat
@echo off
setlocal
rem A utility to clone IRIS Health items from a git repository and deploy (load, compile) them to an IRIS instance
rem
rem Usage:
rem   deploy-classes <iris instance> <iris namespace>
rem
rem Example:
rem   deploy-classes IRISHEALTH SCRATCH
rem
rem Prerequisites:
rem   * git installed and in path
rem   * InterSystems IRIS Health installed
rem   * disk space in %TEMP% volume for a clone of the repository
rem   * existing IRIS Health instance and namespace
rem
rem IRIS installation folder
rem
set IRISHome=c:\intersystems\IRISHealth
rem
rem repository http URL
rem
set Repository=http://git.stpierre-bru.be/integration/iris-health.git
set Branch=develop
set TargetDir=%TEMP%\iris-health-deploy
set IRISInstance=%1
set IRISNameSpace=%2
echo Cleaning target directory
if exist "%TargetDir%" rmdir /s/q "%TargetDir%"
echo Cloning repository %Repository% branch %Branch%
git clone --branch %Branch% %Repository% "%TargetDir%"
rem stop here if the clone failed
if errorlevel 1 exit /b 1
echo Loading UDL items
"%IRISHome%\bin\irissession" %IRISInstance% -U %IRISNameSpace% "##class(%%SYSTEM.OBJ).ImportDir(""""""%TargetDir%\src"""""",""""""*.*"""""",""""""ckbry"""""",,1)"
```

Configuration Changes

Because interoperability productions run continuously, and deployments require tight control over which classes are compiled and when, relative to the running business hosts, I would not recommend using IPM at the moment.

I do not know of any sites that use the Management Portal features for deployment. This may be because building deployment packages with the Management Portal requires a source environment that strictly mirrors the live production environment.

When starting from scratch, even with a single repository and module for your interoperability code, I recommend using deployment scripts.

Deployment scripts can be run ‘manually’ with the help of the Management Portal, terminal, VSCode or a mix of those.

For instance, when deploying new business hosts, I usually copy their settings from the QA environment production class XData block, adjusting the resulting XML elements in the production environment as needed.
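This copy-and-adjust step can be scripted. The Python sketch below assumes the usual production XData layout (`<Item>` elements with `<Setting Target="Adapter" Name="Port">` style children); it extracts one item from the QA production definition, overrides or adds selected settings, and returns the XML fragment to paste into the production environment's definition. The function name and the override format are hypothetical.

```python
import xml.etree.ElementTree as ET


def copy_item(production_xml: str, item_name: str,
              overrides: dict[tuple[str, str], str]) -> str:
    """Extract one <Item> from a production XData block and adjust its settings.

    overrides maps (Target, Name) of a <Setting> to its new value, e.g.
    ("Adapter", "IPAddress") -> "10.1.2.3"; missing settings are added.
    """
    root = ET.fromstring(production_xml)
    item = next((i for i in root.findall("Item") if i.get("Name") == item_name), None)
    if item is None:
        raise KeyError(f"no item named {item_name!r}")
    settings = {(s.get("Target"), s.get("Name")): s for s in item.findall("Setting")}
    for (target, name), value in overrides.items():
        setting = settings.get((target, name))
        if setting is None:
            setting = ET.SubElement(item, "Setting", Target=target, Name=name)
        setting.text = value
    item.tail = None  # drop trailing whitespace inherited from the source document
    return ET.tostring(item, encoding="unicode")
```

Keeping the environment-specific values (addresses, ports, credentials names) in the overrides makes the differences between QA and production explicit and reviewable.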

For the rare complex or tedious change, I tend to write ad hoc class methods that update the production configuration programmatically. These methods are tested in the QA environment first.

Hotfixes and Small Changes

Hotfixes and small configuration changes that affect only a few items can be deployed using the following:

- The Terminal: using %SYSTEM.OBJ class methods, or Security.* classes for security elements such as roles, SQL privileges and applications

- VSCode server-side editing or Studio: Studio supports drag-and-drop of classes between instances, which I find very handy.

- Management Portal: To manually import compilable items (classes, LUTs, HL7 schemas,...) and configuration elements (Web Applications, Roles,...).