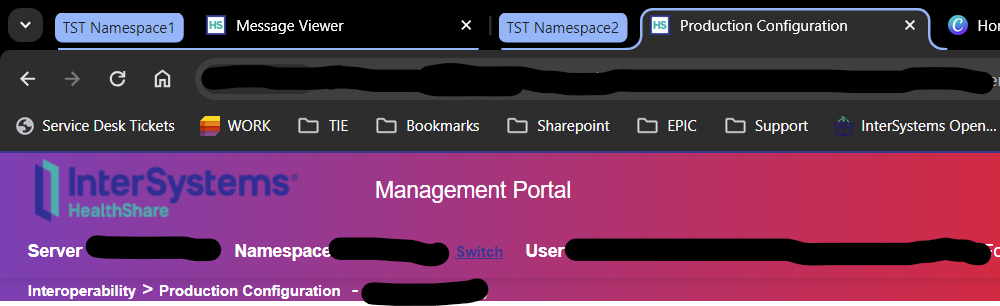

Welcome to the next chapter of my CI/CD series, where we discuss possible approaches toward software development with InterSystems technologies and GitLab.

Today, we continue talking about Interoperability, specifically monitoring your Interoperability deployments. If you haven't yet, set up Alerting for all your Interoperability productions to get alerts about errors and production state in general.

Inactivity Timeout is a setting common to all Interoperability Business Hosts. A business host has an Inactive status after it has not received any messages within the number of seconds specified by the Inactivity Timeout field. The production Monitor Service periodically reviews the status of business services and business operations within the production and marks the item as Inactive if it has not done anything within the Inactivity Timeout period.

The default value is 0 (zero). If this setting is 0, the business host will never be marked Inactive, no matter how long it stands idle.
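To make the rule concrete, here is a minimal sketch in Python of the decision described above. It is only an illustration, not the Monitor Service's actual code: the function name `is_inactive`, its parameters, and the strict comparison at the exact boundary are assumptions made for this example.

```python
from datetime import datetime, timedelta


def is_inactive(last_activity: datetime, now: datetime, inactivity_timeout: int) -> bool:
    """Illustrative model of the Inactive check (hypothetical helper, not a product API).

    inactivity_timeout is in seconds; 0 disables the check entirely.
    """
    if inactivity_timeout == 0:
        # A timeout of 0 means the host is never marked Inactive.
        return False
    # Assumption: the host counts as Inactive once idle time exceeds the timeout.
    return (now - last_activity) > timedelta(seconds=inactivity_timeout)
```

With a timeout of 300 seconds, a host that last processed a message ten minutes ago would be reported as Inactive, while the same host with a timeout of 0 would not.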

This is an extremely useful setting since it generates alerts, which, together with configured alerting, allow for real-time notifications about production issues. An idle Business Host may point to issues with the production, integrations, or network connectivity that are worth looking into.

However, a Business Host can have only one constant Inactivity Timeout value, which might generate unnecessary alerts during known periods of low traffic: nights, weekends, holidays, etc.
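One way to picture what a "dynamic" timeout means is a function that picks the value from the current time instead of using a single constant. The sketch below is a hypothetical illustration in Python: the timeout values, the 08:00-20:00 working window, and the name `timeout_for` are all assumptions, and holidays are left out for brevity.

```python
from datetime import datetime

# Hypothetical values, in seconds; tune them to your own traffic profile.
PEAK_TIMEOUT = 300       # busy hours: alert after 5 idle minutes
OFF_PEAK_TIMEOUT = 3600  # nights and weekends: tolerate an idle hour


def timeout_for(now: datetime) -> int:
    """Return the Inactivity Timeout to apply at the given moment (illustrative only)."""
    is_weekend = now.weekday() >= 5            # Saturday = 5, Sunday = 6
    is_night = now.hour < 8 or now.hour >= 20  # assumed working window: 08:00-20:00
    return OFF_PEAK_TIMEOUT if (is_weekend or is_night) else PEAK_TIMEOUT
```

A value chosen this way would still need to be applied to the Business Host setting on a schedule.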

In this article, I will outline several approaches to implementing a dynamic Inactivity Timeout. While I do provide a working example (currently running in production for one of our customers), this article is more of a guideline for building your own dynamic Inactivity Timeout implementation, so don't treat the proposed solution as the only option.
