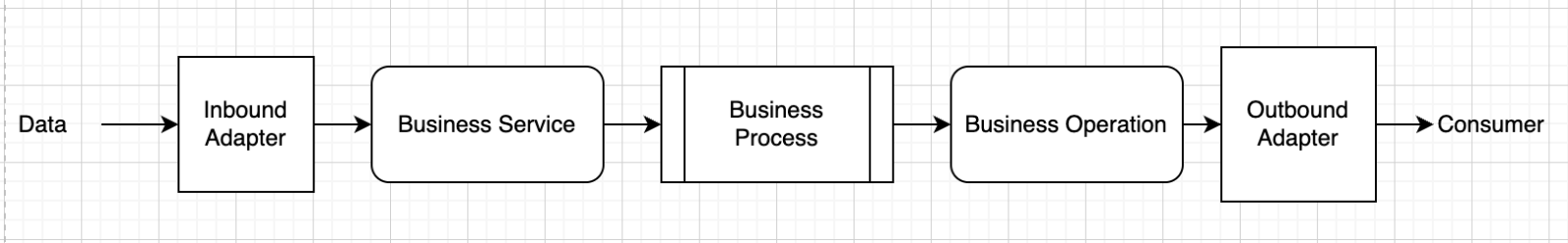

Low-code development is becoming increasingly important across all industries. Anyone getting started with low-code programming will inevitably come across Node-RED. InterSystems IRIS is renowned for its interoperability, so it should be accessible via Node-RED as well.